5 Questions Every VP of Engineering Should Ask About Their Test Operations

Author

Neerav Singh

Technical Product Specialist

Reading Time

5 min read

- 1. Can my team tell me, right now, where every prototype is and what's being done to it?

- 2. Are my teams working from the same information or just assuming they are?

- 3. How much of my engineers' time is actually spent on real engineering?

- 4. If an auditor walked in tomorrow, what would my test documentation look like?

- 5. Is my lab scaling with my business or holding it back?

- The Conclusion That's Hard to Sit With

There's a particular kind of silence that falls over a lab when something goes wrong late in the testing cycle.

Not the silence of focus or concentration. A heavier kind. The kind that settles when an engineer pulls up a test result and realizes the data tied to it is missing. Or when two teams discover they've been running conflicting versions of the same test because nobody had a single source of truth. Or when a prototype fails a verification check and nobody can trace exactly where in the process the requirement was missed, because the tests, the lab schedule and the results all live in different places, managed by different people, tracked in different ways.

That silence is expensive. Not just in hours lost or reruns scheduled. In the quiet erosion of confidence that happens when a VP of Engineering walks into a review and realizes they can't actually answer the most basic question about their own operations: what is happening, right now, in my lab?

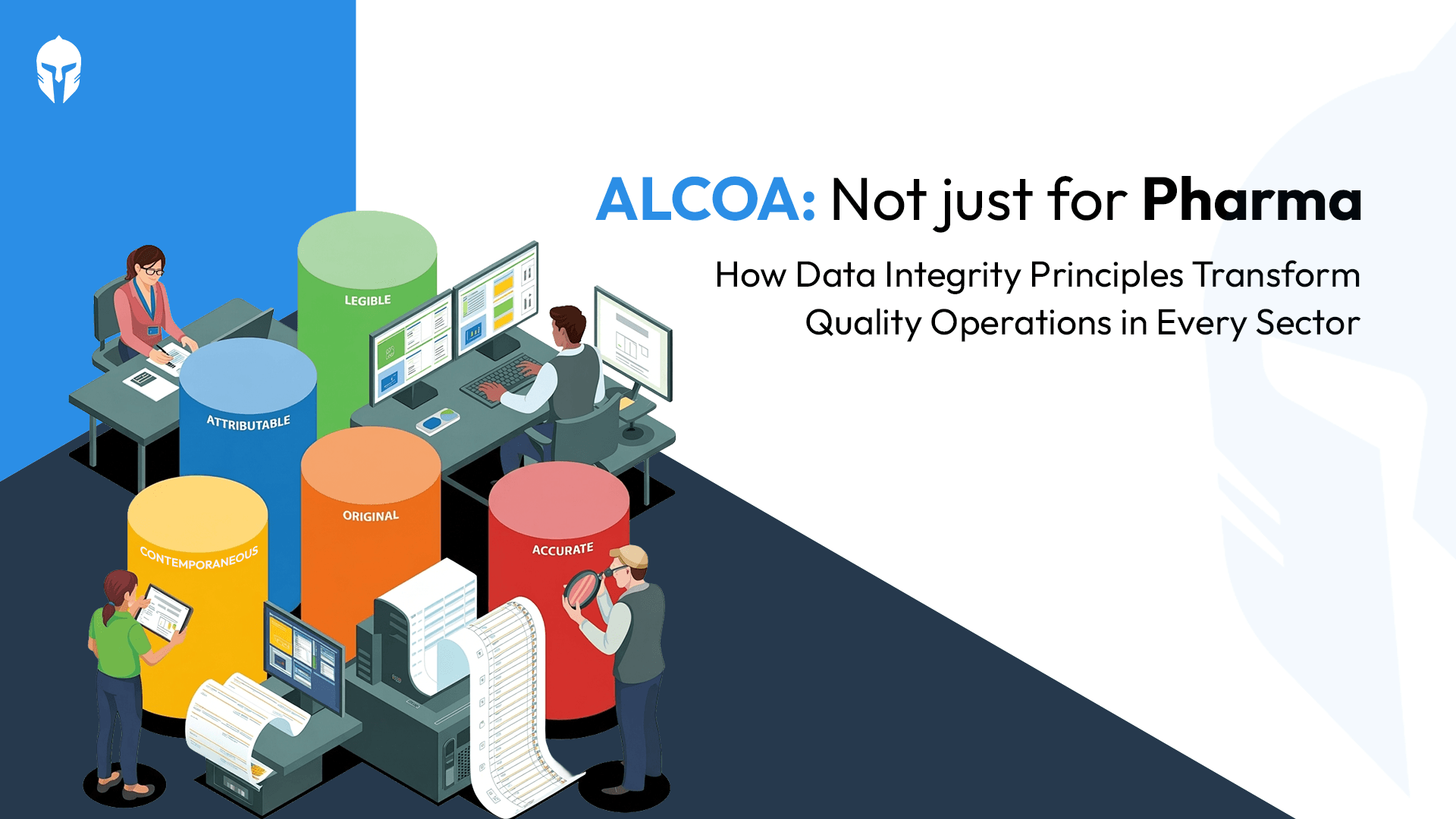

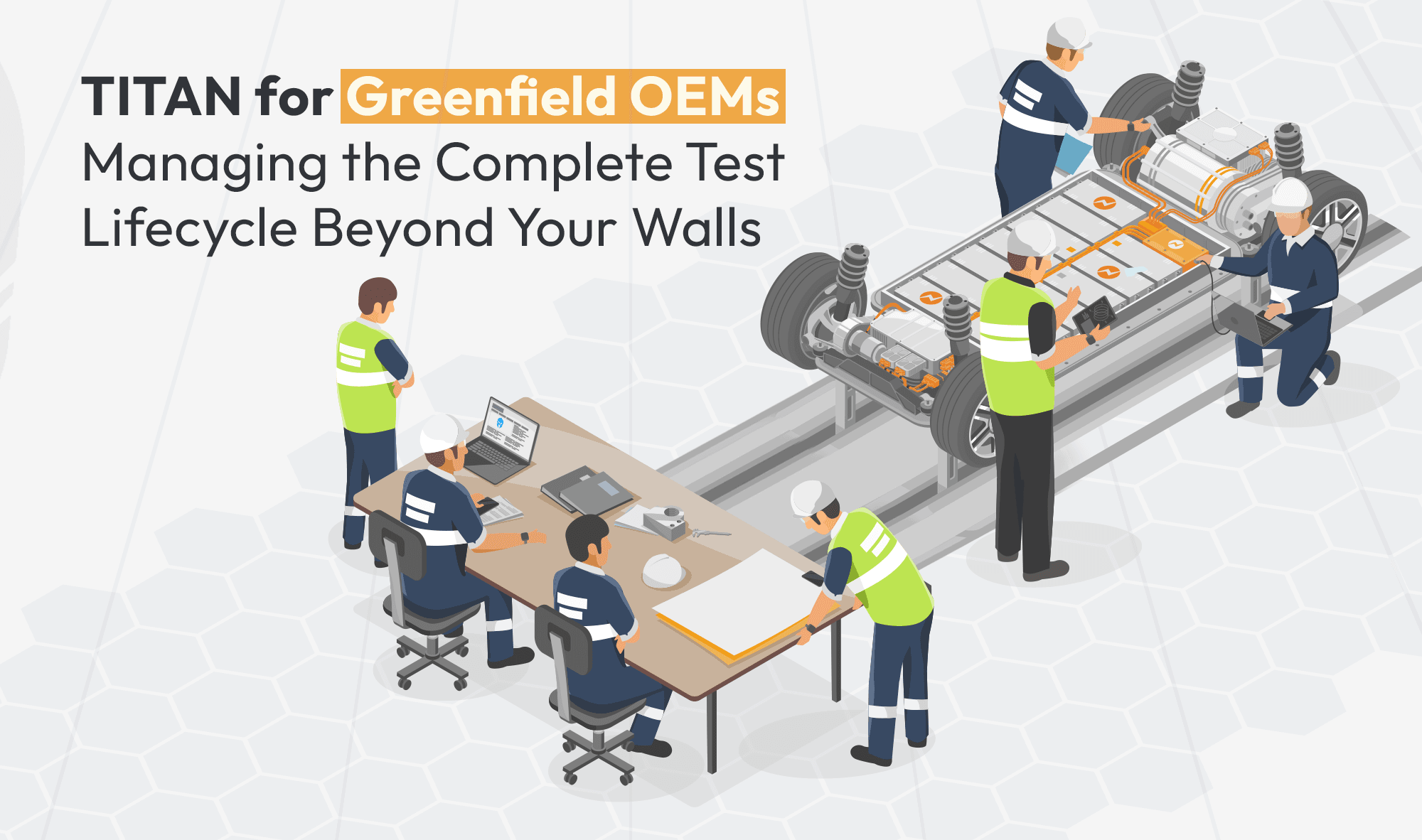

The unsettling truth is that this situation is far more common than anyone in leadership wants to admit. R&D labs at some of the world's most respected manufacturers, across automotive, aviation, marine, railway and consumer electronics, are still operating with fragmented tools, disconnected information flows and processes that were designed for a different era of product complexity. Engineers are talented. Labs are equipped. But the systems tying it all together are holding everyone back.

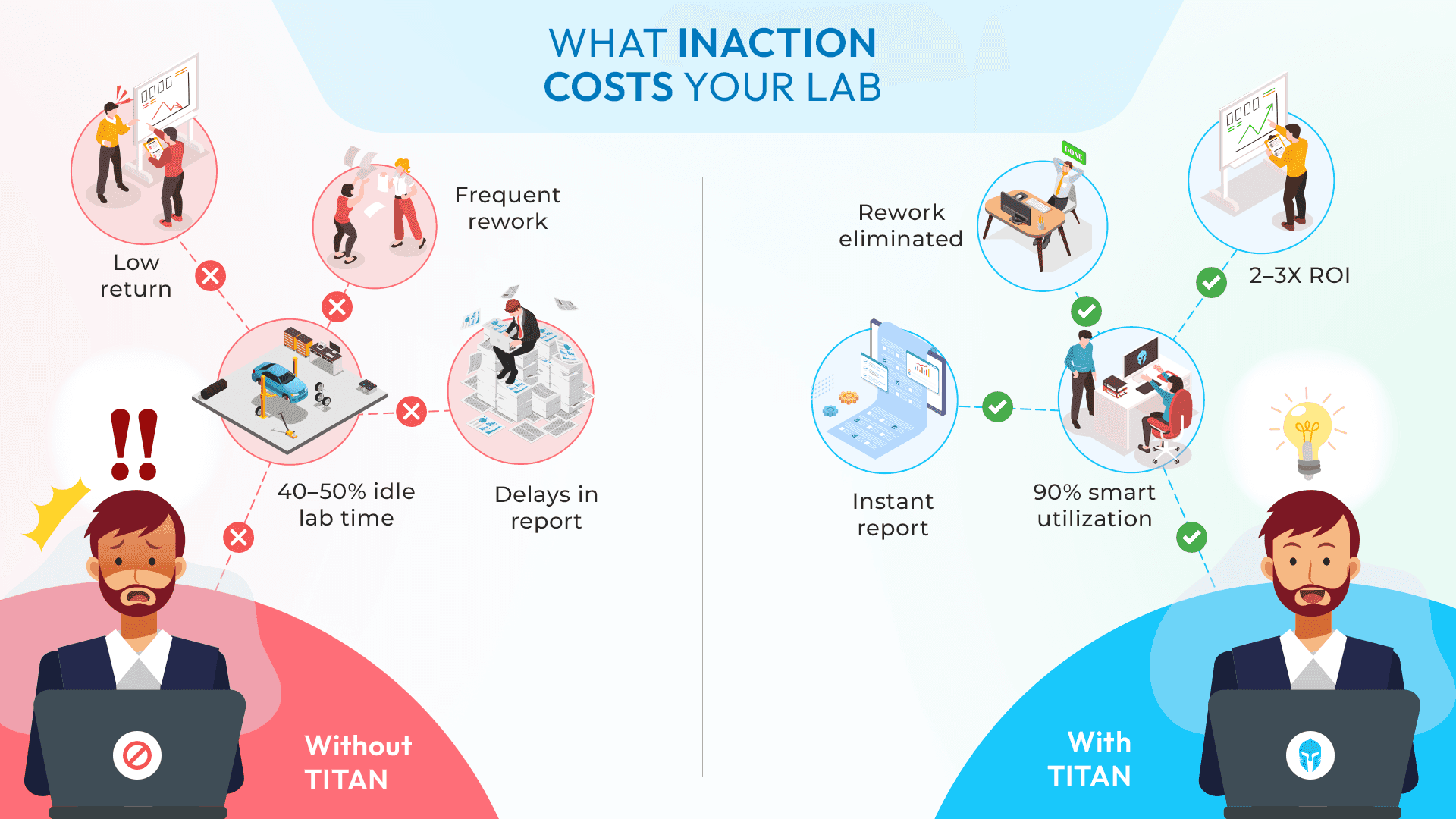

The numbers tell a story most organizations would prefer to ignore. Knowledge workers with specialty training spend 41% of their time on activities that do not require their expertise: calling vendors, coordinating schedules, hunting for missing data. Approximately 85% of product development organizations don't quantify the cost of delay for their projects, which means teams make daily tradeoffs about testing schedules without understanding what those delays cost. And 84% of executives believe data silos directly slow decision-making and reduce organizational agility.

The damage happens in the details. Human error rates in manual data entry usually hover around 1%, with laboratory studies finding error rates of almost 4%, and over 14% of those errors containing significant and potentially dangerous discrepancies. In calibration processes, 40% of calibrations contained errors when data had to be entered twice, once on paper and once into the system. These are the kinds of mistakes that trigger retests, invalidate batches and erode trust in the entire testing process.

The cumulative cost is staggering. According to the American Society for Quality, the cost of poor quality, including rework and scrap, can equal 15% to 20% of annual revenue. For a mid-sized manufacturer, that can mean tens of millions lost annually, not to catastrophic failures, but to the slow bleed of inefficiency that fragmented systems create. Human errors cause 23% of unplanned downtime incidents, the predictable result of forcing people to operate in systems that were never designed to work together.
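To make the 15% to 20% range concrete, here is a minimal arithmetic sketch. The revenue figure is a hypothetical chosen only for illustration; it does not come from the ASQ figure or from any particular manufacturer, and the function name is likewise an assumption.

```python
def cost_of_poor_quality(annual_revenue, low_pct=15, high_pct=20):
    """Return the (low, high) cost-of-poor-quality range in the same currency unit.

    The 15% and 20% defaults mirror the range quoted above; integer math
    keeps the result exact for whole-currency revenue figures.
    """
    return annual_revenue * low_pct // 100, annual_revenue * high_pct // 100


# Hypothetical mid-sized manufacturer with 200 million in annual revenue.
revenue = 200_000_000
low, high = cost_of_poor_quality(revenue)
print(f"Estimated cost of poor quality: {low:,} to {high:,} per year")
```

Swapping in your own revenue figure gives a rough order-of-magnitude estimate, not an audited number.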

Here are five questions that cut through that fog. If a VP of Engineering can't answer them clearly, it's worth paying attention to why.

1. Can my team tell me, right now, where every prototype is and what's being done to it?

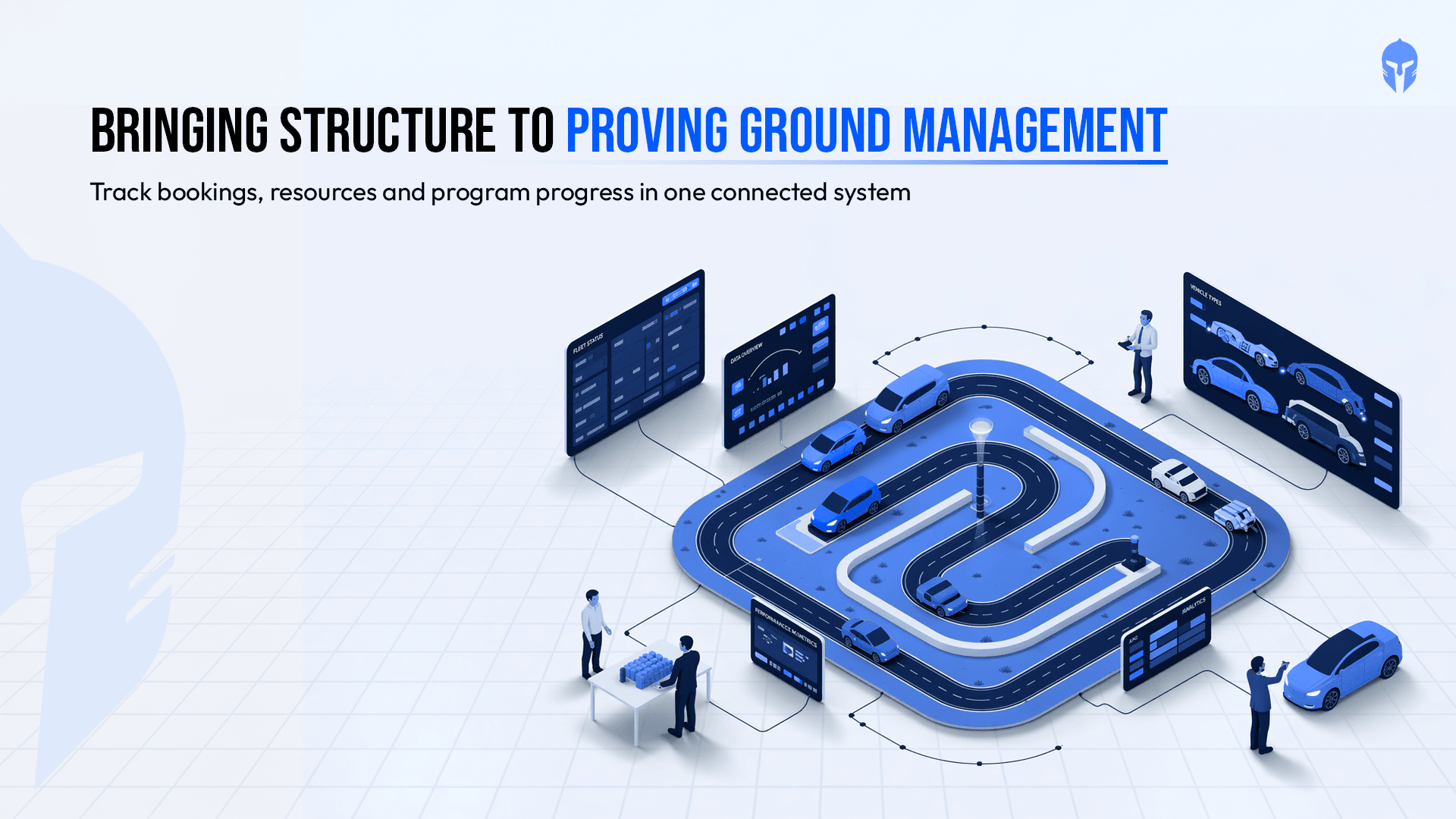

Prototype management sounds straightforward until a lab is running multiple concurrent test programs across different teams. At that point, the prototype, the physical test article that everything depends on, becomes one of the most fought-over, most mismanaged resources in the building.

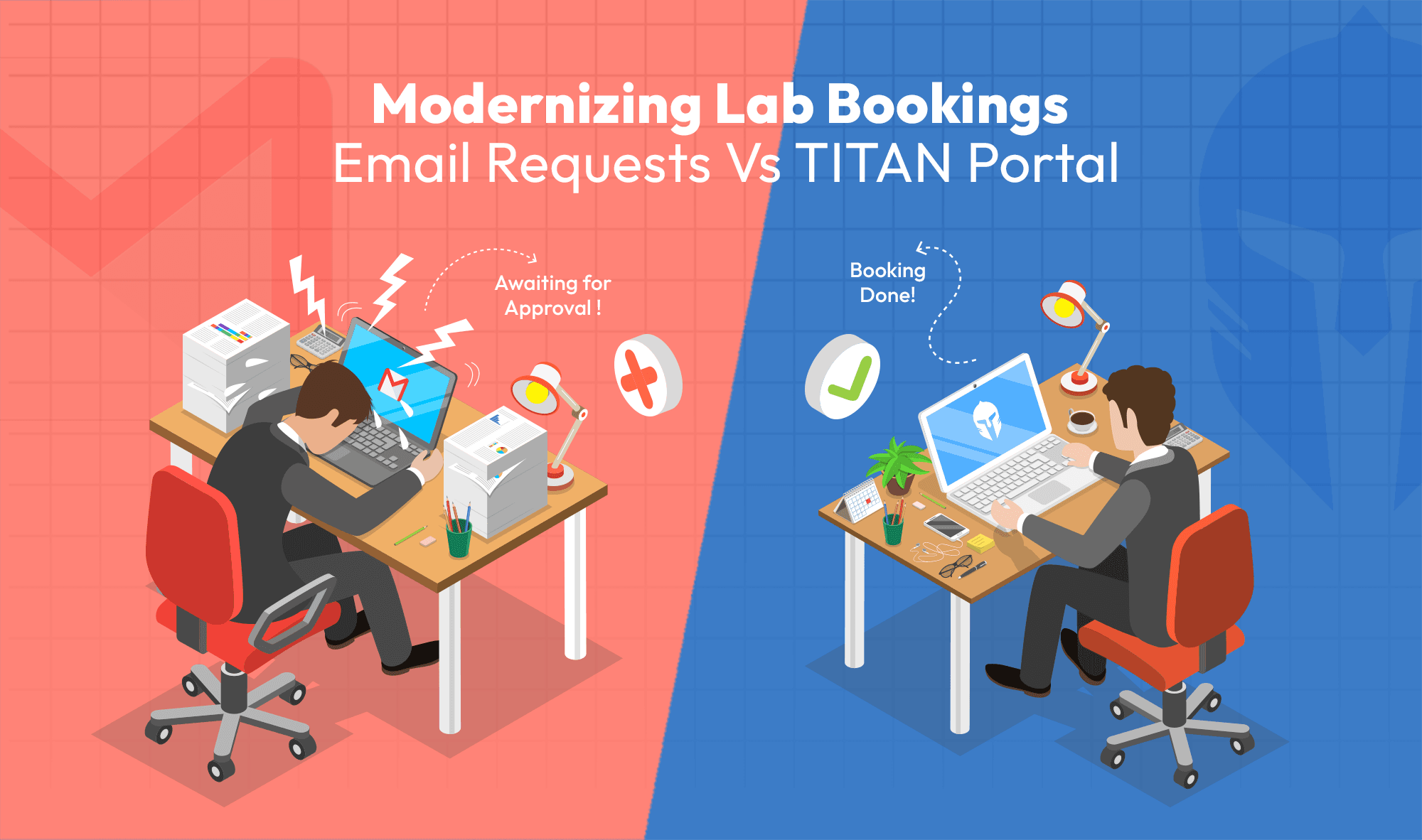

Teams double-book. Schedules conflict. Someone runs a test on a configuration that's already been updated without telling anyone. And when the results come back, nobody's entirely sure which version of the prototype was actually tested. The configuration history is in someone's inbox. Or on a shared drive nobody's updated. Or worse, in someone's notebook or memory.
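Double-booking is, at its core, an interval-overlap problem. The sketch below shows one way a scheduling check could flag it, assuming each booking records a prototype ID, a team and a start and end time. The `Booking` class, its field names and `find_double_bookings` are hypothetical and not taken from any specific lab management product.

```python
from dataclasses import dataclass
from datetime import datetime
from itertools import combinations


@dataclass(frozen=True)
class Booking:
    # Hypothetical record shape; real systems will carry more fields.
    prototype_id: str
    team: str
    start: datetime
    end: datetime


def find_double_bookings(bookings):
    """Return pairs of bookings that claim the same prototype at overlapping times."""
    conflicts = []
    for a, b in combinations(bookings, 2):
        same_prototype = a.prototype_id == b.prototype_id
        # Two half-open intervals overlap when each starts before the other ends.
        overlaps = a.start < b.end and b.start < a.end
        if same_prototype and overlaps:
            conflicts.append((a, b))
    return conflicts


bookings = [
    Booking("P-001", "Durability", datetime(2025, 3, 3, 9), datetime(2025, 3, 3, 13)),
    Booking("P-001", "Thermal", datetime(2025, 3, 3, 12), datetime(2025, 3, 3, 16)),
    Booking("P-002", "Thermal", datetime(2025, 3, 3, 12), datetime(2025, 3, 3, 16)),
]

for first, second in find_double_bookings(bookings):
    print(f"{first.prototype_id}: {first.team} overlaps with {second.team}")
```

The pairwise comparison is fine for a small schedule; a larger one would more likely sort bookings per prototype and compare neighbors.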

Real-time visibility into prototype status, configuration management, test and issue history and work order tracking isn't a luxury anymore. It's the foundation of a test operation that produces results teams can actually trust. When that visibility doesn't exist, engineers spend their time on admin work instead of actual engineering.

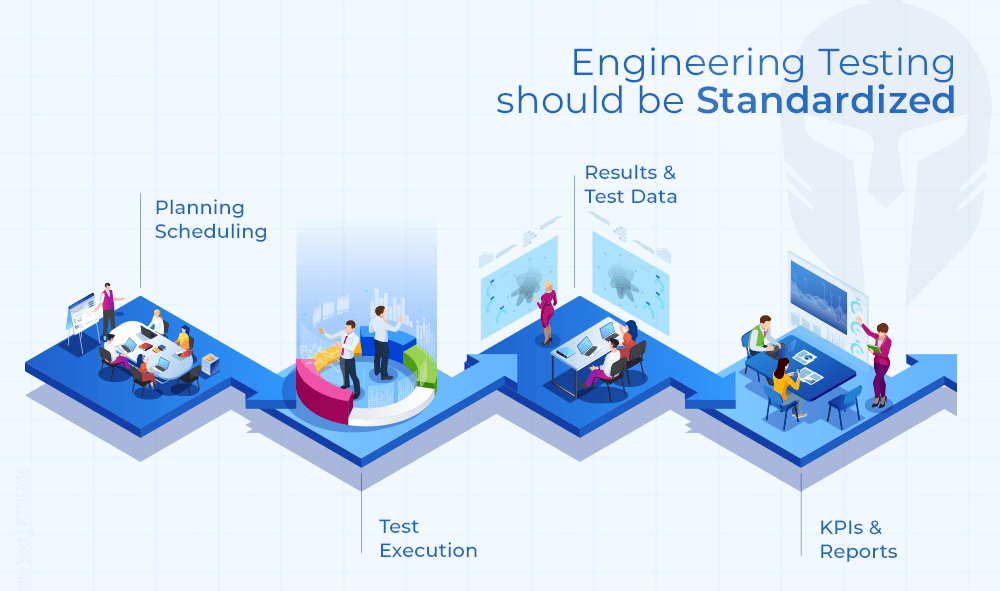

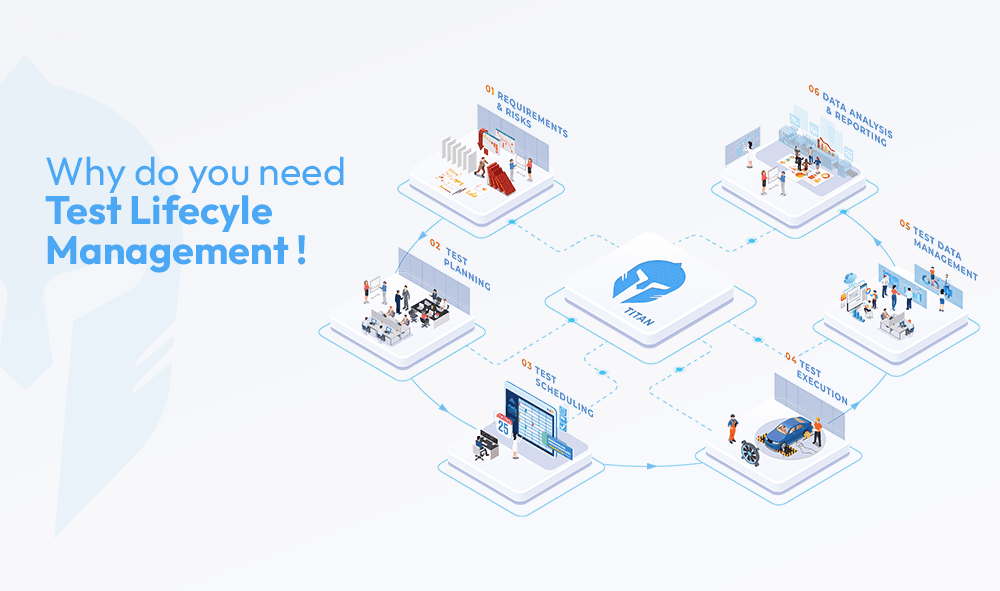

2. Are my teams working from the same information or just assuming they are?

This is the question most engineering leaders are afraid to ask directly, because the honest answer is usually uncomfortable or confusing.

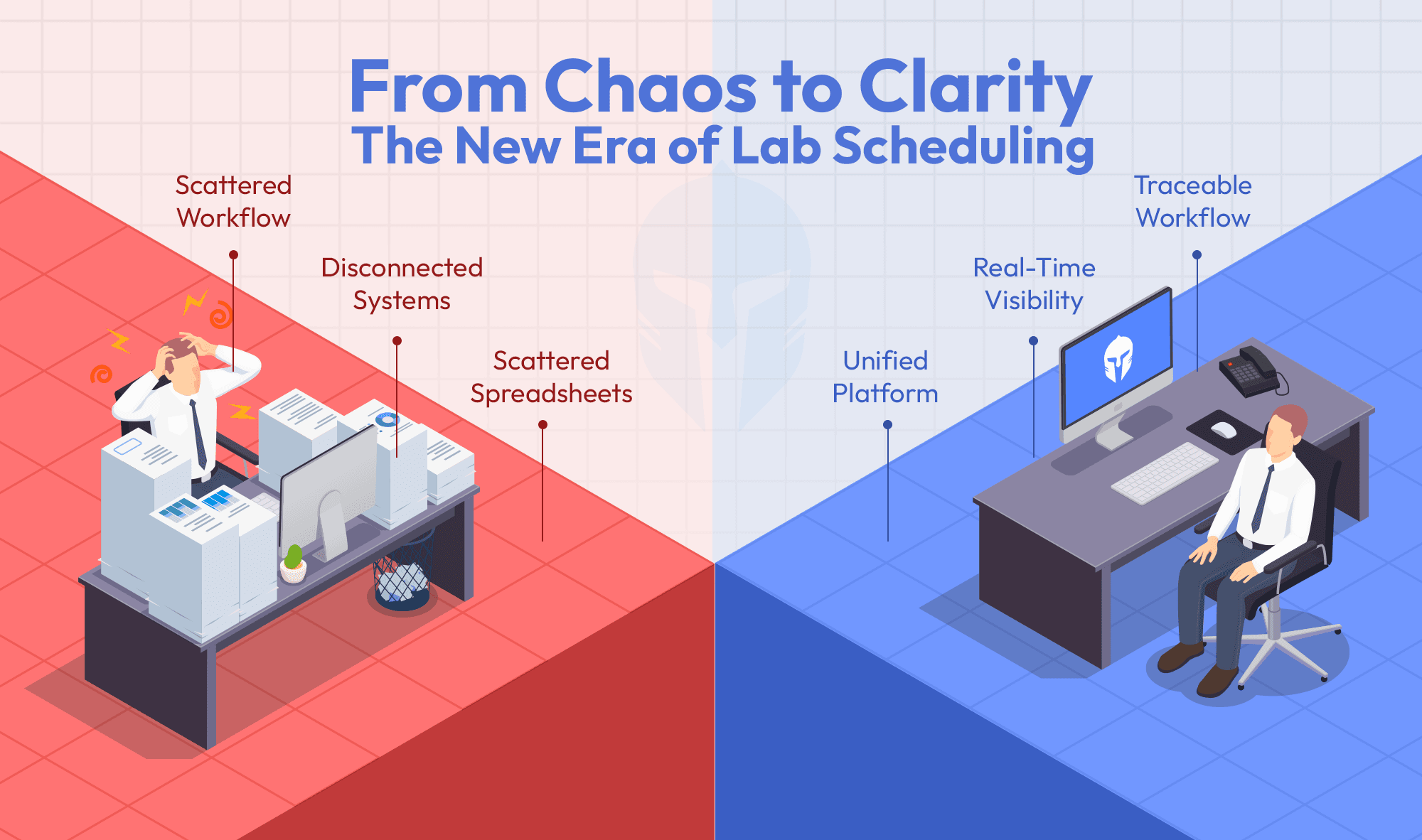

In labs where test plans, lab schedules, work orders and data all live in separate tools or in no tool at all, the answer is almost always the same: teams are working from their own version of the truth, syncing up occasionally in meetings and hoping the gaps don't surface at a critical moment. The information flow is disconnected. And when information doesn't flow, neither does accountability.

A test engineer can't verify performance against a test they can't easily access. A lab manager can't flag a scheduling conflict they can't see coming. A team lead can't track progress on a test that's being documented in three different places simultaneously. The result is inefficiency that compounds quietly, over weeks and months, until it becomes a quality issue or, worse, a compliance one.

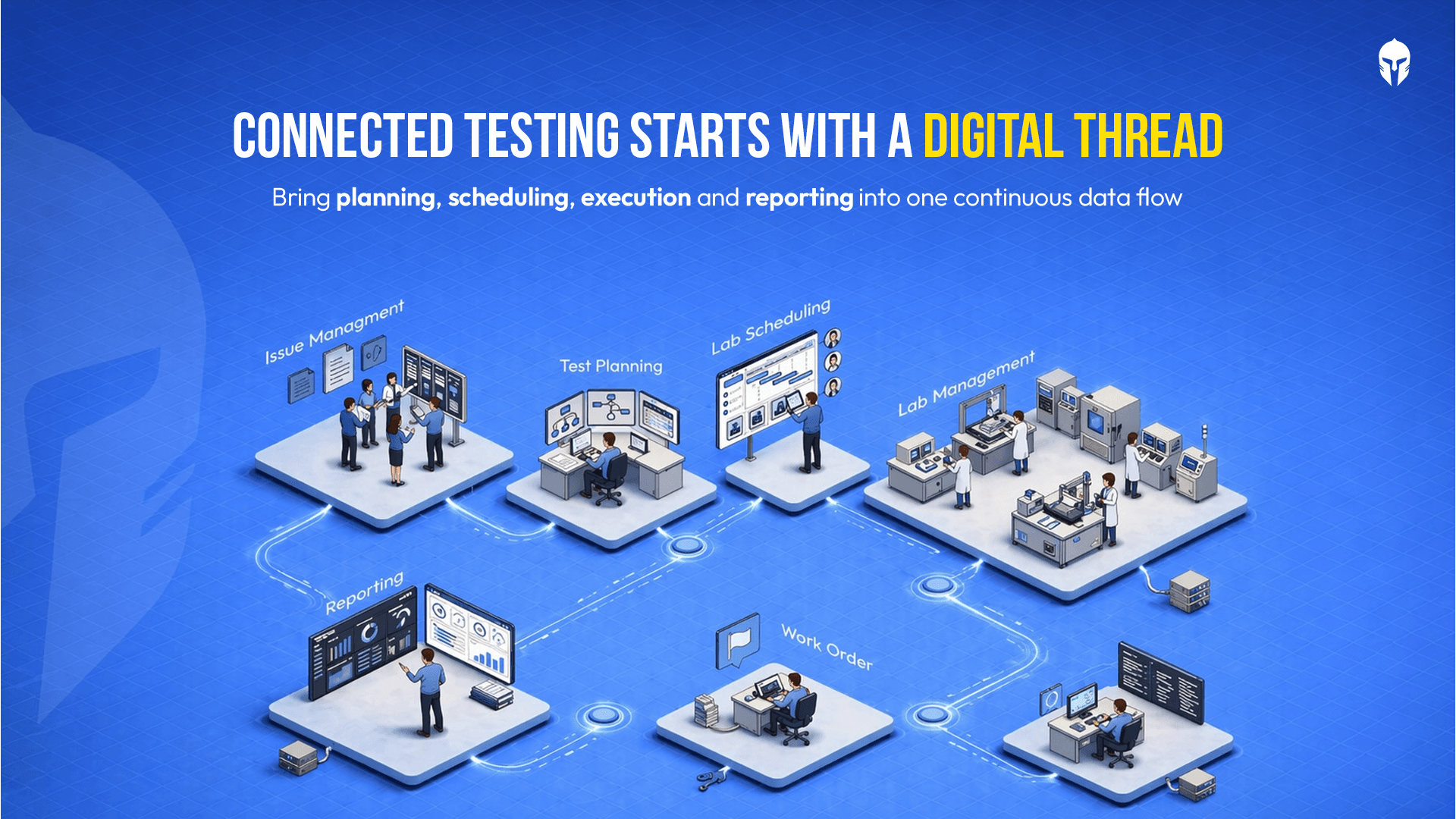

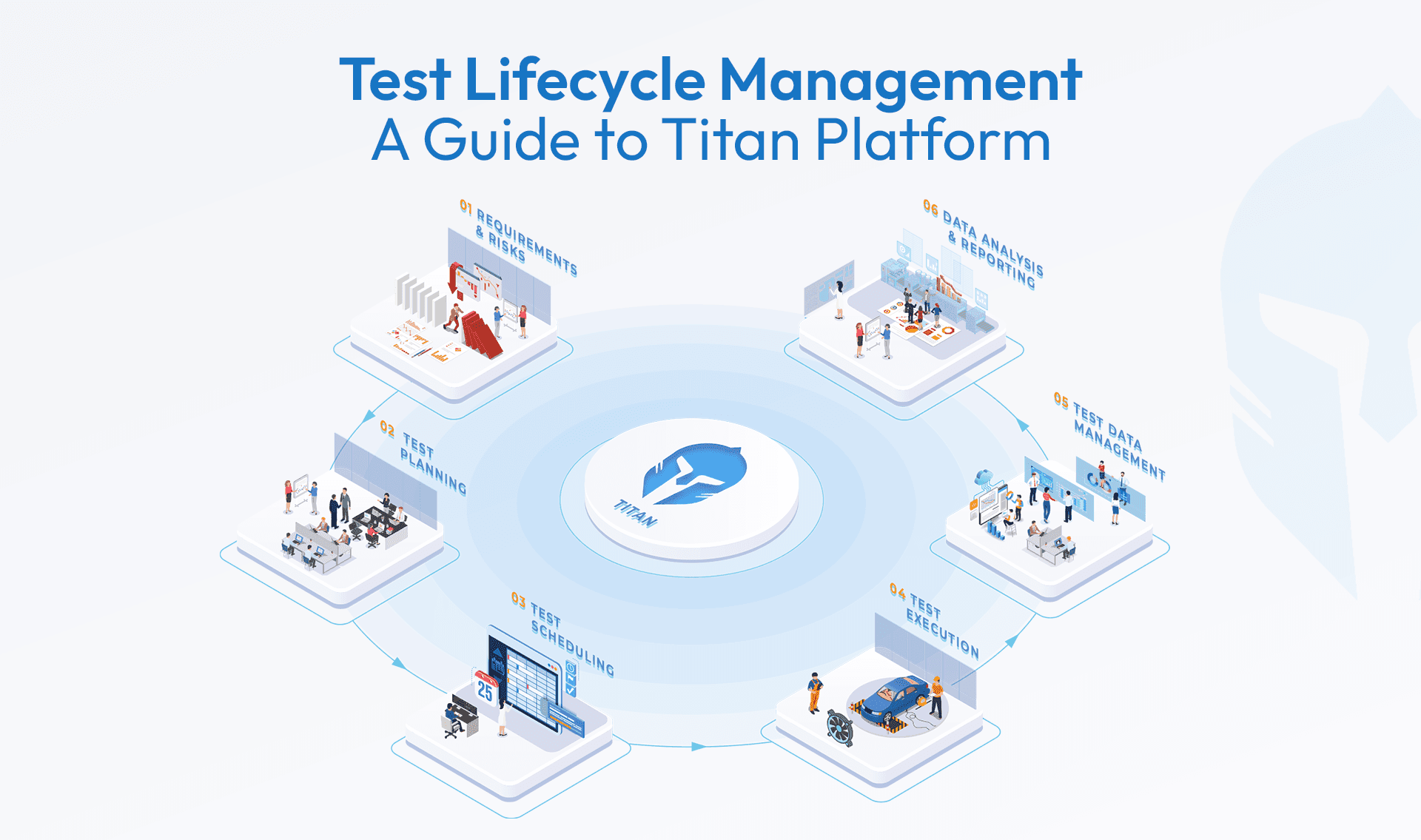

When everything is connected (tests, lab, prototype, maintenance, data and reports), teams stop assuming and start knowing. That's a fundamentally different state and the difference shows up in the output.

3. How much of my engineers' time is actually spent on real engineering?

This question tends to produce a lot of discomfort when asked honestly in a leadership setting, because the number is almost always lower than it should be.

In labs running on outdated or fragmented tools, engineers spend a remarkable portion of their time on things that have nothing to do with engineering. Searching for historical test data. Chasing down approvals on work orders. Manually reconciling test results. Rebuilding context that should already exist in a shared system. Sitting in coordination meetings that exist solely because the tools don't coordinate on their own, often triggered by conflict in the schedule.

The operational cost of this is significant. But the human cost is worth naming too. Engineers who trained for years to solve complex technical problems find themselves spending their days on administrative overhead. It's demoralizing and it's a direct threat to the quality of the work.

Reducing that administrative drag through standardized processes, integrated work order management and a system that handles scheduling, assignment and tracking automatically gives engineers back the time and mental space to do what they were hired to do.

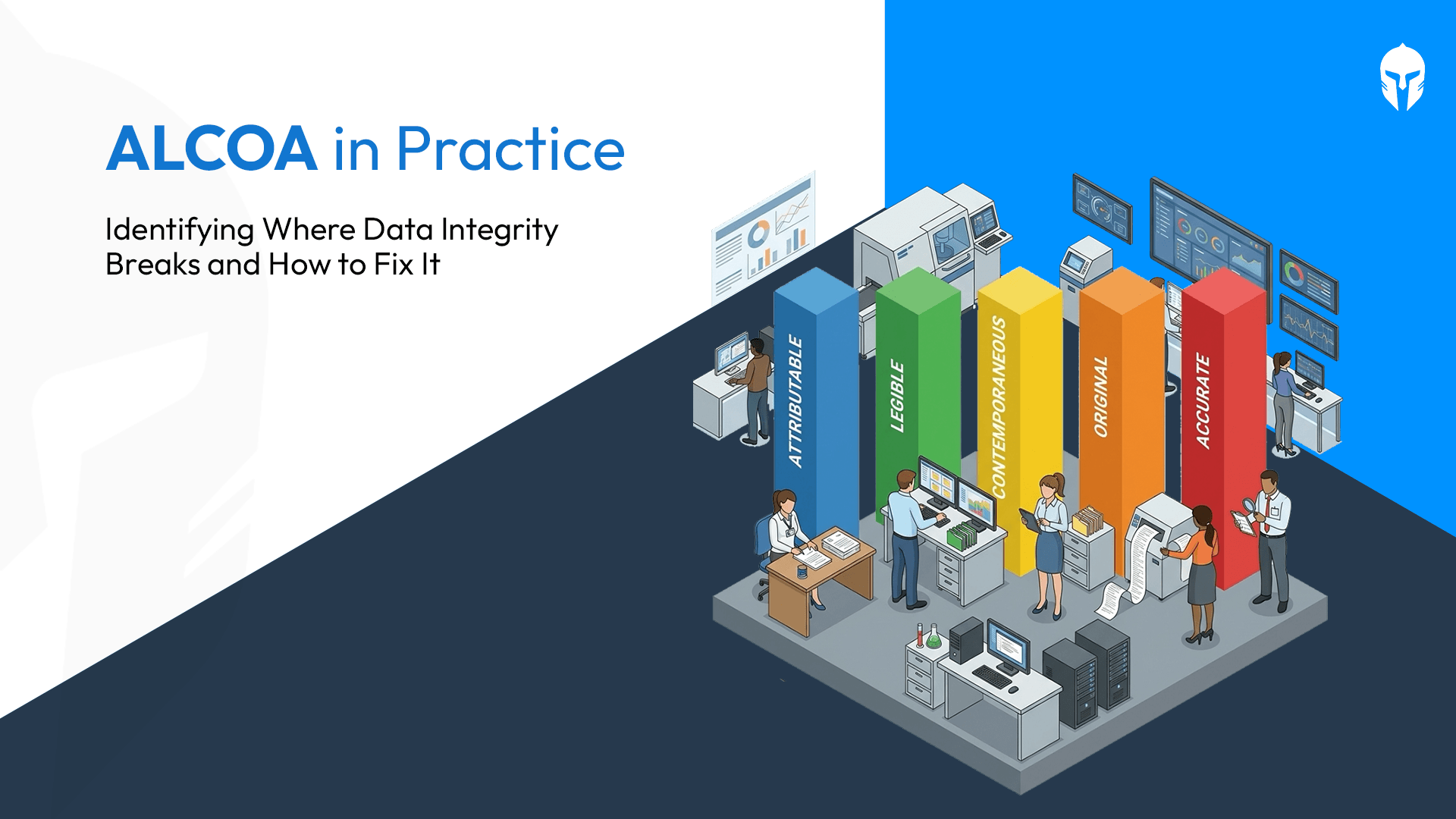

4. If an auditor walked in tomorrow, what would my test documentation look like?

This question has a way of clarifying priorities very quickly.

Audit readiness is one of those things that engineering operations either build into their daily process or scramble to reconstruct when it matters. The labs that scramble are the ones operating without full traceability, without a system that tracks every test, every result and every change in real time, end to end.

The labs that answer this question well are prepared because their normal operating state is already auditable. That's a discipline. And it's one that has to be built into the process, not bolted on afterward.
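One way to picture "already auditable" is an append-only record in which every change is chained to the one before it, so a later edit to history is detectable. The sketch below is only an illustration of that idea, using a SHA-256 hash chain from Python's standard library. The `AuditLog` class and its fields are hypothetical, timestamps and storage are omitted for brevity, and nothing here describes how any particular product implements traceability.

```python
import hashlib
import json


def _digest(body):
    """Hash an entry body deterministically (keys sorted before encoding)."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


class AuditLog:
    """Hypothetical append-only log; each entry stores the hash of the previous one."""

    def __init__(self):
        self._entries = []

    def record(self, actor, action, details):
        prev_hash = self._entries[-1]["hash"] if self._entries else ""
        body = {"actor": actor, "action": action, "details": details, "prev_hash": prev_hash}
        entry = dict(body, hash=_digest(body))
        self._entries.append(entry)
        return entry

    def verify(self):
        """Return True only if no entry was altered, removed or reordered."""
        prev_hash = ""
        for entry in self._entries:
            body = {key: value for key, value in entry.items() if key != "hash"}
            if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(body):
                return False
            prev_hash = entry["hash"]
        return True


log = AuditLog()
log.record("engineer_a", "test_executed", {"test_id": "T-100", "result": "fail"})
log.record("lab_manager", "retest_scheduled", {"test_id": "T-100"})
print("Log intact:", log.verify())
```

The design choice worth noting is that verification needs nothing beyond the log itself, which is what lets an audit start from the normal operating record rather than a reconstruction.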

5. Is my lab scaling with my business or holding it back?

Growth exposes the cracks that existing workloads have been papering over. Add more products, more test programs, more teams and more data, and a system that was just barely functional suddenly becomes visibly broken.

The labs that scale well share something in common: their underlying platform was built to grow with them. Modular enough to start with what matters most and expand when ready. Configurable enough to match the unique workflows of each testing team without compromising the consistency of the overall operation.

A multi-tenant architecture means different testing teams can each have their own dedicated space, customized to their specific needs, within a single unified enterprise-wide system. That's not a small thing. It's the difference between a lab that serves the business and a lab that the business has to work around.

The Conclusion That's Hard to Sit With

The five questions above aren't particularly technical. They don't require specialized knowledge to ask. Any VP of Engineering can walk into their lab tomorrow and start asking them.

What makes them hard isn't the asking. It's what happens when the answers reveal that the operation running behind those questions is still held together by workarounds, scattered spreadsheets, outdated tools and the heroic effort of individuals who have learned to compensate for systems that were never designed for the complexity they're now managing.

The product that eventually reaches a customer, the vehicle, the aircraft, the device, carries the quality of every decision made in that test process. Every double-booked component. Every result that couldn't be traced. Every hour an engineer spent searching for a file instead of solving an actual problem.

The lab is not a back-office function. And a VP of Engineering who treats their test operations as an afterthought, something to be managed cheaply and tolerated rather than invested in, will eventually face a product failure that traces all the way back to a system that was simply not up to the job.

What matters is whether your lab is ready for the demands of upcoming test cycles.

Evaluate Your Test Operations

Identify gaps in visibility, efficiency and control across your lab workflows