What -30°C teaches you about a tire

Author

Neerav Singh

Technical Product Specialist

Reading Time

3 min read

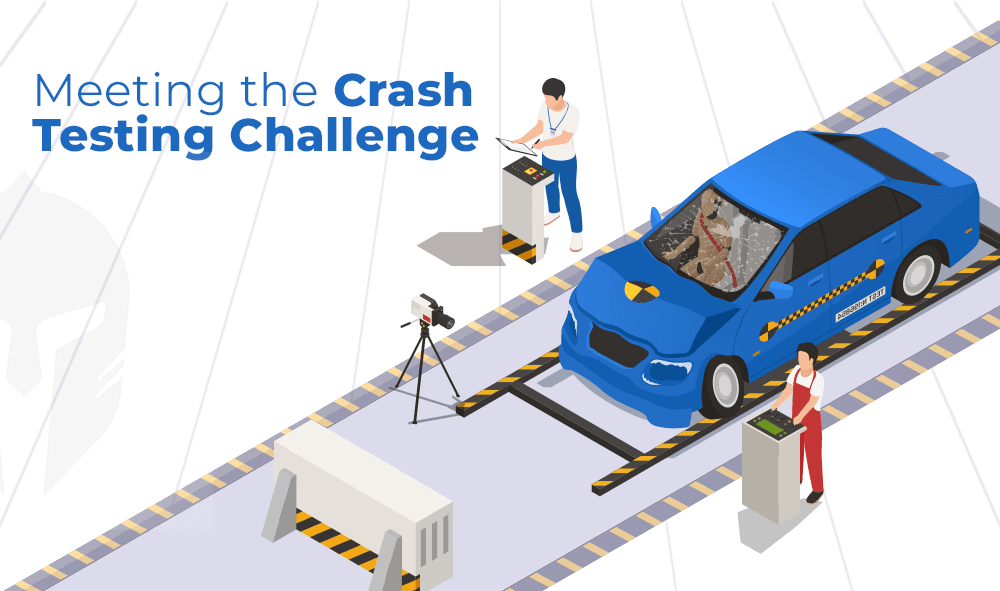

In northern Finland, engineers listen to rubber meet ice in silence so complete it feels deliberate. What they're really chasing, consistent and trustworthy data, turns out to depend as much on how a test program is run as on where it takes place.

Key Takeaways:

- Conditions are fleeting. The perfect test window (fresh snow, overnight ice and the right temperature) disappears fast. Miss it and you're building on approximations.

- Time is lost at the edges. Most teams lose ground before and after the test: in planning gaps, scattered results and knowledge that walks out the door at season's end.

- The real gap is operational. The distance between running a test and understanding what it means is where development cycles quietly stall.

- Structure is what makes testing count. A connected lifecycle, from planning through validation and into the next season, is what turns good conditions into great products.

There is a place in northern Finland where the sun does not bother showing up for weeks at a time.

The town is called Ivalo. Population: just over 3,000. The landscape stretches out in every direction, flat and frozen and blanketed in a silence so complete it feels almost deliberate. The birch trees stand still. The lake is a white sheet. And somewhere in the middle of all that stillness, engineers are at work.

They are listening to tires.

Not in a metaphorical sense. Literally listening. To the way rubber meets ice at different temperatures. To the micro-sounds of tread channels filling and releasing snow. To the kind of feedback that no simulation has quite managed to replicate, because the real world has a way of surprising even the most thorough models.

This is where tire development actually happens. Not in the brochure. Not on the spec sheet. Here.

The problem with borrowing time

For a long time, winter tire testing worked like this: you booked a window on a shared proving ground, flew your team out, packed in as many test runs as the schedule allowed and flew home with data. Then you waited for lab results. Then you iterated. Then, if the season had not already ended by the time you needed to retest, you booked another window and started again.

It worked. The industry ran on it for decades.

"Cold weather does not check availability calendars. The conditions that produce the most valuable test data are fleeting. Miss them and you are working with approximations."

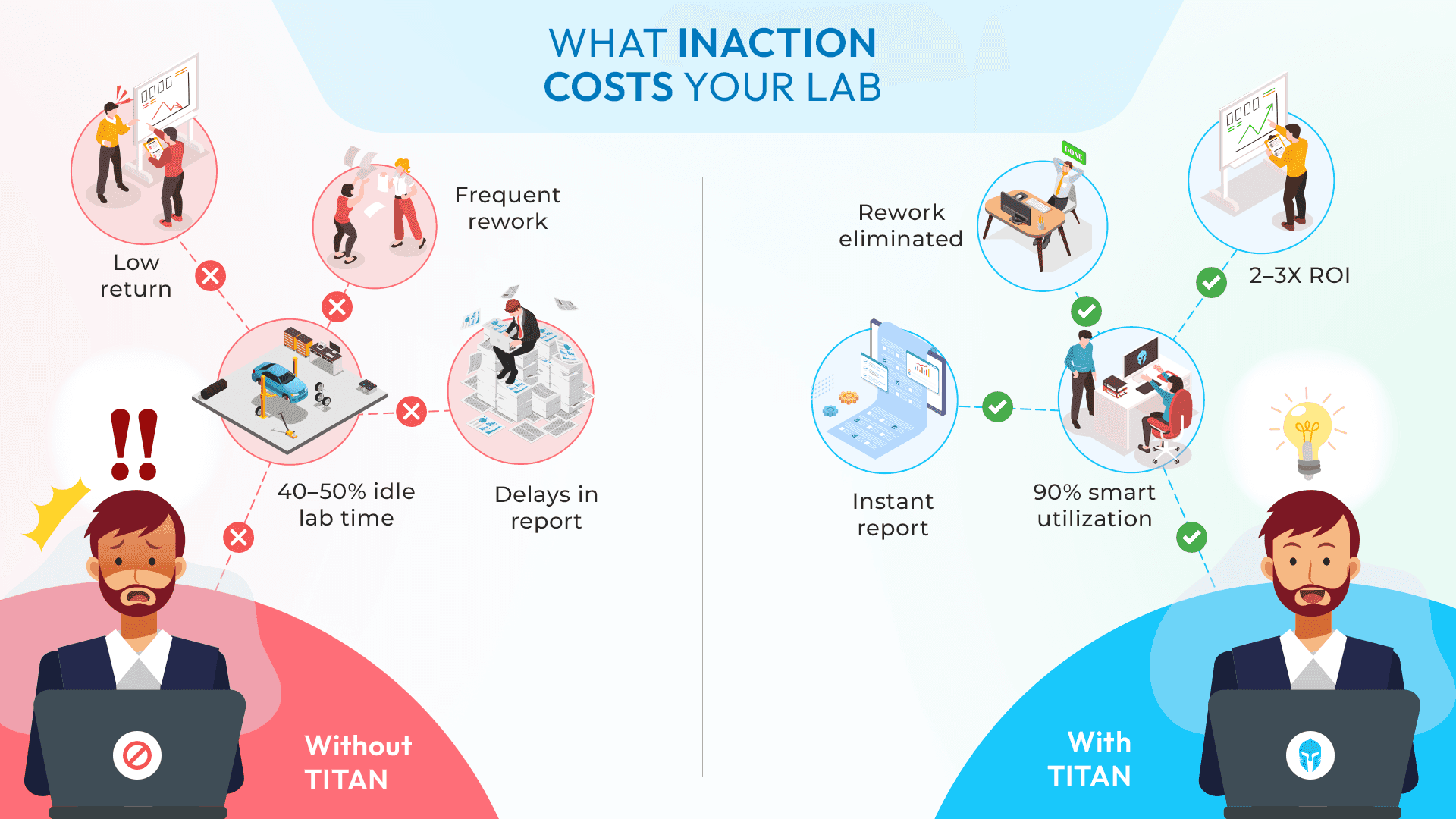

The issue is that a "booked window" is not how winter arrives. Cold weather does not check availability calendars. The conditions that produce the most valuable test data (the precise combination of fresh snow, low temperatures and the kind of low-friction ice that forms overnight) are fleeting. Miss them and you are working with approximations. And in tire development, approximations compound. One slightly uncertain data point leads to a slightly less confident validation. A slightly less confident validation leads to a longer test cycle. A longer test cycle means a better product arrives later than it should.

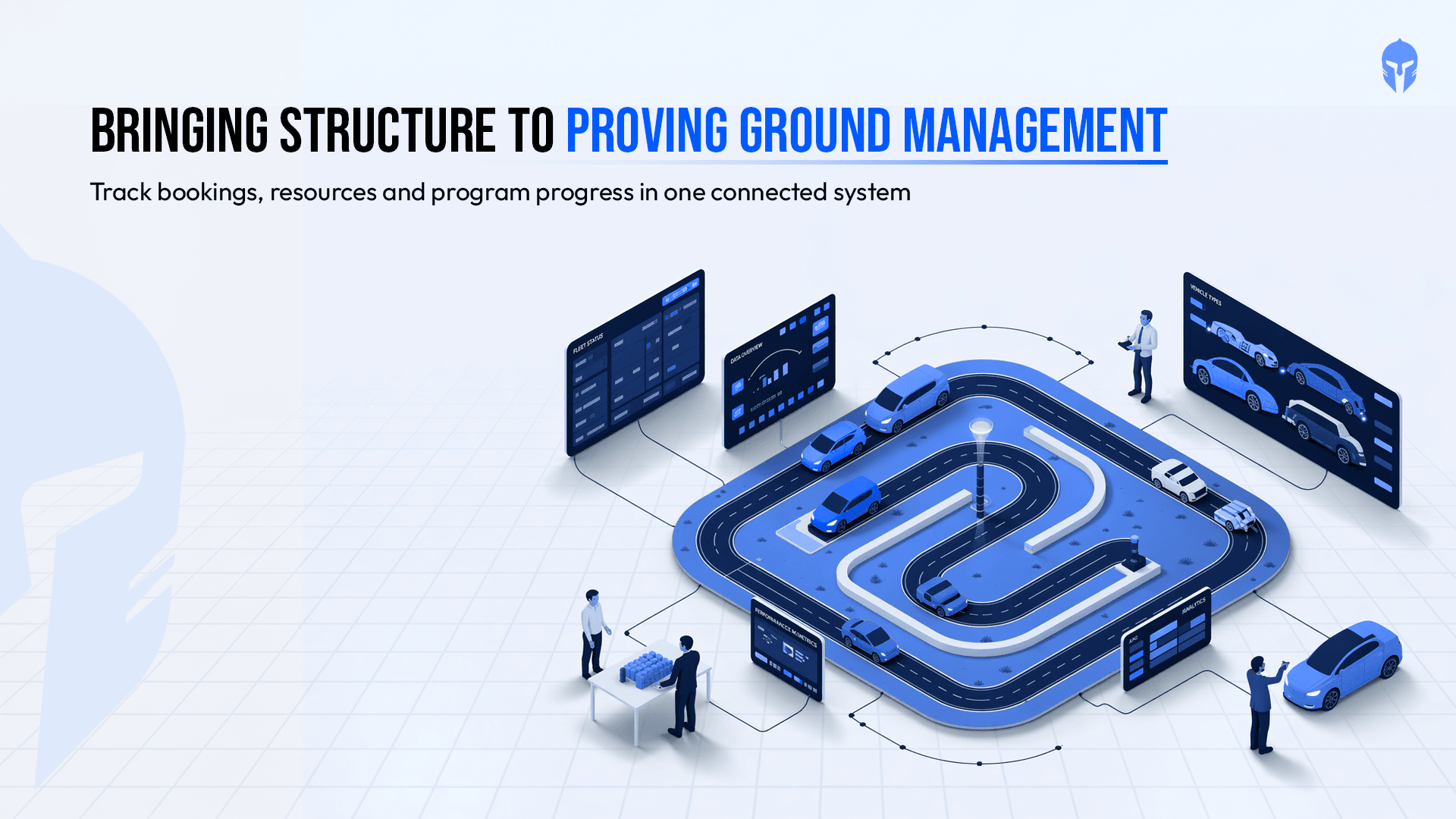

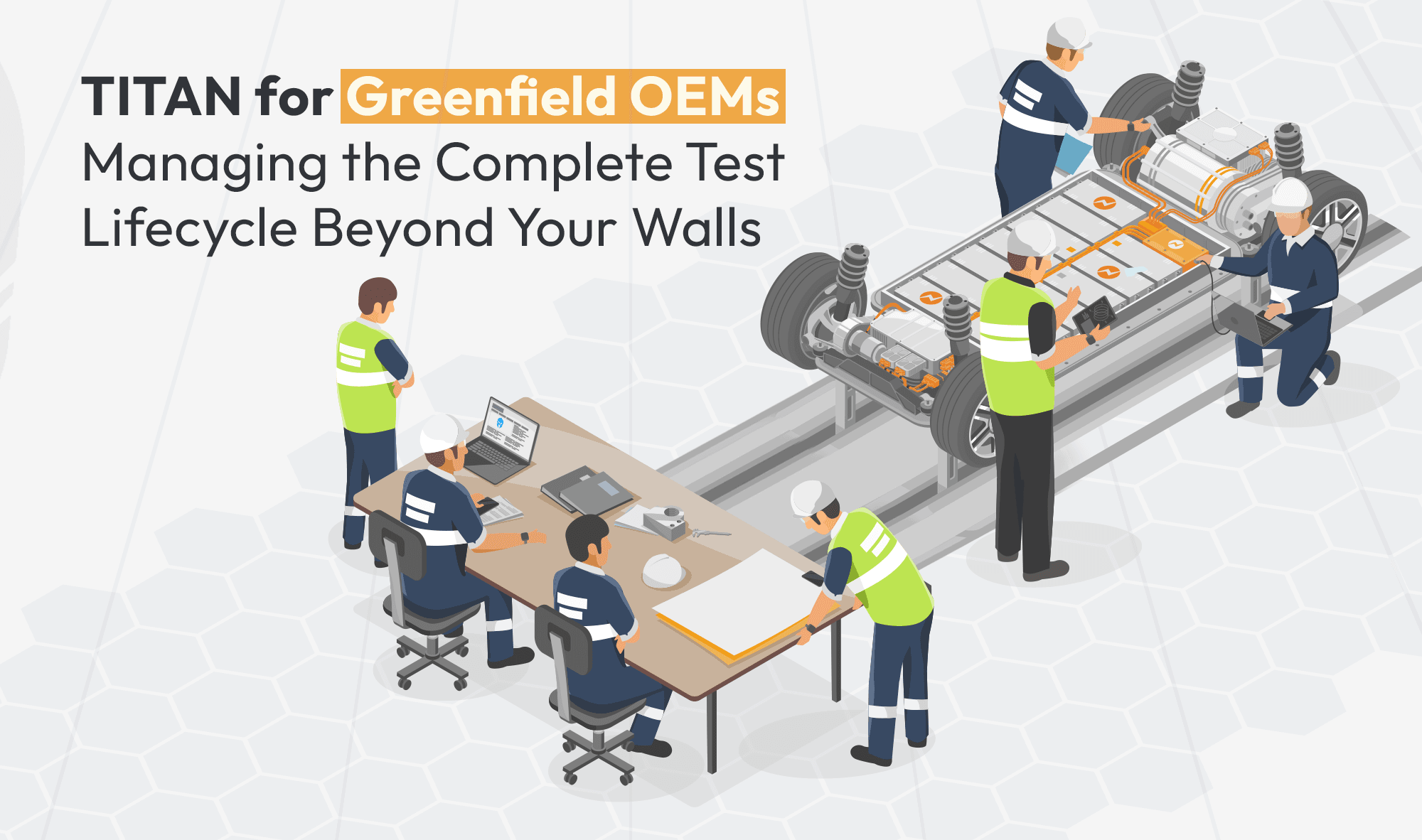

Some organizations have solved this by investing in dedicated outdoor facilities in places like Ivalo. Purpose-built tracks on snow and ice. Advanced measurement systems. Infrastructure designed around one goal: the ability to test winter and all-season tires on their own terms, on their own schedule.

It is an extraordinary commitment to getting testing right, and it raises a genuinely important question about what "getting testing right" fully requires.

What a serious facility investment is really solving for

When a company builds a dedicated testing site from the ground up, the story is always about what that facility is solving.

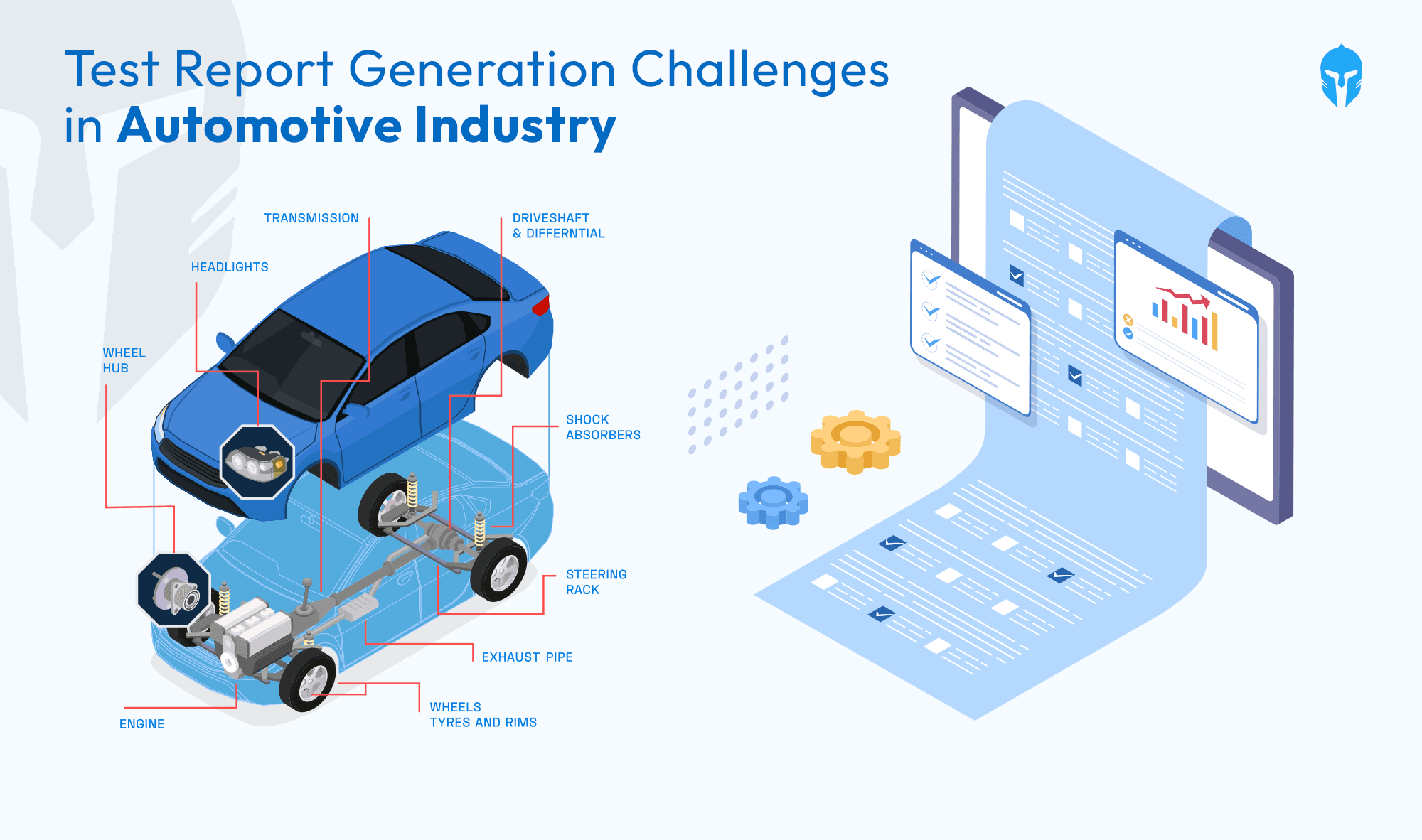

The pain points are familiar: the need for consistent, repeatable data; the frustration of compressed testing windows that don't align with the conditions you actually need; the logistical complexity of fitting a development cycle around shared infrastructure; the gap between finding something unexpected in the data and being able to respond to it before the season closes.

A dedicated facility addresses those problems by removing external constraints and giving teams the freedom to test on their own terms. The organizations that extract the most from that freedom are the ones who arrive prepared with a program structured to take advantage of pristine conditions, capture what those conditions reveal, and carry that learning forward into the next cycle.

The operational layer that determines what testing is worth

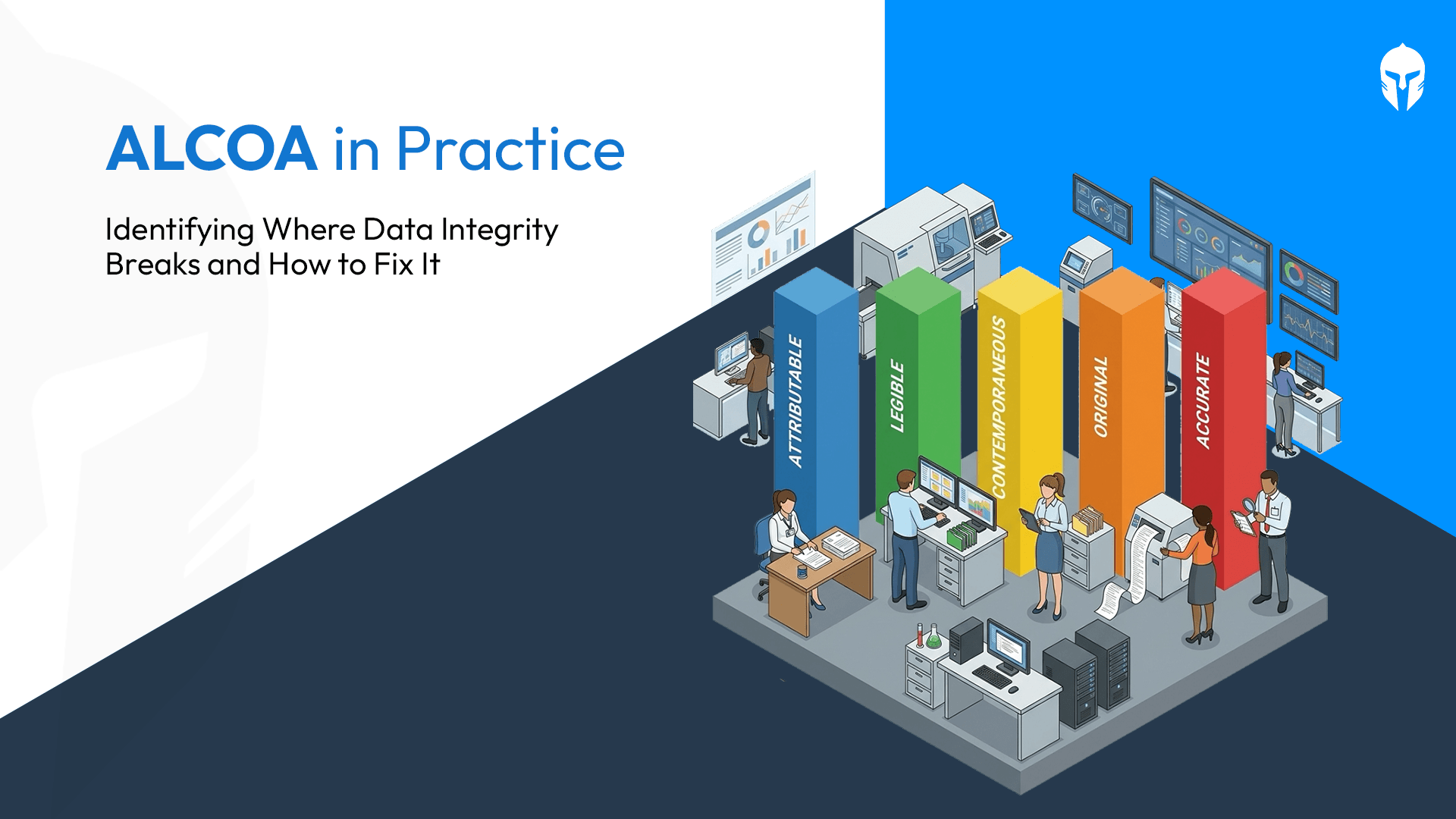

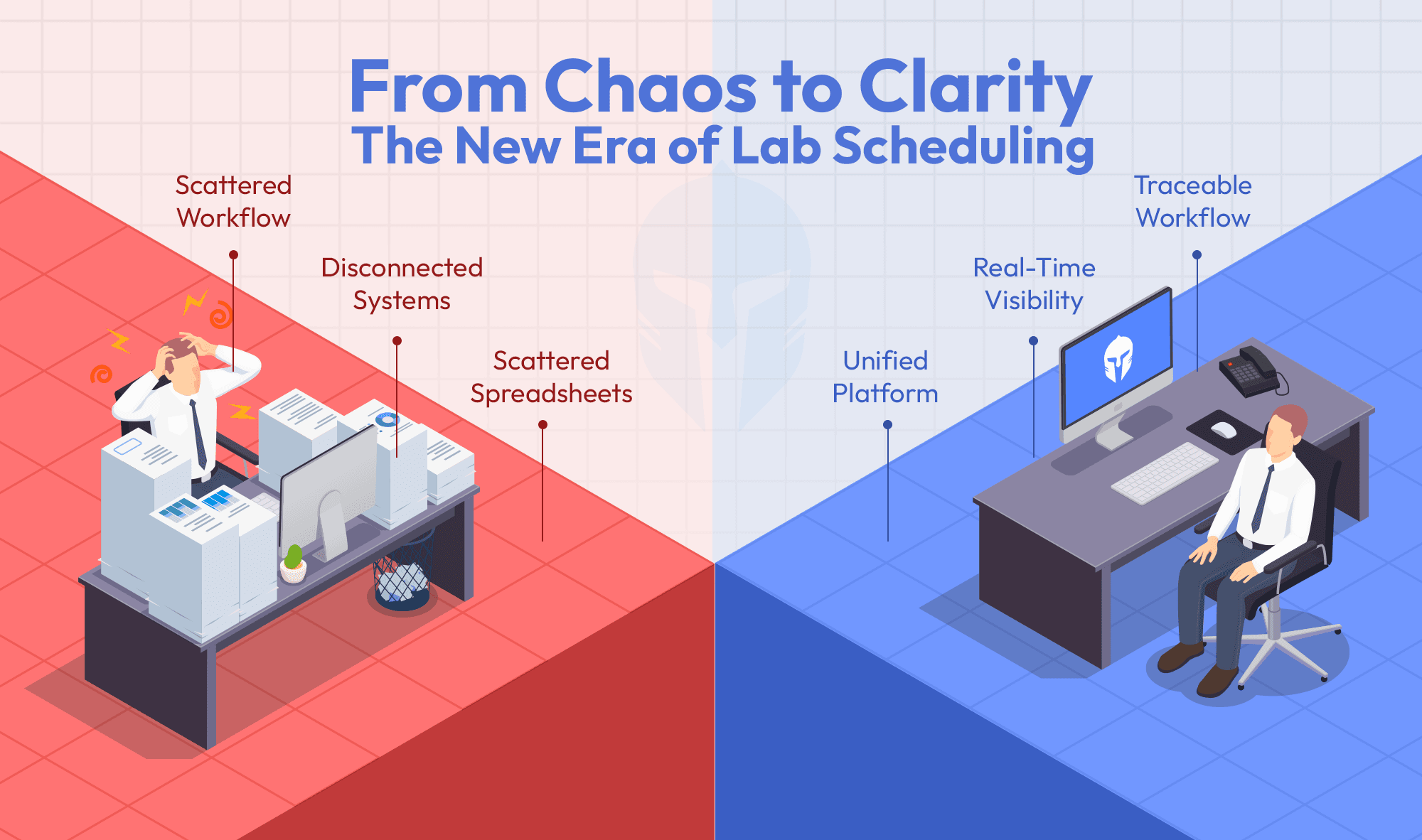

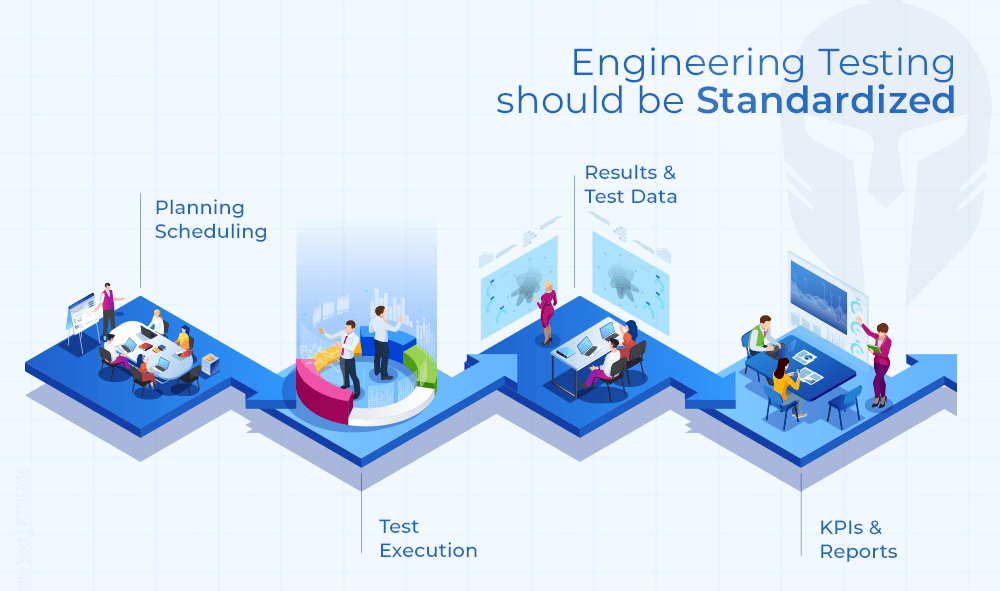

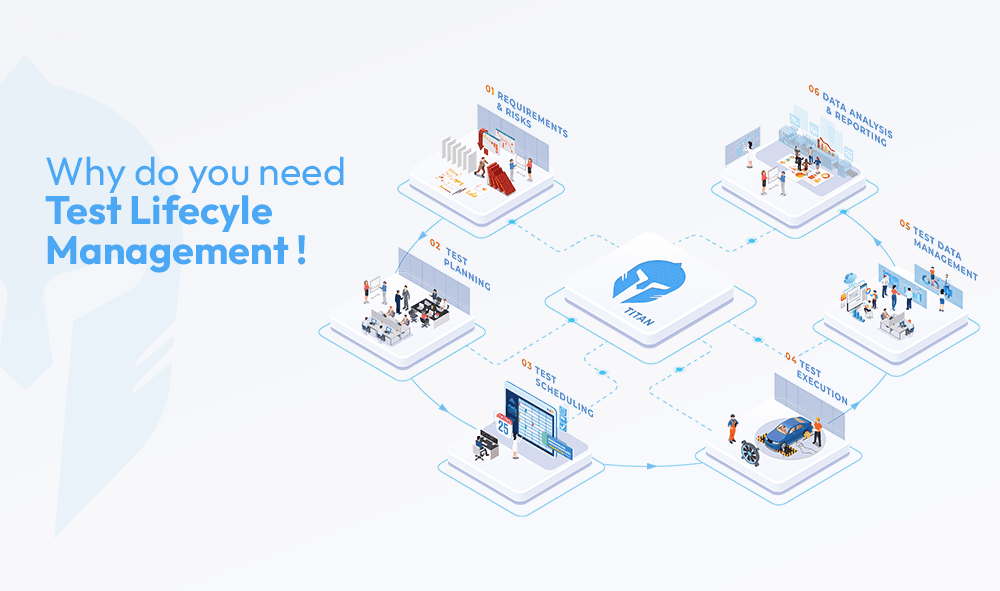

The qualities that make a winter test program genuinely valuable (repeatability, fast iteration, tight validation timelines, knowledge that accumulates across seasons) are products of how a program is planned, managed and learned from. The proving ground provides the conditions. The discipline of test lifecycle management is what turns those conditions into insight.

Time is lost in unclear test objectives and in the effort required to analyze and reconcile results afterward.

In most organizations, testing is simultaneously the most critical part of product development and the most chaotically managed. Test plans live in spreadsheets. Results sit in inboxes. Scheduling conflicts surface at the worst possible moments. Validation decisions get made in meetings rather than from a clear, real-time picture of where a program stands. Knowledge accumulated over a season walks out the door when the team disperses, and the next cycle rebuilds context from a half-remembered summary.

The cost shows up in slower cycles, reduced repeatability, and the persistent risk of arriving at the end of a season with gaps in the data you needed.

What test lifecycle management looks like in practice

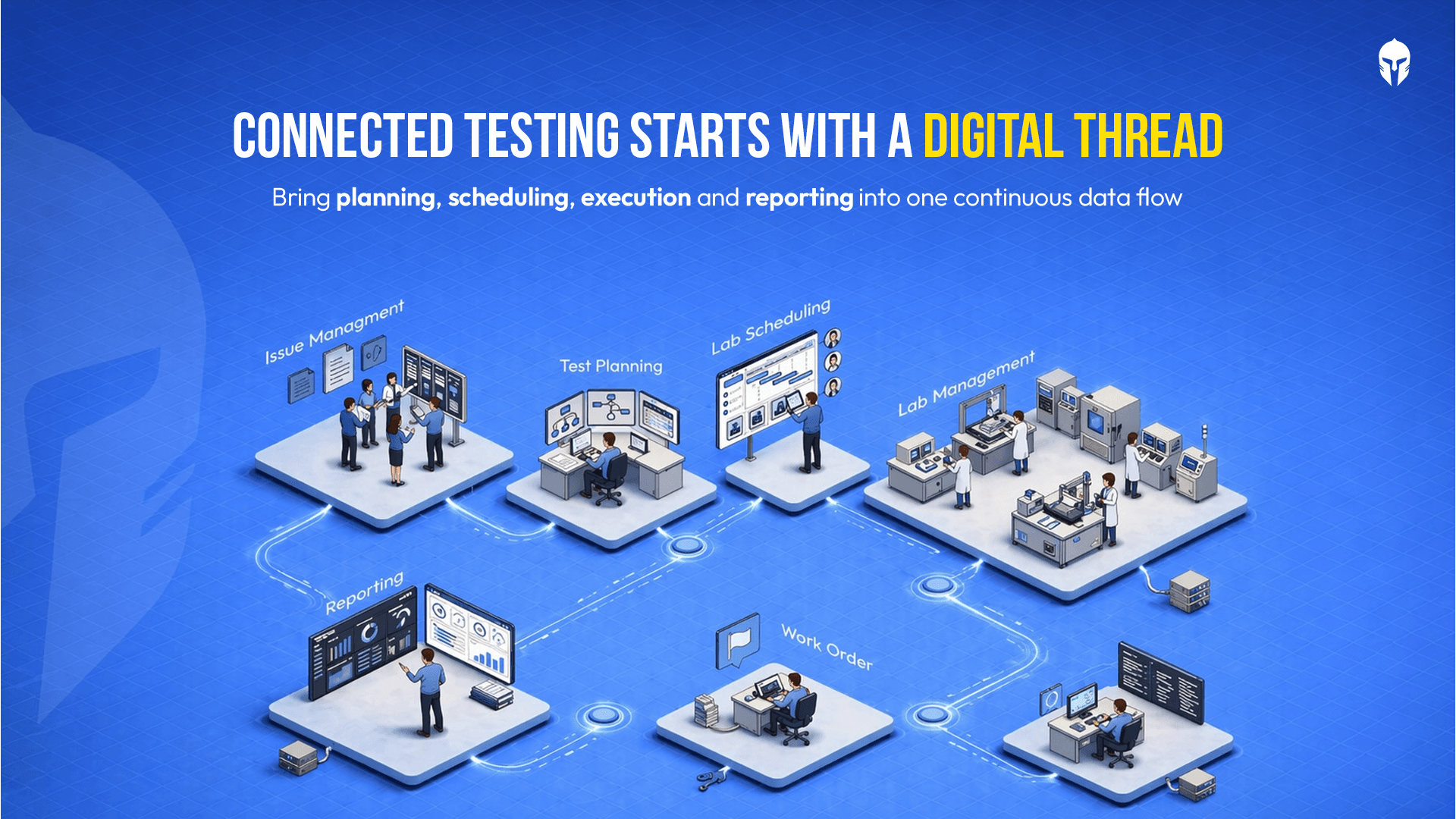

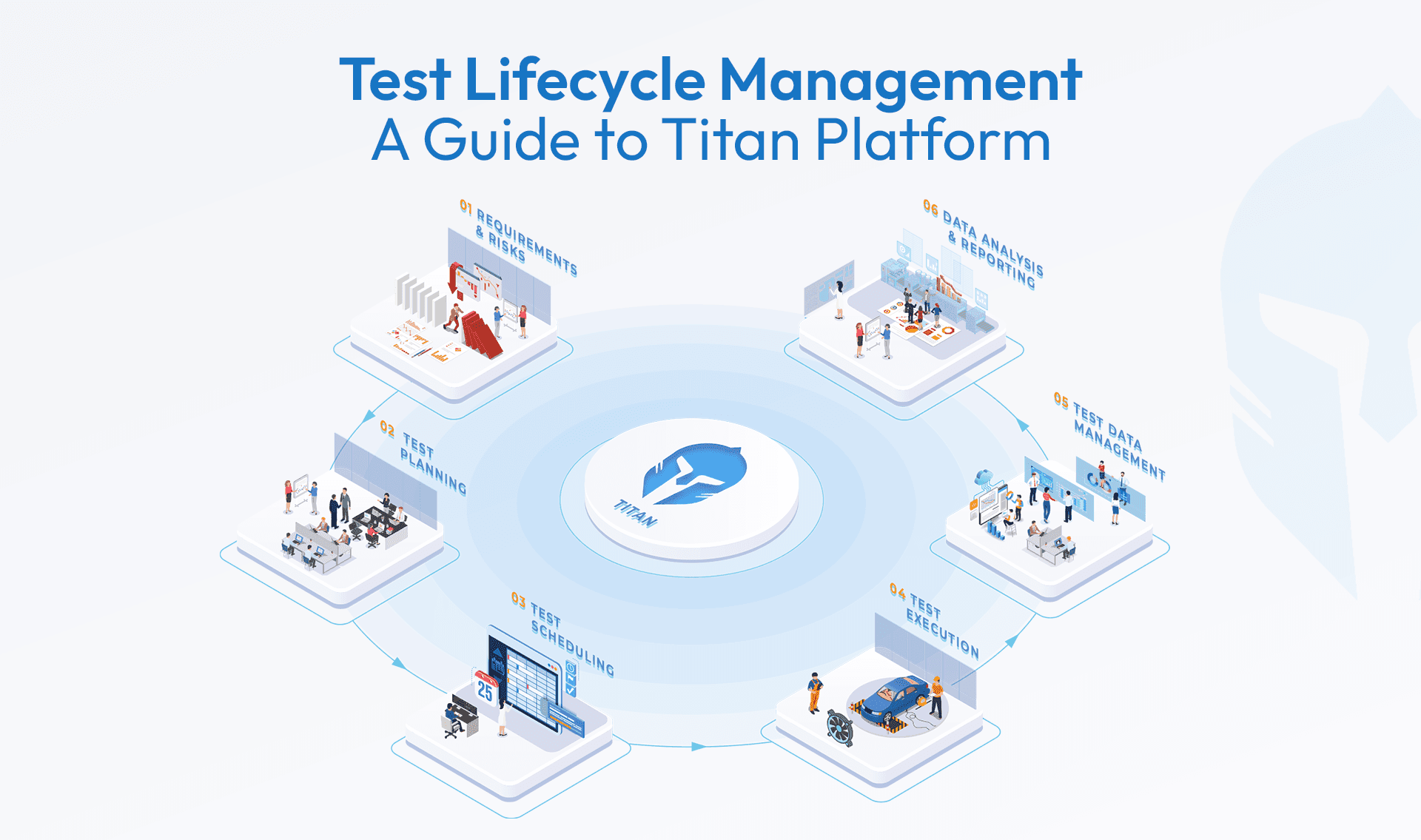

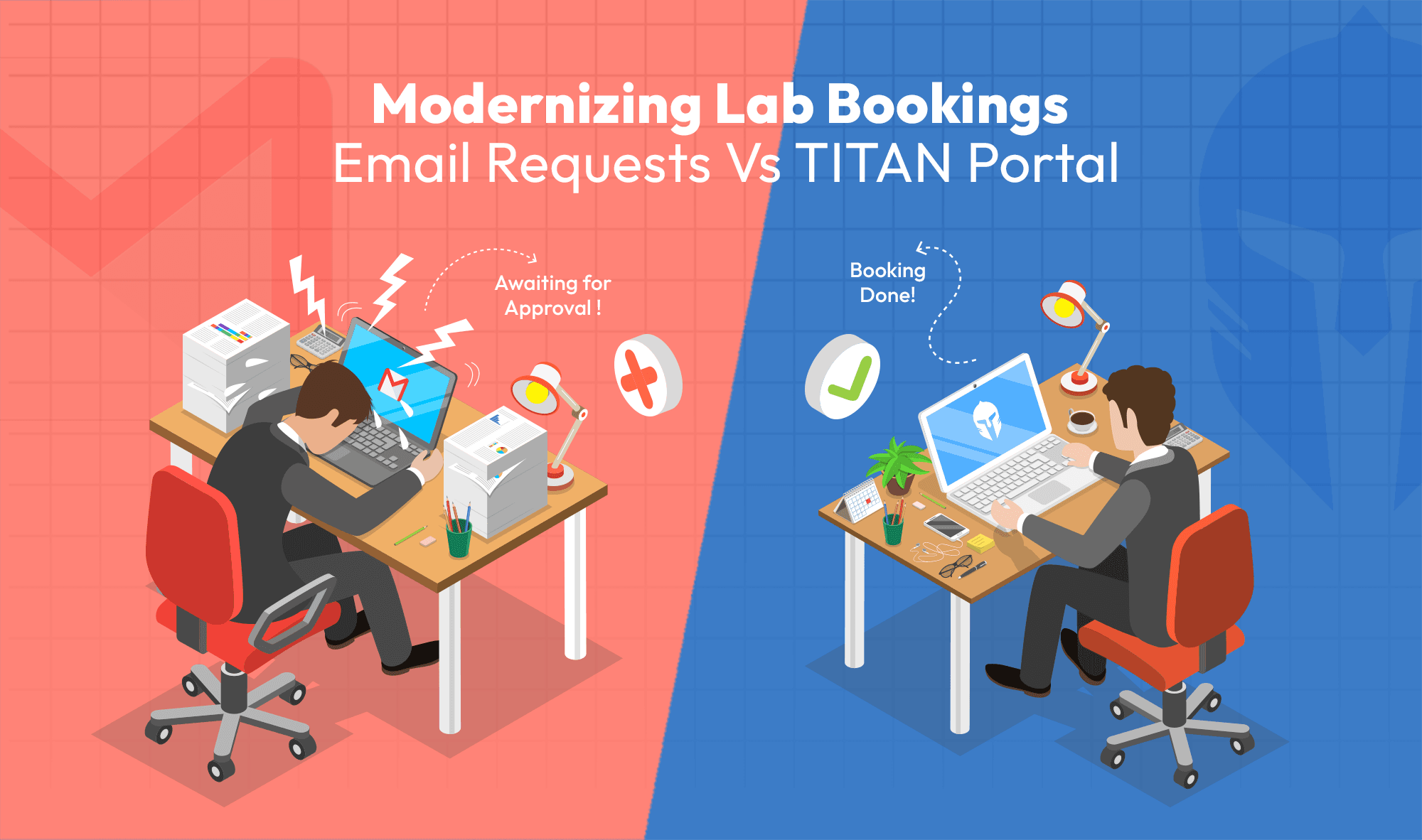

TITAN TLM is a platform built specifically for this layer of R&D: the planning, coordination and validation work that determines what any test environment is worth. It brings structure to the entire lifecycle of a test program, from initial requests through to final validation reports, wherever that testing physically takes place.

"Every hour spent on a proving ground is worth more when the program behind it is structured, connected and built to carry learning forward."

In practice, that means teams arrive at the track with a clear test catalog already prepared: every test case defined, parameters set, acceptance criteria documented. Scheduling is managed centrally, with equipment and prototypes allocated without conflict. As runs are completed, results flow into a system where the engineering team can see, in real time, exactly where the validation program stands: what has passed, what has not, what needs a rerun before the ice changes.
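To make the idea of a prepared test catalog concrete, here is a minimal Python sketch. It is an illustration only and does not reflect TITAN's actual data model or API: the `CatalogEntry` class, the `program_status` function, the single upper-limit acceptance criterion and every test name and number are assumptions invented for this example.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class CatalogEntry:
    """One hypothetical test case: parameters plus a single acceptance limit."""
    name: str
    parameters: dict                  # e.g. surface and target temperature
    max_allowed: float                # acceptance criterion: upper limit on the measured value
    measured: Optional[float] = None  # filled in once the run is completed
    conditions_ok: bool = True        # False if the run fell outside the planned conditions

    def status(self) -> str:
        if self.measured is None:
            return "pending"
        if not self.conditions_ok:
            return "rerun"
        return "passed" if self.measured <= self.max_allowed else "failed"


def program_status(catalog):
    """Group test case names by their current status."""
    summary = {"passed": [], "failed": [], "rerun": [], "pending": []}
    for entry in catalog:
        summary[entry.status()].append(entry.name)
    return summary


# Illustrative catalog; all names and values are made up.
catalog = [
    CatalogEntry("ice_braking", {"surface": "ice", "temp_c": -15}, max_allowed=50.0),
    CatalogEntry("snow_braking", {"surface": "snow", "temp_c": -10}, max_allowed=30.0),
    CatalogEntry("snow_handling", {"surface": "snow", "temp_c": -10}, max_allowed=95.0),
]

catalog[0].measured = 48.2        # within the limit
catalog[1].measured = 31.5        # over the limit
catalog[2].measured = 93.0
catalog[2].conditions_ok = False  # e.g. the surface changed mid-run

print(program_status(catalog))
```

The point of the sketch is that acceptance criteria are written down before anyone reaches the track, so "what has passed, what has not, what needs a rerun" becomes a lookup rather than a discussion.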

When the season ends, nothing is lost. The data, the findings, the decisions: all of it is logged and available for the next cycle to build on. The learning compounds rather than resets.

On a dedicated proving ground in Ivalo or in a climate chamber closer to home, the effect is the same: a development program that moves faster, produces more consistent results and generates the kind of trustworthy data that serious R&D demands.

Rigor is not a location. It is a process.

The organizations that get the most from world-class testing infrastructure are the ones that show up with a program structured to take full advantage of it. Clear objectives. Defined acceptance criteria. Scheduling that reflects actual priorities. A system that captures what each test reveals and connects it to every test that follows.

Most OEMs already have access to the infrastructure they need: proving grounds, labs, climate facilities, third-party test houses. TITAN is the system that connects planning to execution to validation in a way that accelerates the development cycle and reduces the risk of finishing a season short of the data that matters.

Not as a replacement for the test environment, but as what makes every hour spent in one count for more.

The question worth asking

The engineers listening to tires in Arctic Finland are doing something genuinely important. They are in pursuit of conditions that tell the truth about how a product performs when it matters most. That instinct, to close the gap between the testing you need to do and the conditions under which you do it, is exactly the right one.

"The gap worth closing is the operational one: the distance between running a test and understanding what it means, between collecting data and being able to act on it, between one test season and the next."

Closing that gap is what TITAN is built for. And it is closer than most development teams realize.

Connected Test Lifecycle Management for Modern R&D

Improve clarity, repeatability and control across your testing lifecycle.