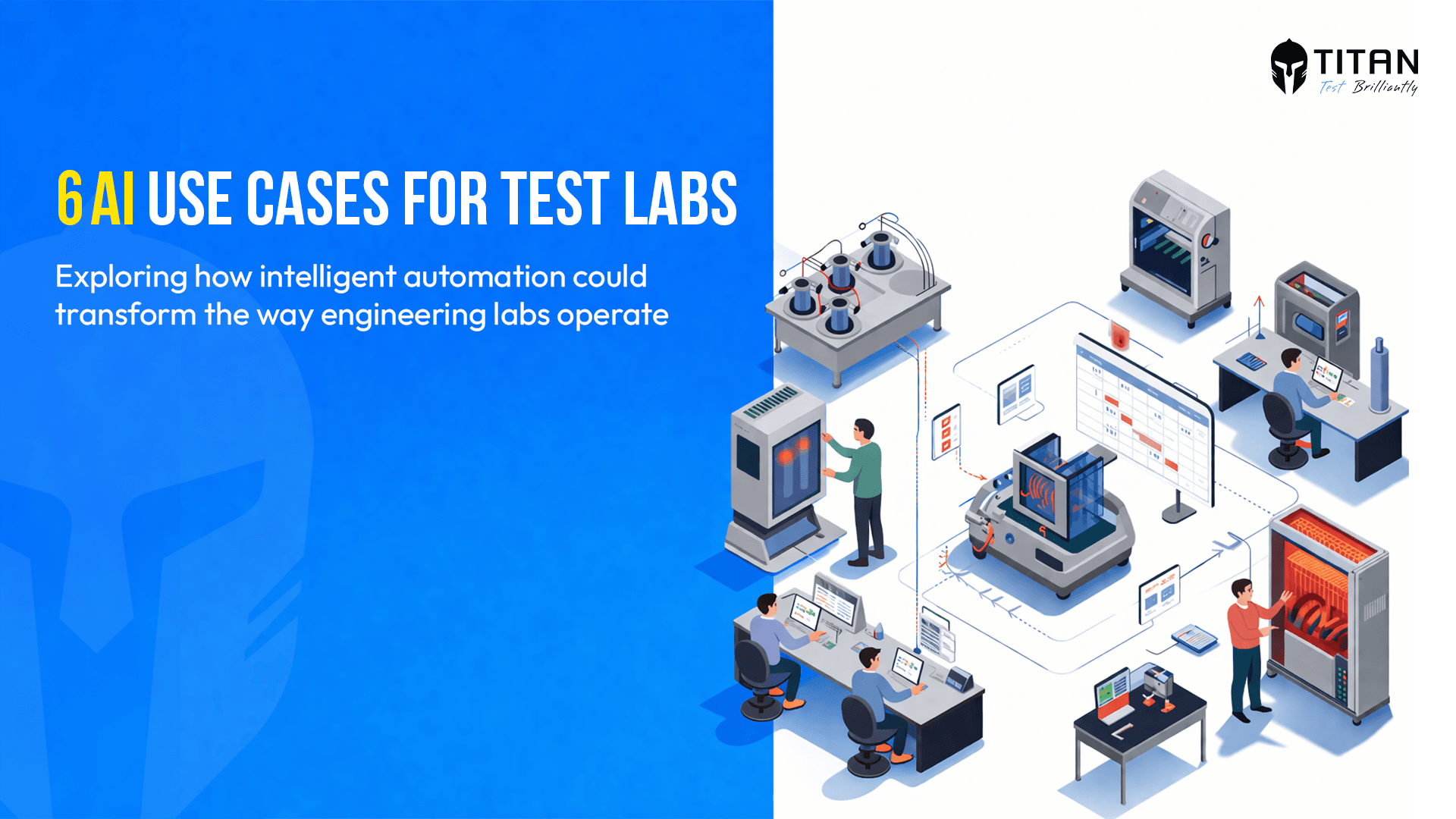

AI in Test Scheduling: Six Use Cases Worth Watching

Author

Neerav Singh

Technical Product Specialist

Reading Time

3 min read

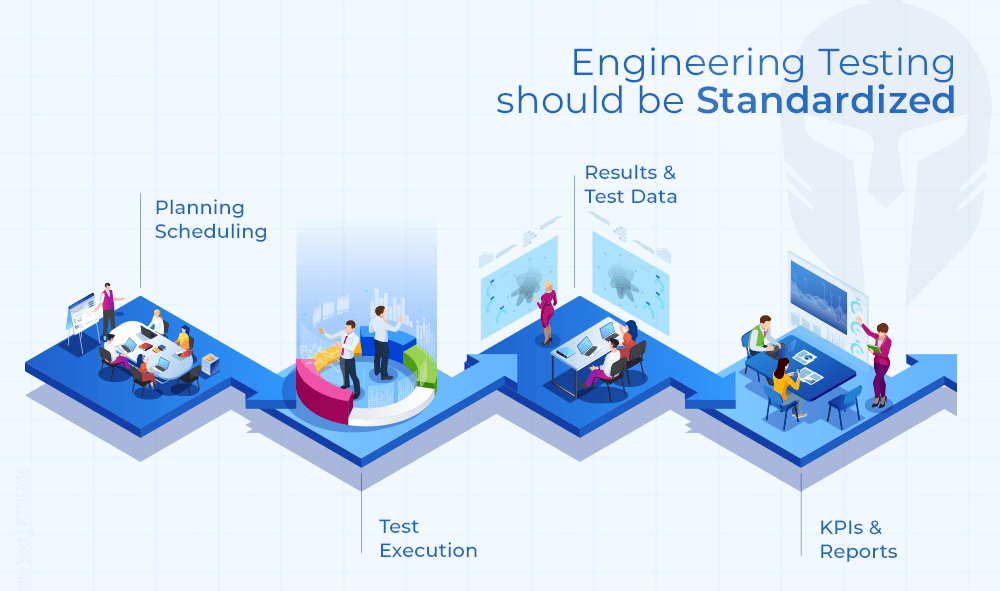

Test scheduling looks administrative from the outside. Inside an engineering lab, it is one of the most layered coordination problems a team faces and one of the most expensive when it unravels.

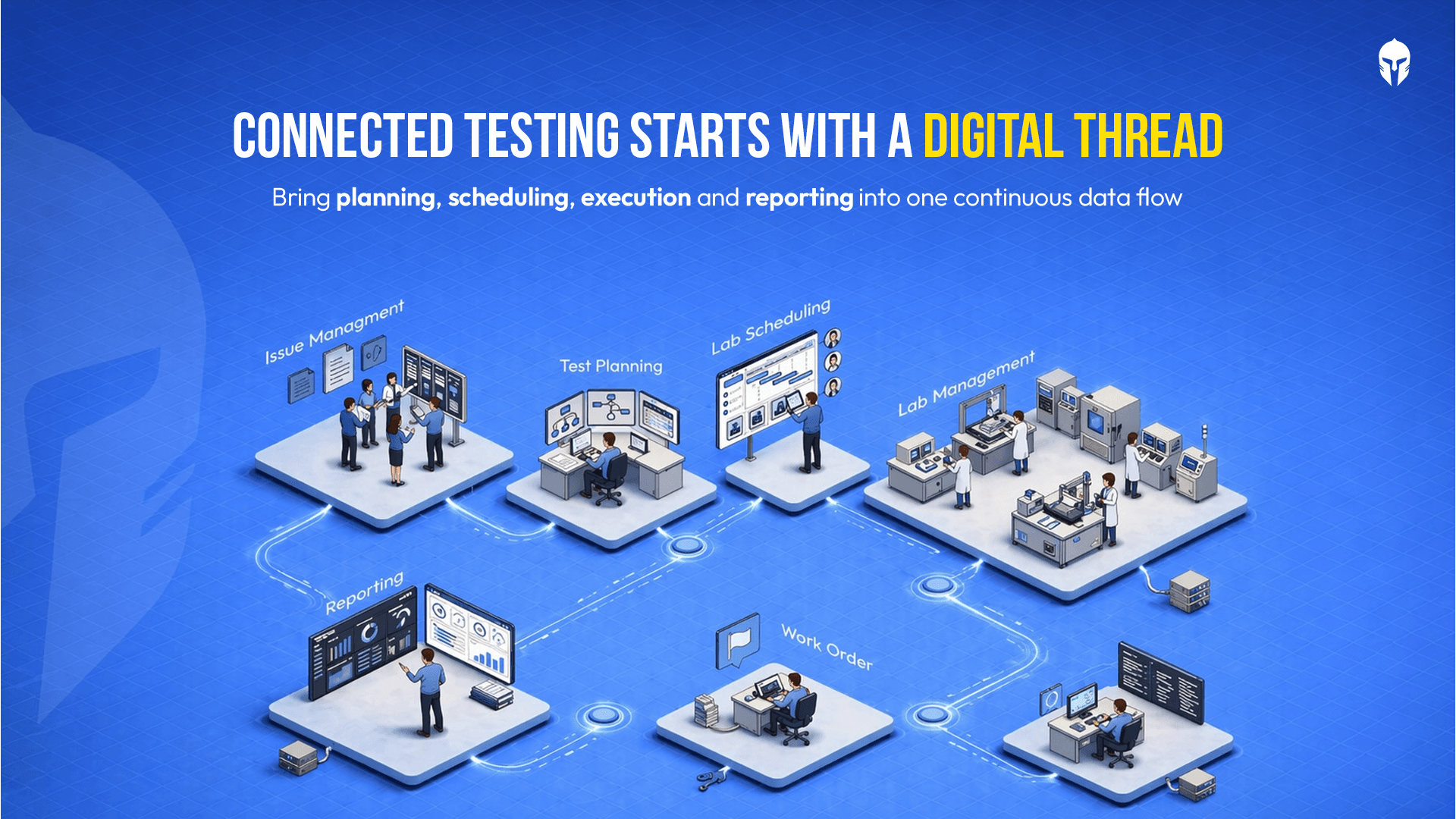

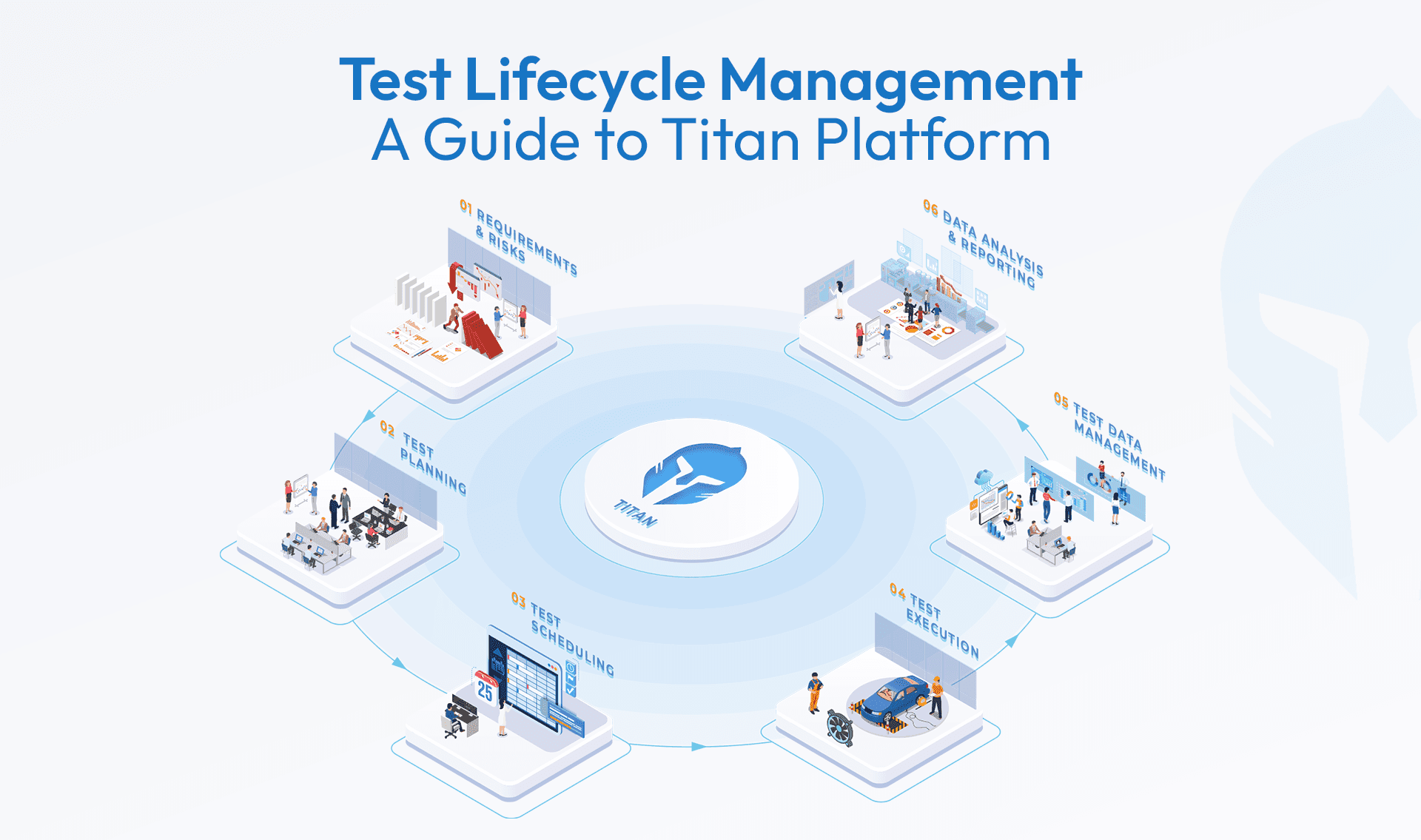

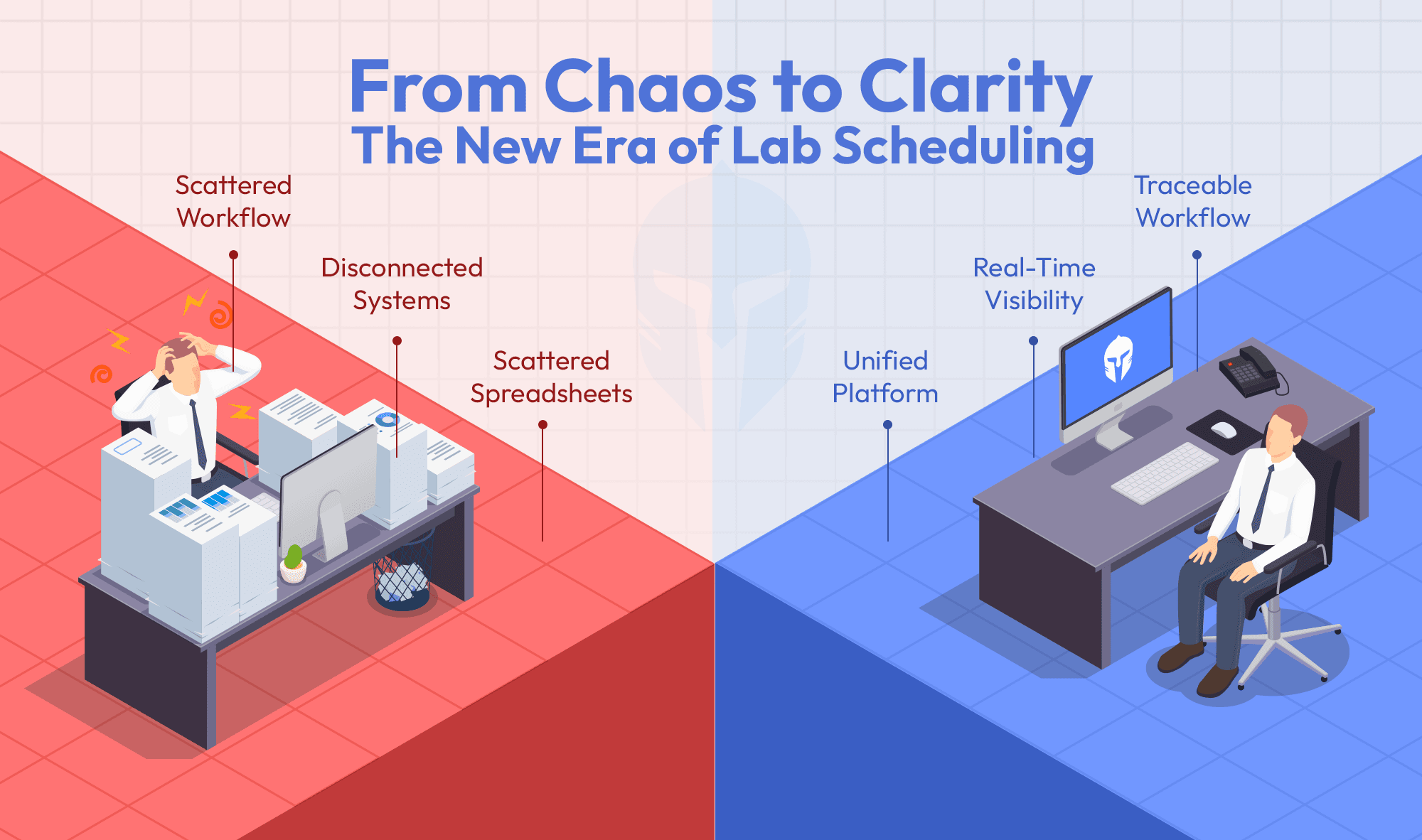

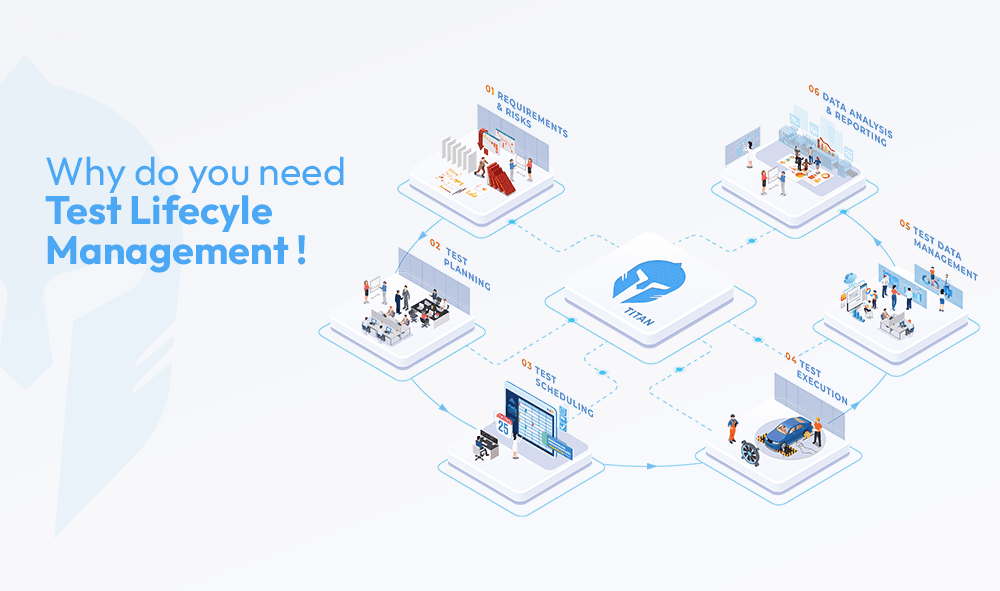

The platform already unifies test planning, lab operations, asset management and scheduling in one place, bringing order to validation workflows that have historically lived across spreadsheets, emails and tribal knowledge. The question worth sitting with is what that connected foundation unlocks when AI is layered on top of it. These six use cases sketch out what that could look like and why the direction matters.

Predictive Conflict Intelligence

Traditional scheduling tools catch the obvious. A double-booked chamber or an overlapping test slot surfaces quickly. What these tools routinely miss are the subtler risks that derail real campaigns.

A piece of equipment due for calibration next week. A technician whose certification does not cover the test type. A prototype that has not cleared its prerequisite inspection. These go undetected until test day, at which point the damage is already done.

An AI layer working across connected data (asset records, technician profiles, prototype readiness status and the test catalog) could scan all these dimensions simultaneously before a slot is ever confirmed. The output is not just a conflict flag. It is a proactive warning issued days in advance, giving engineers time to resolve the issue rather than react to it.
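To make the idea concrete, here is a minimal sketch of the cross-record check described above. It is a plain rule-based baseline, not an AI model, and every name in it (`Booking`, `find_risks`, the field names) is a hypothetical stand-in rather than part of any real platform API. It only shows what "reading across" asset, technician and prototype data before confirming a slot might look like.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Booking:
    # All fields are hypothetical stand-ins for connected platform records.
    test_type: str
    test_date: date
    calibration_due: date        # from the asset record
    technician_certs: set[str]   # from the technician profile
    prototype_inspected: bool    # from prototype readiness status


def find_risks(b: Booking) -> list[str]:
    """Return human-readable warnings for a proposed booking."""
    risks = []
    if b.calibration_due <= b.test_date:
        risks.append("equipment calibration falls due on or before test day")
    if b.test_type not in b.technician_certs:
        risks.append(f"technician is not certified for {b.test_type}")
    if not b.prototype_inspected:
        risks.append("prototype has not cleared its prerequisite inspection")
    return risks


booking = Booking(
    test_type="EMC",
    test_date=date(2025, 3, 14),
    calibration_due=date(2025, 3, 10),
    technician_certs={"thermal", "vibration"},
    prototype_inspected=False,
)
warnings = find_risks(booking)
for w in warnings:
    print("WARNING:", w)
```

Running the check when the slot is requested, rather than on test day, is what turns each of these findings into an early warning.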

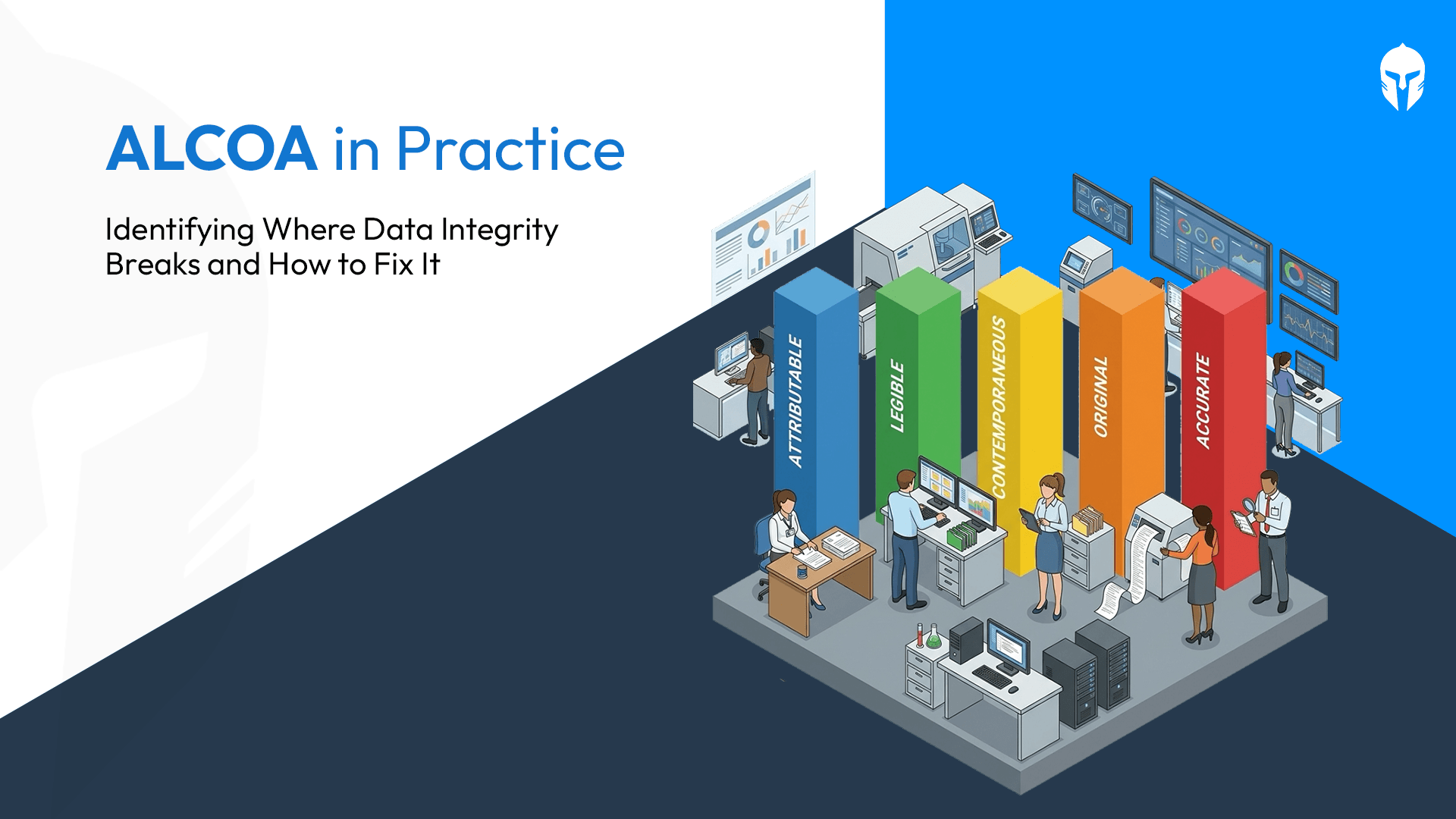

The data already exists on the platform. The opportunity is letting AI read across it in ways that rule-based systems cannot.

Intelligent Test Sequencing

In automotive and electronics validation, test order carries real consequences. Non-destructive tests must precede destructive ones. Regulatory prerequisites must clear before downstream certification tests begin. Dependencies across teams and test types, rarely fully documented, must hold or entire campaigns get invalidated.

Manually tracking all of this across large test plans is error-prone. Engineers carry much of the sequencing logic in their heads, and when plans change, re-reasoning through the full dependency map takes time and introduces risk.

With the verification plan, test catalog and traceability matrix already stored in one place, an AI layer working across that foundation could:

- Map inter-test dependencies automatically

- Enforce destructive versus non-destructive ordering

- Ingest regulatory requirements and sequence tests to satisfy them

- Flag any proposed change that would create a compliance sequencing violation

The logic already exists in people's heads. This is the path to making it enforceable and visible without relying on individual memory.
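The dependency mapping and ordering rules listed above can be sketched with a standard topological sort. This is an illustrative example using Python's standard-library `graphlib`, not a description of how any product implements sequencing. The `CATALOG` contents and both function names are invented for illustration, and the sketch assumes all tests run on the same prototype, so every destructive test must come after every non-destructive one.

```python
from graphlib import TopologicalSorter

# Hypothetical test catalog: name -> (is_destructive, prerequisites)
CATALOG = {
    "visual_inspection": (False, set()),
    "emc_scan": (False, {"visual_inspection"}),
    "thermal_cycle": (False, {"visual_inspection"}),
    "crash_test": (True, {"emc_scan"}),
}


def build_sequence(catalog):
    """Order tests so prerequisites and non-destructive tests come first."""
    graph = {name: set(prereqs) for name, (_, prereqs) in catalog.items()}
    destructive = {n for n, (is_destr, _) in catalog.items() if is_destr}
    non_destructive = set(catalog) - destructive
    for name in destructive:
        graph[name] |= non_destructive  # destructive tests wait for all others
    return list(TopologicalSorter(graph).static_order())


def find_violations(order, catalog):
    """Check a proposed order against the same rules."""
    position = {name: i for i, name in enumerate(order)}
    problems = []
    for name, (is_destructive, prereqs) in catalog.items():
        for prereq in sorted(prereqs):
            if position[prereq] > position[name]:
                problems.append(f"{name} runs before its prerequisite {prereq}")
        if is_destructive:
            for other, (other_destructive, _) in catalog.items():
                if not other_destructive and position[other] > position[name]:
                    problems.append(
                        f"destructive {name} runs before non-destructive {other}"
                    )
    return problems


sequence = build_sequence(CATALOG)
proposed = ["visual_inspection", "crash_test", "emc_scan", "thermal_cycle"]
print(sequence)
print(find_violations(proposed, CATALOG))
```

`static_order()` raises `graphlib.CycleError` if the dependencies are circular, which is itself a useful signal that the plan is inconsistent.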

Dynamic Disruption Recovery

When a test fails unexpectedly or equipment goes down, the downstream effect on a manually managed schedule can take days to untangle. The disruption itself is rarely the expensive part. It is the coordination that follows (phone calls, emails and negotiations across multiple teams) that consumes the time.

A connected view of lab operations, scheduling and asset status creates the data foundation that fast recovery requires. An AI re-optimization layer on top of that could:

- Re-optimize the full schedule within seconds of a disruption event

- Present ranked recovery options sorted by downstream impact and feasibility

- Simulate what each option costs the project timeline before anyone commits

What currently takes days of manual coordination becomes a decision made in minutes, with full visibility into the trade-offs before the choice is locked in.
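As a rough illustration of "ranked recovery options", the sketch below scores a few candidate responses to a chamber outage by their projected effect on the project finish day, then by how many other bookings they disturb. It is a simple scoring heuristic under stated assumptions, not a real re-optimizer. The `RecoveryOption` class, the option names and all the numbers are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class RecoveryOption:
    name: str
    delay_days: int         # slip this option adds to the disrupted test
    tests_rescheduled: int  # other bookings that would have to move


def projected_finish(baseline_finish_day, slack_days, option):
    """Project finish day after the option; schedule slack absorbs some delay."""
    return baseline_finish_day + max(0, option.delay_days - slack_days)


def rank_options(options, baseline_finish_day, slack_days):
    """Sort by timeline impact first, then by disturbance to other bookings."""
    return sorted(
        options,
        key=lambda o: (
            projected_finish(baseline_finish_day, slack_days, o),
            o.tests_rescheduled,
        ),
    )


options = [
    RecoveryOption("wait for chamber repair", delay_days=5, tests_rescheduled=0),
    RecoveryOption("move to second chamber", delay_days=1, tests_rescheduled=3),
    RecoveryOption("send to external lab", delay_days=3, tests_rescheduled=1),
]
ranked = rank_options(options, baseline_finish_day=60, slack_days=2)
for o in ranked:
    print(o.name, "-> finish day", projected_finish(60, 2, o))
```

Even in this toy form, the trade-off is visible before anyone commits: the option with the least timeline impact is also the one that moves the most bookings.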

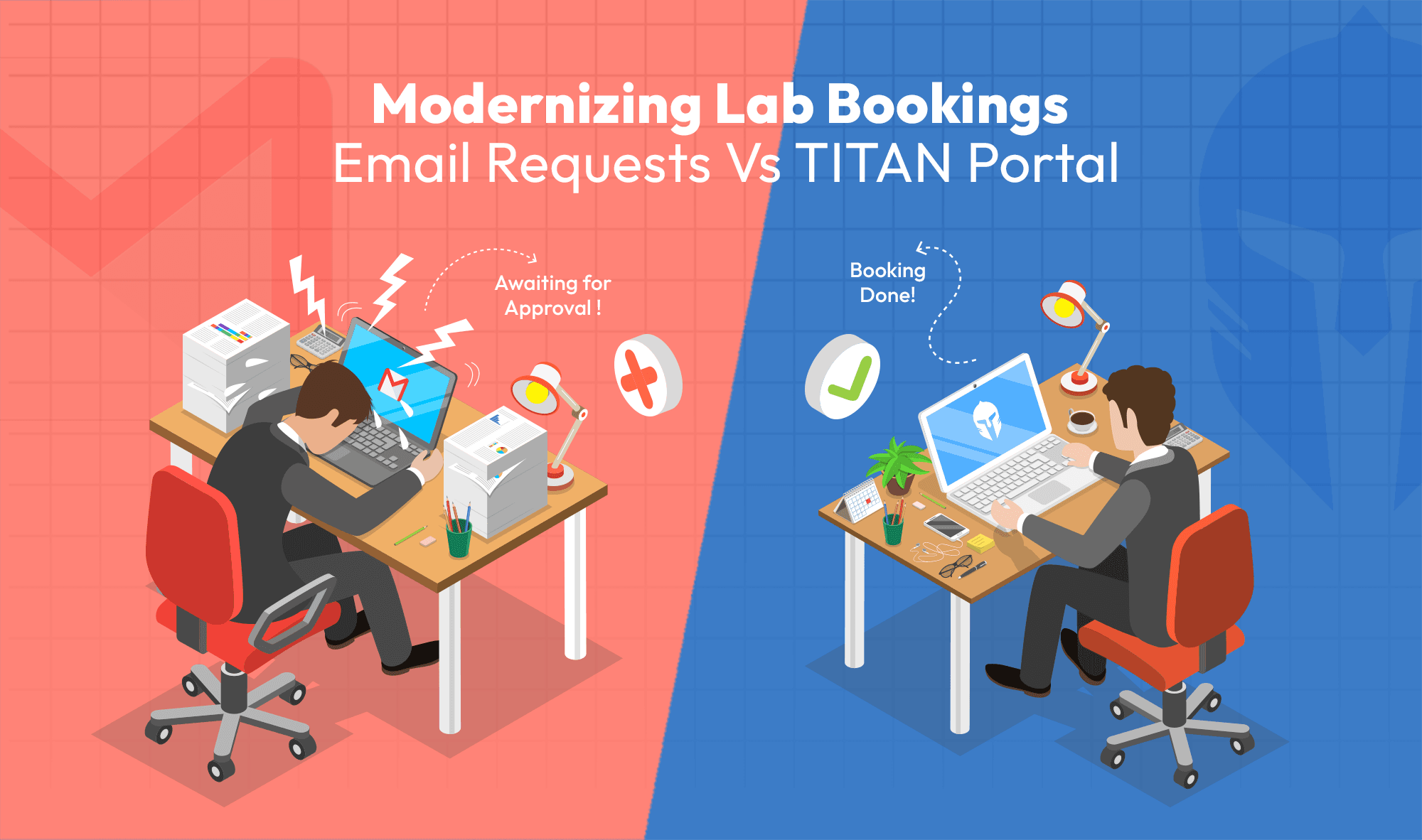

Natural Language Scheduling

One of the quieter friction points in lab operations is the scheduling request itself. Engineers with an informal test need must translate it into a structured form requiring resource codes, lab identifiers, duration estimates and sequencing dependencies. The form demands information they may not have to hand, which leads to incomplete submissions, back-and-forth with coordinators and delays before anything moves.

A natural language interface sitting on top of an existing scheduling workflow could change this. Engineers describe what they need conversationally. The system:

- Parses intent and maps it to the right resources and test types

- Fills in structure using existing catalog and asset data

- Asks targeted follow-up questions only when genuinely needed

- Allows booking and modification through a chat interface without form-filling

The engineering knowledge stays with the engineer. The administrative translation layer disappears.
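The parse, fill and follow-up flow listed above can be sketched in a few lines. A real interface would presumably sit on a language model; the version below swaps in simple keyword and regex matching purely to show the shape of the flow. The catalog codes, field names and prompts are all invented for illustration.

```python
import re

# Hypothetical catalog data: keyword -> test code
TEST_TYPES = {"thermal": "TH-01", "vibration": "VB-02", "emc": "EM-03"}
REQUIRED_FIELDS = ("test_code", "duration_days")
PROMPTS = {
    "test_code": "Which test type do you need?",
    "duration_days": "How many days will the test run?",
}


def parse_request(text):
    """Map a free-text request onto structured booking fields."""
    lowered = text.lower()
    request = {}
    for keyword, code in TEST_TYPES.items():
        if keyword in lowered:
            request["test_code"] = code
            break
    match = re.search(r"(\d+)\s*days?", lowered)
    if match:
        request["duration_days"] = int(match.group(1))
    return request


def follow_up_questions(request):
    """Ask only about required fields the request did not supply."""
    return [PROMPTS[f] for f in REQUIRED_FIELDS if f not in request]


complete = parse_request("I need a thermal soak on the new inverter for 3 days")
partial = parse_request("Can I get the EMC chamber next week?")
print(complete, follow_up_questions(complete))
print(partial, follow_up_questions(partial))
```

The first request needs no follow-up; the second triggers a single question about duration, which is the "only when genuinely needed" behaviour the list describes.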

Lab Bottleneck Forecasting

Resource bottlenecks in test labs are almost never visible until multiple requests collide. A single thermal chamber is needed by five teams in the same week. The only certified EMC technician is fully booked during a critical test window. By the time these surface, mitigation options are limited and often expensive.

Equipment availability, personnel assignments, and scheduling demand are already tracked in one system. An AI forecasting layer working across that data could project utilization weeks ahead and flag single-point constraints before they become blockers. When a bottleneck is forecast, it could also surface concrete mitigation options:

- Resequencing test requests to redistribute demand

- Redistributing workload across available resources

- Identifying external lab sourcing where internal capacity falls short

The value here is entirely about timing. The same information arriving earlier means teams have options. Arriving later, it becomes a crisis.
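At its simplest, projecting utilization means adding up already-known demand per resource per week and comparing it with capacity. The sketch below does only that: it aggregates existing requests and does not predict future ones, which is where a forecasting model would come in. The request data, capacity figures and function names are hypothetical.

```python
from collections import defaultdict

# Hypothetical scheduling demand: (resource, ISO week, hours requested)
REQUESTS = [
    ("thermal_chamber_1", "2025-W14", 40),
    ("thermal_chamber_1", "2025-W14", 30),
    ("thermal_chamber_1", "2025-W14", 50),
    ("emc_technician", "2025-W14", 20),
    ("thermal_chamber_1", "2025-W15", 60),
]
# Hypothetical weekly capacity in hours per resource
CAPACITY_HOURS = {"thermal_chamber_1": 100, "emc_technician": 40}


def project_utilization(requests, capacity):
    """Return demand / capacity for each (resource, week) pair."""
    demand = defaultdict(int)
    for resource, week, hours in requests:
        demand[(resource, week)] += hours
    return {key: hours / capacity[key[0]] for key, hours in demand.items()}


def flag_bottlenecks(utilization, threshold=1.0):
    """List (resource, week) pairs where demand exceeds the threshold."""
    return sorted(key for key, ratio in utilization.items() if ratio > threshold)


utilization = project_utilization(REQUESTS, CAPACITY_HOURS)
bottlenecks = flag_bottlenecks(utilization)
print(bottlenecks)
```

Lowering `threshold` below 1.0 would flag resources that are merely close to saturation, which buys even more lead time.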

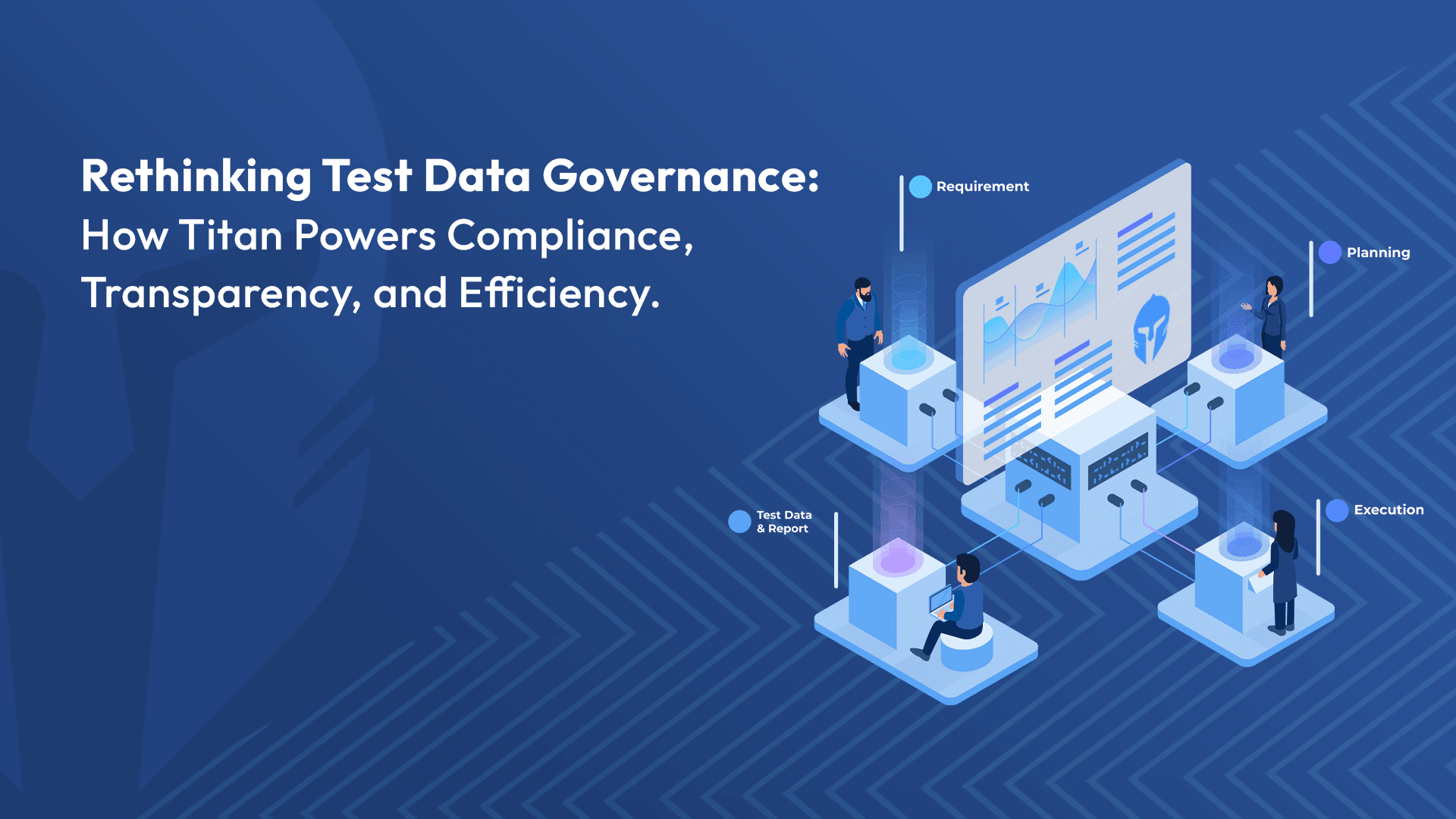

Regulatory Deadline Backplanning

Homologation and certification deadlines typically live in programme management systems with no live connection to the test schedule. Minor delays accumulate quietly. Teams sometimes realize weeks too late that a regulatory window has been missed.

Scheduling and verification planning modules that already sit close to this problem make an AI layer genuinely viable. It could close the gap by:

- Ingesting certification and homologation deadlines as fixed anchors

- Automatically planning a full test campaign schedule backwards from those dates

- Evaluating every subsequent schedule change against compliance milestones in real time

- Triggering alerts the moment a delay puts a regulatory deadline at risk

The deadline stops being a distant fixed point the schedule occasionally glances at. It becomes something the schedule is actively defending, continuously.
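Backplanning from a fixed deadline is, at its core, a reverse pass over the campaign. The sketch below works backwards from a certification date to find the latest start for each test, then checks whether an actual start date puts the deadline at risk. It assumes a strictly sequential campaign and counts calendar days with no weekends or holidays; the campaign contents, dates and function names are illustrative only.

```python
from datetime import date, timedelta

# Hypothetical campaign in execution order: (test name, duration in days)
CAMPAIGN = [("visual_inspection", 2), ("emc_scan", 5), ("crash_test", 3)]


def backplan(campaign, deadline):
    """Latest (start, finish) per test so the last test ends on the deadline."""
    plan = {}
    finish = deadline
    for name, duration in reversed(campaign):
        start = finish - timedelta(days=duration)
        plan[name] = (start, finish)
        finish = start  # the preceding test must finish by this start
    return plan


def deadline_at_risk(plan, test, actual_start):
    """True if the test starts later than its latest allowable start."""
    latest_start, _ = plan[test]
    return actual_start > latest_start


plan = backplan(CAMPAIGN, deadline=date(2025, 6, 30))
for name, (start, finish) in plan.items():
    print(name, start, finish)
print(deadline_at_risk(plan, "emc_scan", date(2025, 6, 24)))
```

Re-running `deadline_at_risk` on every schedule change is the simplest version of evaluating changes against compliance milestones as they happen.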

The Bigger Picture

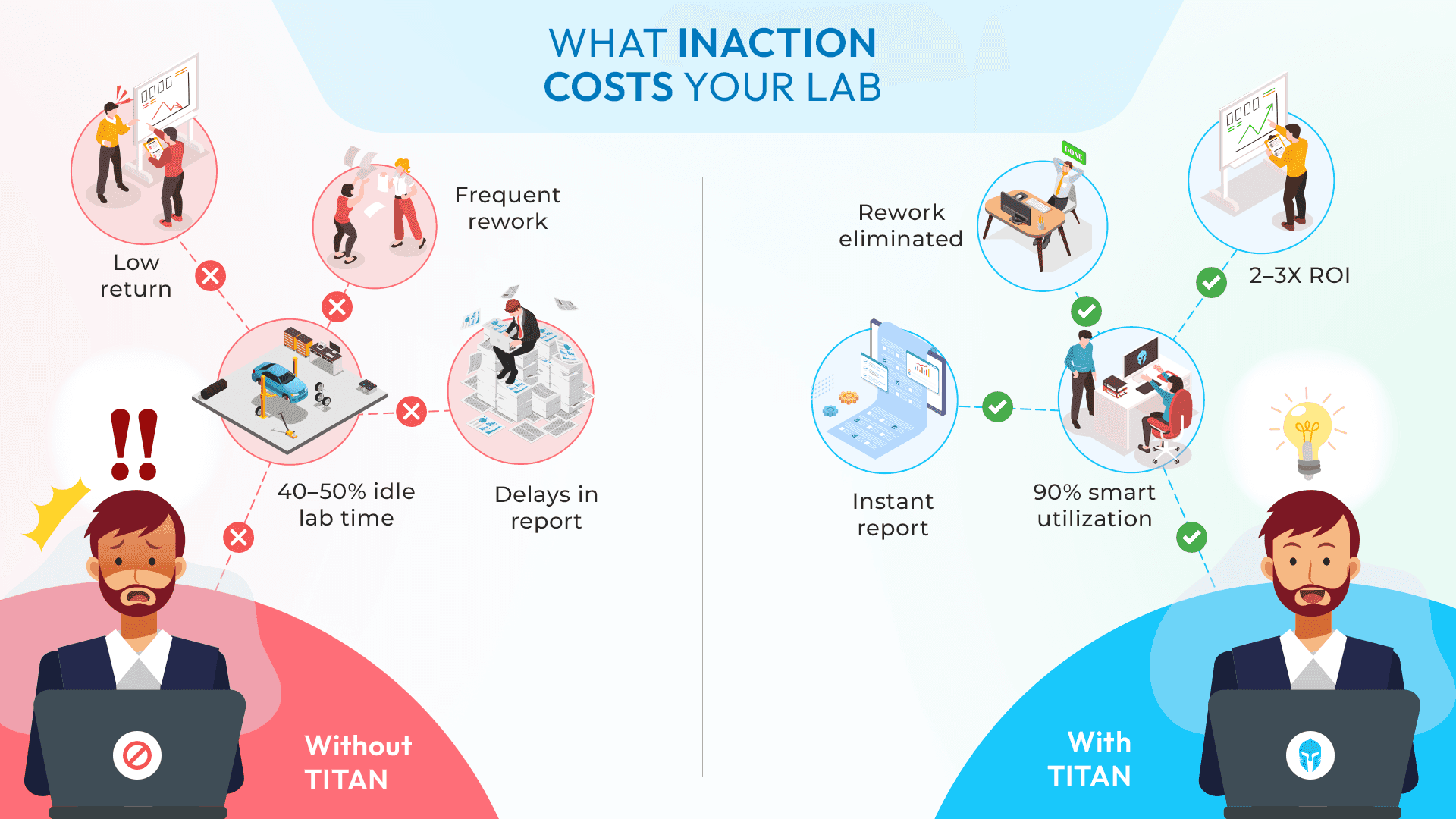

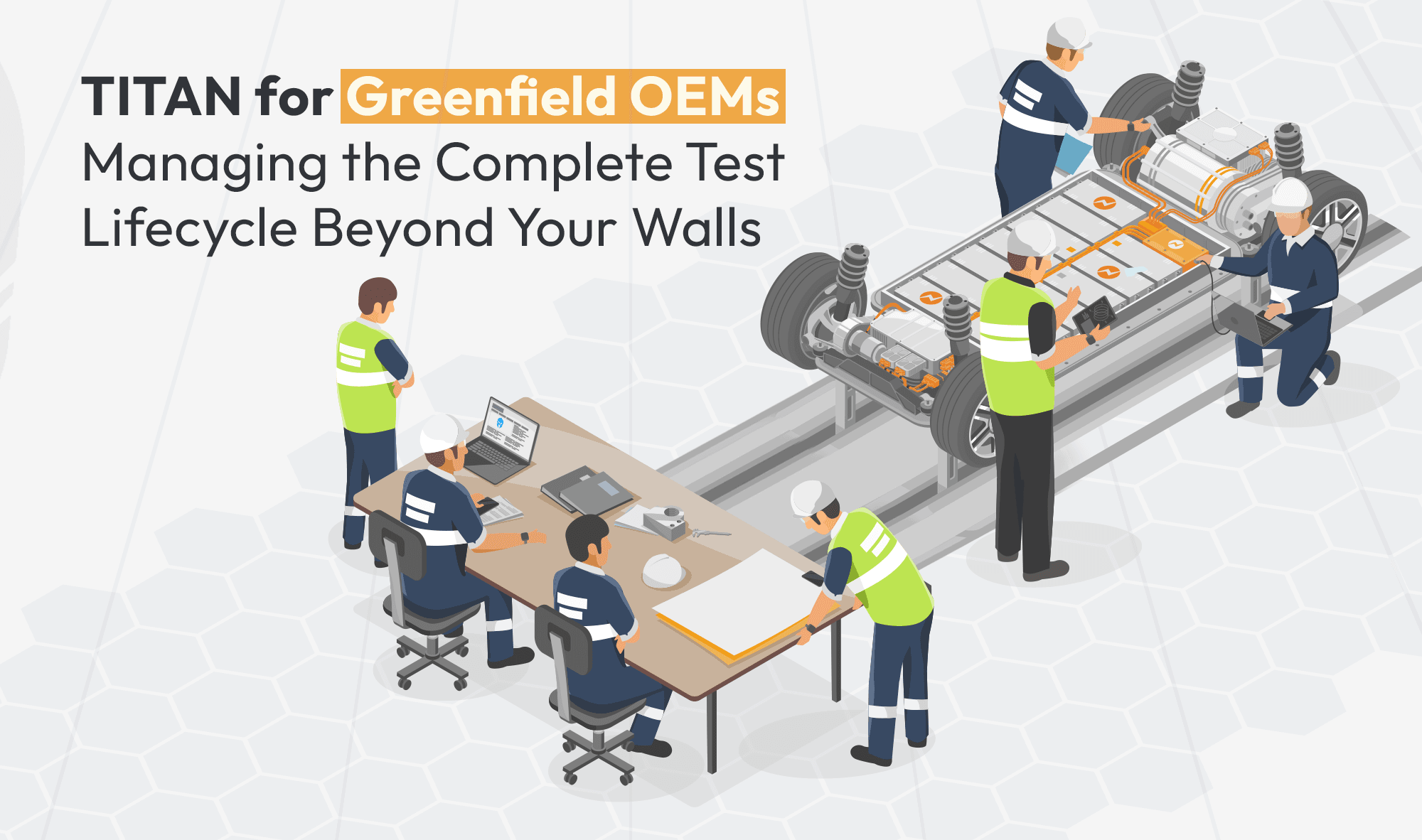

Taken individually, each of these capabilities addresses a real and recurring pain point. Taken together, they represent a shift from reactive coordination to something closer to predictive test lifecycle management.

A unified data architecture across requirements, tests, lab operations, assets and scheduling is precisely what makes this kind of AI application viable. The connected foundation is already there. What these use cases explore is what becomes possible when it is put to work in new ways.

The workflows are still being defined. But the direction is clear.

Where Test Planning Meets Real Execution.

Manage schedules, labs, equipment and teams in one place.