What Is a Design Verification Plan (DVP)? A Practical Guide for Test Engineers

Author

Neerav Singh

Technical Product Specialist


Reading Time

4 min read


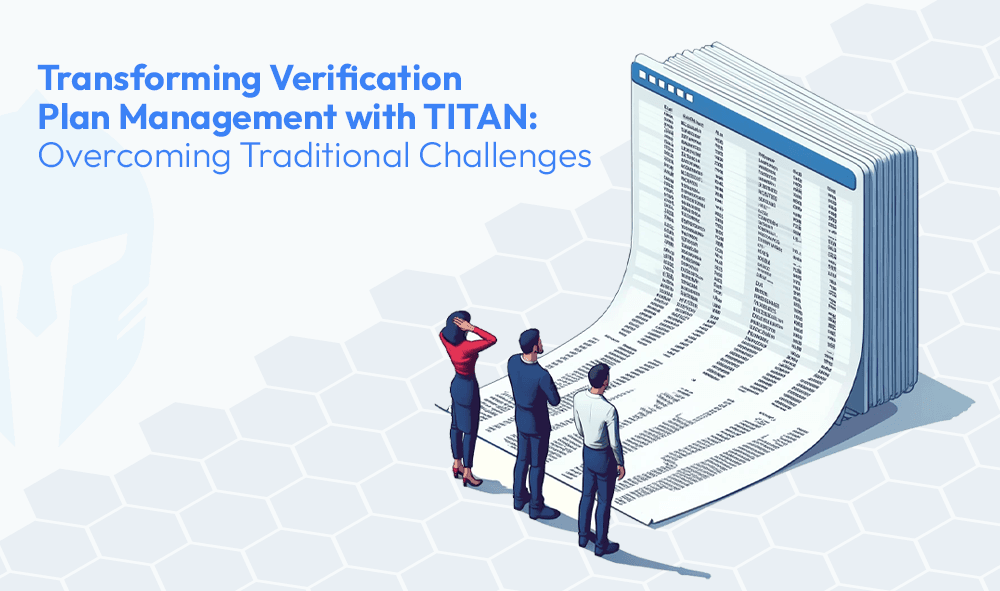

Design Verification Plans (DVPs) sit at the heart of any product development program. They define what gets tested, when, how and to what standard. A well-executed DVP is what stands between a product that performs in the field and one that fails in ways nobody anticipated. And yet, despite the weight of that responsibility, many engineering teams still manage their DVPs in spreadsheets.

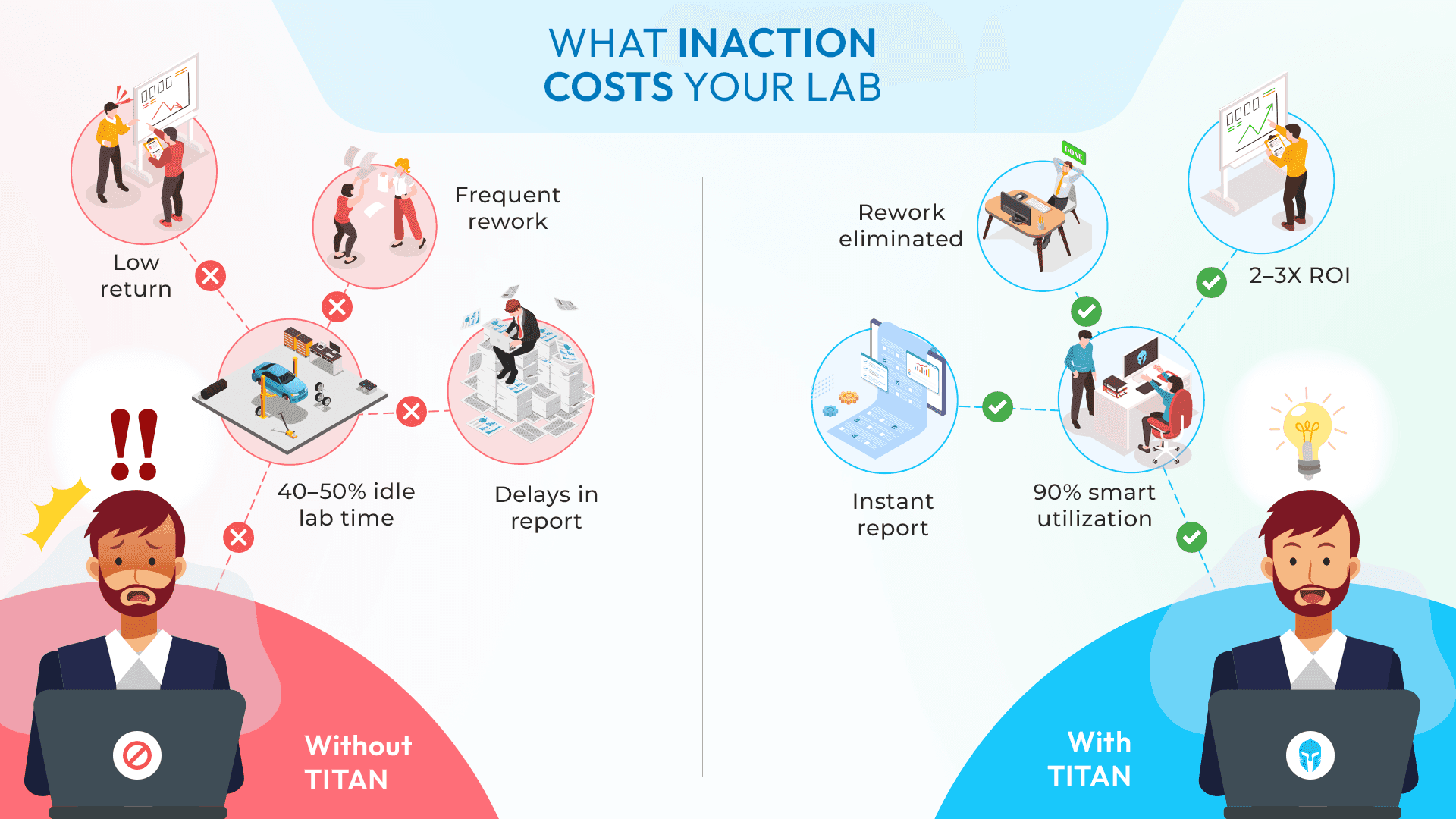

That's not an indictment of the people doing it. It reflects how long the right platform took to arrive. Spreadsheets were the best option available for a long time. But product complexity has outpaced what a grid of cells can realistically handle, and the cost of that mismatch shows up in delayed approvals, miscommunicated schedules, missed test windows and project burndown charts that drift off plan before anyone notices.

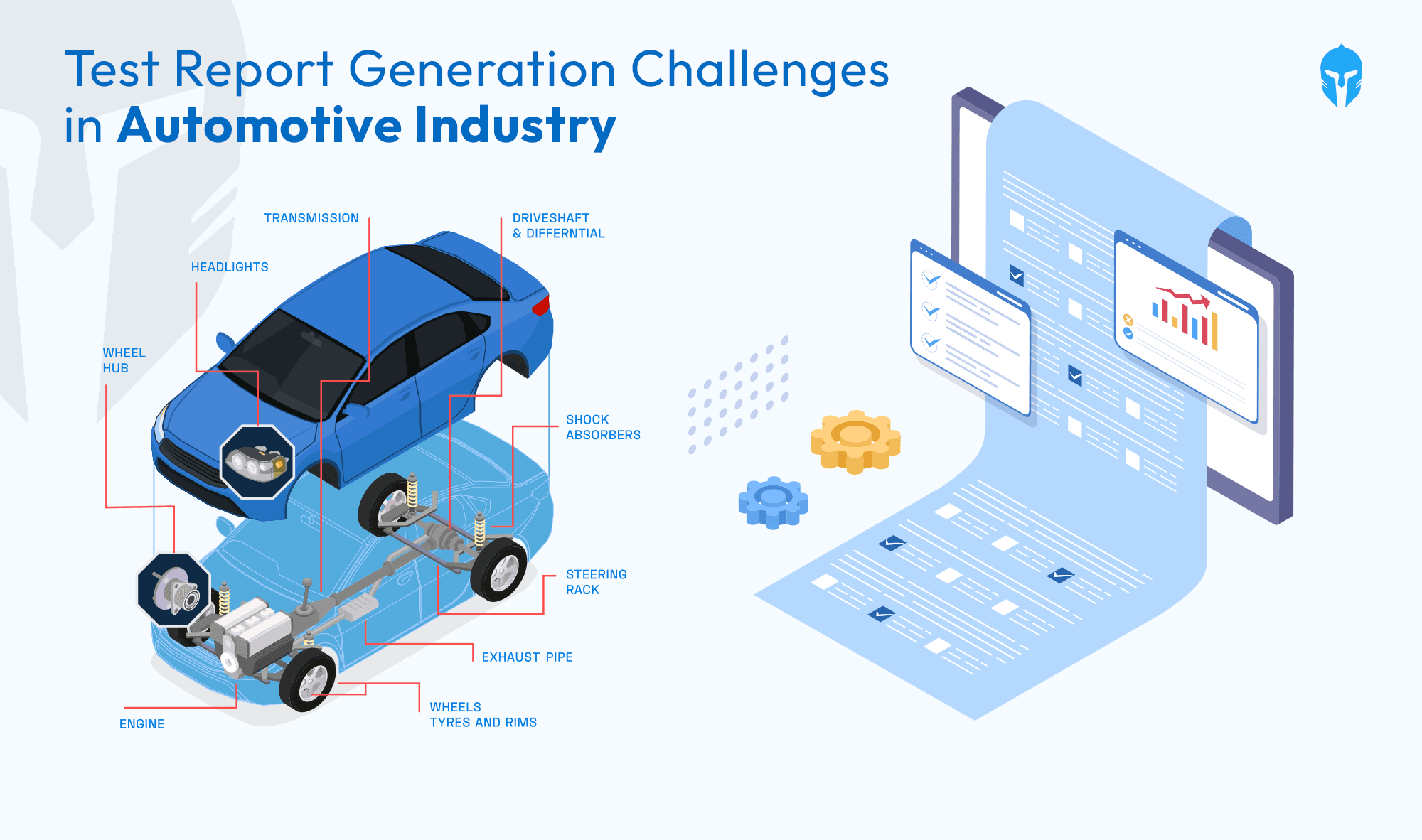

What Is DVP&R?

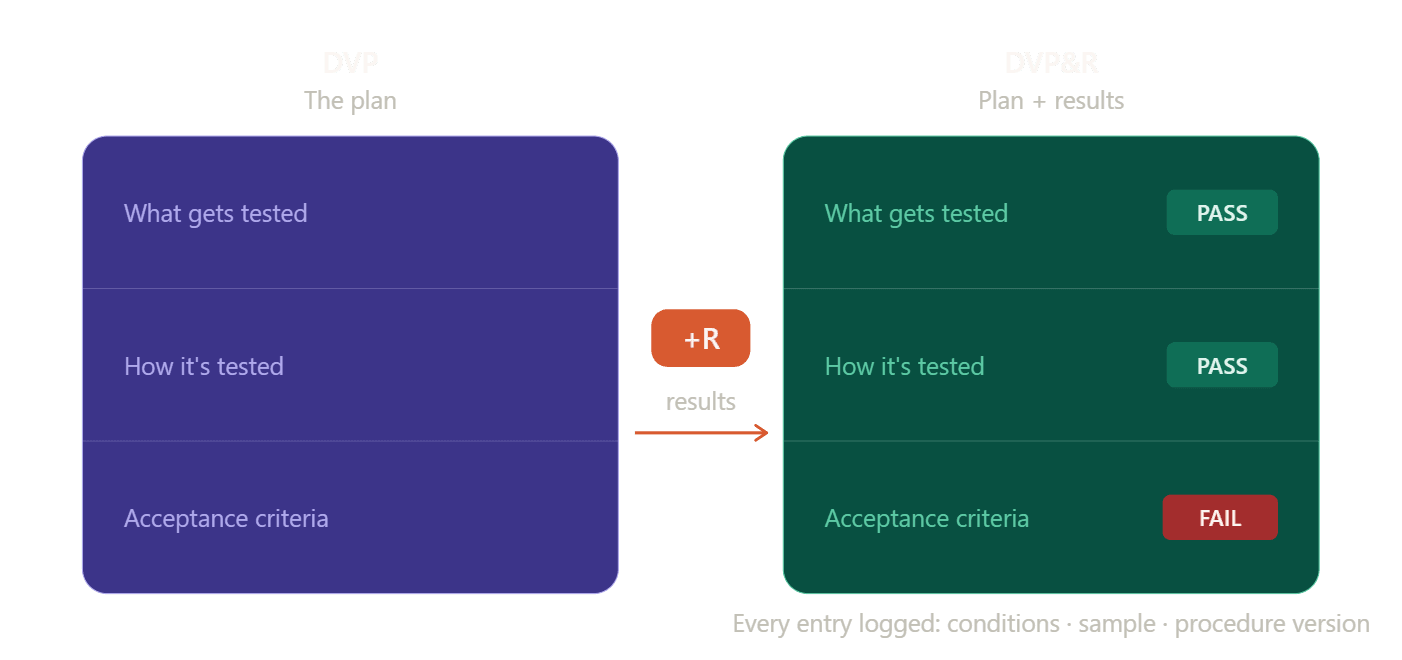

DVP&R stands for Design Verification Plan and Report. It makes the same structured commitment as a DVP, defining what gets tested, how, and to what standard, but with one critical addition: the results.

Where a DVP is the plan, a DVP&R treats the plan and its outcomes as a single, continuous record. Every test entry in the plan has a corresponding result logged against it (pass, fail, conditional or pending), along with the conditions under which it was run, the sample it was run on and the procedure version that governed it. The plan and the report are not two separate documents produced at different points in the program. They are one traceable record that grows as the program progresses.
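To make the idea of a single record concrete, here is a minimal sketch of how a DVP&R entry might be modelled, assuming a simple in-memory structure. The class and field names (`DvprEntry`, `RunResult`, `ResultStatus`) are hypothetical illustrations, not TITAN's data model; only the four result states and the logged context (conditions, sample, procedure version) come from the description above.

```python
from dataclasses import dataclass, field
from enum import Enum


class ResultStatus(Enum):
    # The four result states named in the text
    PASS = "pass"
    FAIL = "fail"
    CONDITIONAL = "conditional"
    PENDING = "pending"


@dataclass
class RunResult:
    status: ResultStatus
    conditions: str          # environment the test ran under, e.g. "23 C, 50% RH"
    sample_id: str           # which test article the result was obtained on
    procedure_version: str   # revision of the procedure that governed the run


@dataclass
class DvprEntry:
    test_id: str
    requirement_id: str
    acceptance_criteria: str
    # Results are appended to the plan entry itself, so plan and report share one record
    results: list[RunResult] = field(default_factory=list)

    def log_result(self, result: RunResult) -> None:
        self.results.append(result)

    def latest_status(self) -> ResultStatus:
        # An entry with no logged run is reported as pending
        return self.results[-1].status if self.results else ResultStatus.PENDING
```

Because results are appended rather than overwritten, a retest after a failure keeps the failed run in the history alongside the later pass.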

This distinction matters more than it might appear. When a DVP and its report are managed separately (the plan in one spreadsheet, the results assembled into a report at the end), there is always a gap between what was planned and what actually happened, and closing that gap requires manual reconciliation. In programs with hundreds of test activities across multiple teams, that reconciliation is where significant time and traceability both get lost.
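The reconciliation step described above can be sketched as a comparison between the two sources. This assumes, hypothetically, that the plan is reduced to a set of test IDs and the separately kept results to `(test_id, status)` rows; the function name `reconcile` is illustrative only.

```python
def reconcile(planned_test_ids: set[str],
              result_rows: list[tuple[str, str]]) -> dict[str, set[str]]:
    """Compare a plan with separately kept results (hypothetical input shapes).

    planned_test_ids: test IDs listed in the plan spreadsheet
    result_rows: (test_id, status) pairs collected for the report
    """
    reported = {test_id for test_id, _status in result_rows}
    return {
        # Planned tests with no result recorded anywhere
        "missing_results": planned_test_ids - reported,
        # Results that reference tests no longer (or never) in the plan
        "orphan_results": reported - planned_test_ids,
    }
```

In a single DVP&R record neither set can arise, because every result is logged against an existing plan entry.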

The "R" is not an administrative formality. It is the evidence layer that turns a test program into a defensible record, one that supports certification sign-off, responds to audit requests and gives program leadership a live picture of where verification stands, not where it was planned to stand six weeks ago.

Why Is a Design Verification Plan Important?

A DVP is not just a list of tests. It is a commitment, signed by the right people, that a product will be verified and validated against its design requirements through a defined set of tests, on defined samples, within a defined timeline. When it is managed as a DVP&R, that commitment and its results stay linked as one traceable record throughout the verification and validation process. Every entry in the plan therefore carries implications for resource availability, lab scheduling, test article (sample/prototype/DUT/UUT) readiness and downstream reporting.

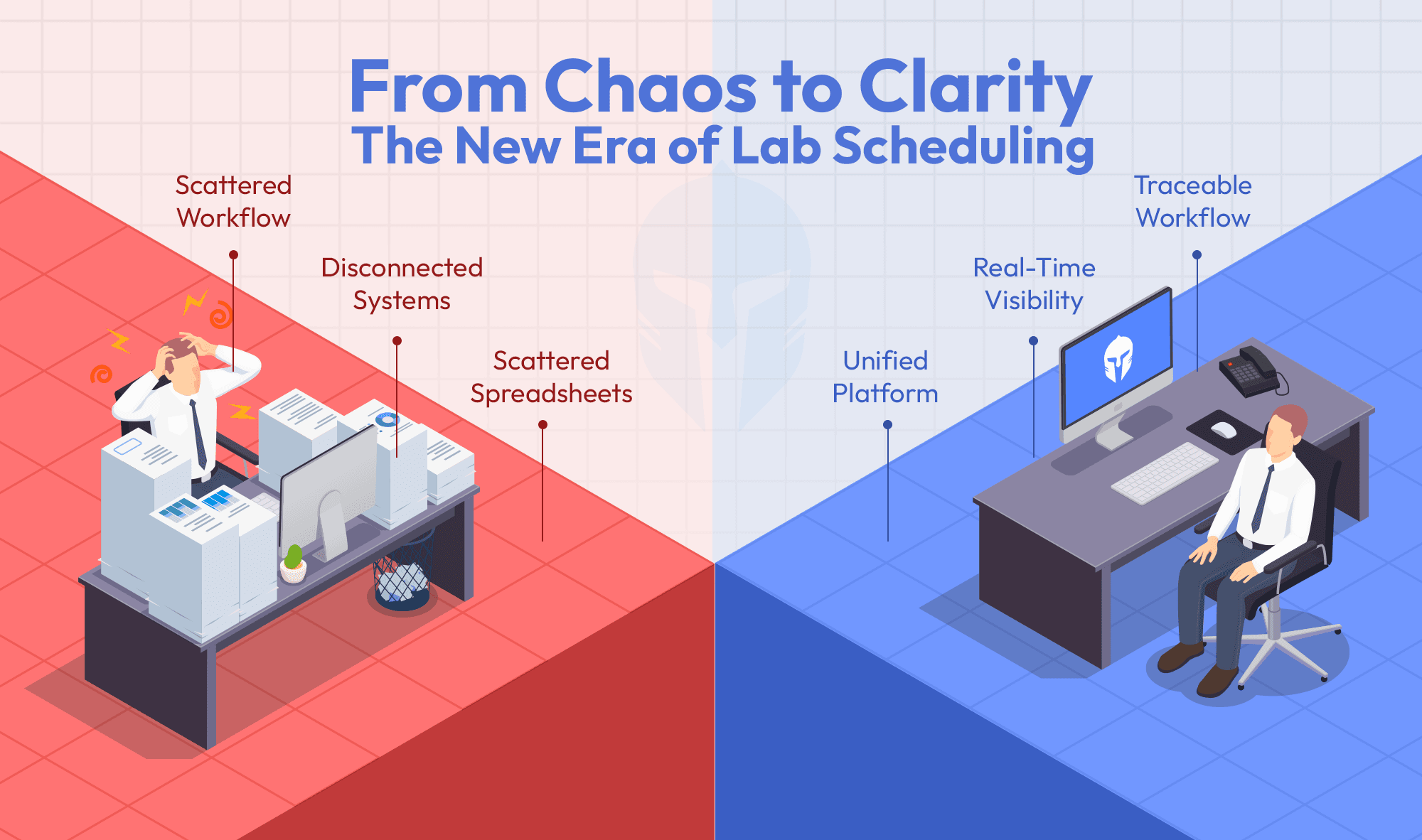

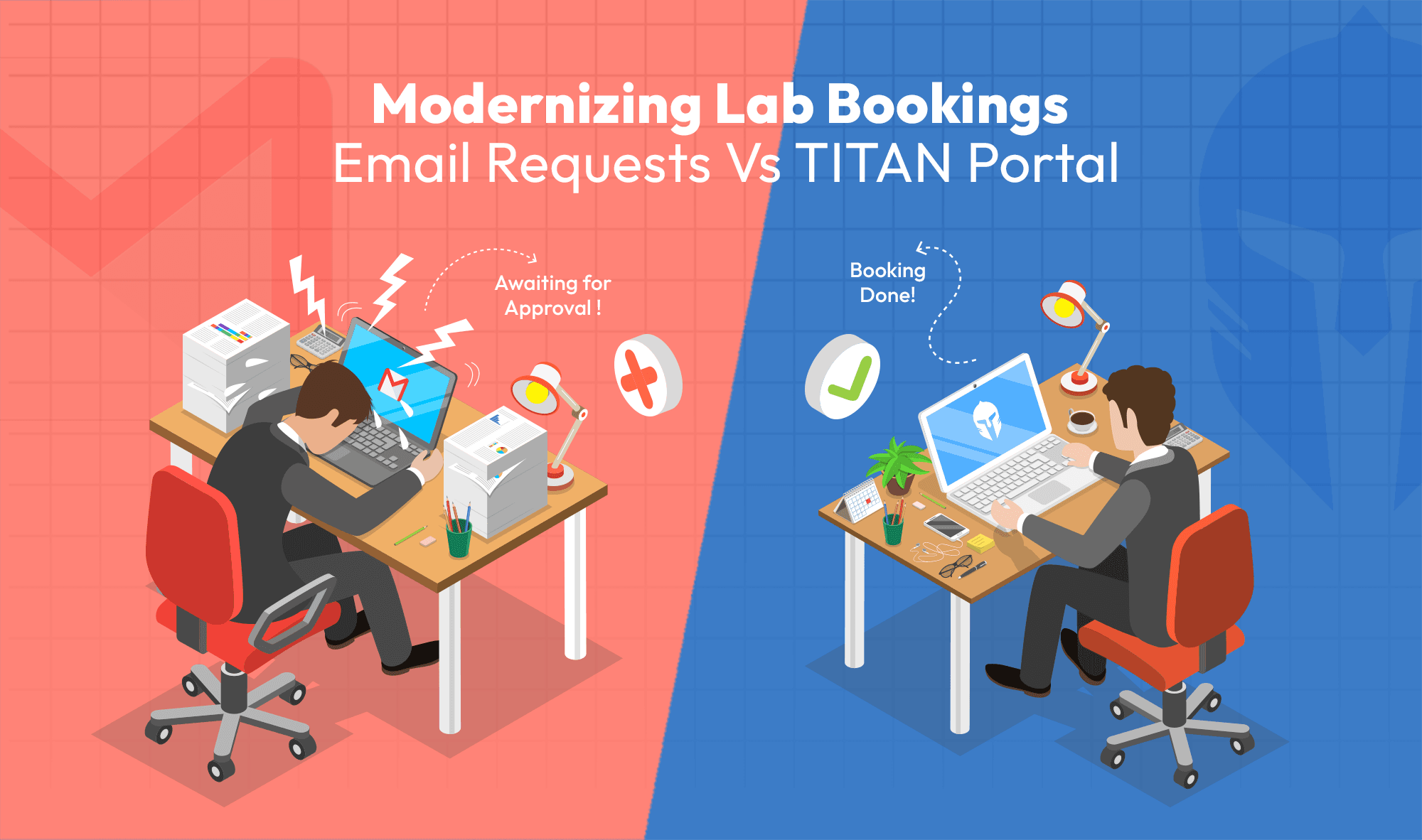

Managing all of that in shared documents creates a quiet kind of friction. Versions proliferate. Approvals happen over email chains. Resource conflicts only surface when someone tries to book a lab that’s already spoken for. And when a test slips, the downstream impact on the test schedule is invisible until it’s already too late to recover cleanly.

Where DVP Management Goes Wrong

Most DVP-related problems trace back to the same structural issues. They are particularly visible when teams manage DVPs at scale, where the volume and interdependency of tests make informal processes a source of risk.

Understanding them is the first step toward addressing them:

- Initiation gaps: Test plans are often built informally, with tests added by memory or precedent rather than drawn from a validated test catalogue. In complex product programs, verification plans can include hundreds of requirements and thousands of individual test activities, and without a structured catalogue, test teams struggle to maintain consistent coverage and traceability across those tests.

- Approval bottlenecks: Sending tests for approval one at a time, or via email, introduces latency. In high-velocity test programs, this compounds quickly and delays the entire test planning cycle.

- Resource conflicts: Without visibility into lab calendars, equipment availability, and operator schedules at the point of planning, scheduling is essentially optimistic guessing.

- Static plans in dynamic environments: Tests slip. Samples aren't ready. Equipment fails. A plan that can't be easily modified without breaking its structure forces engineers to manage change manually, outside the system.

- Invisible project impact: Test delays don't exist in isolation. They affect milestones. Without a direct link between test execution status and project progress, leadership is always operating on lagging information.

This is exactly the set of problems TITAN was built to address.

What Are the Key Components of a DVP?

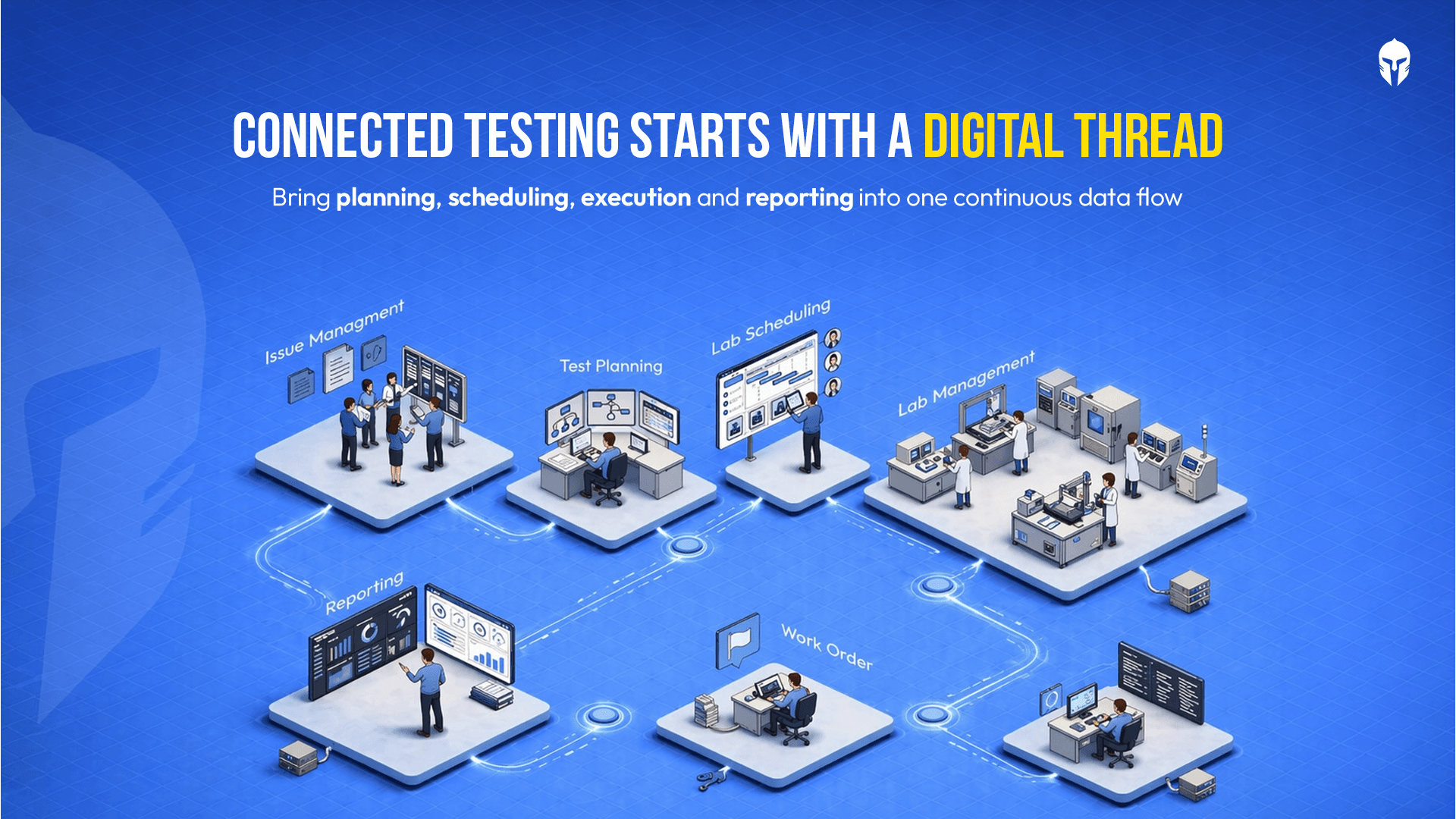

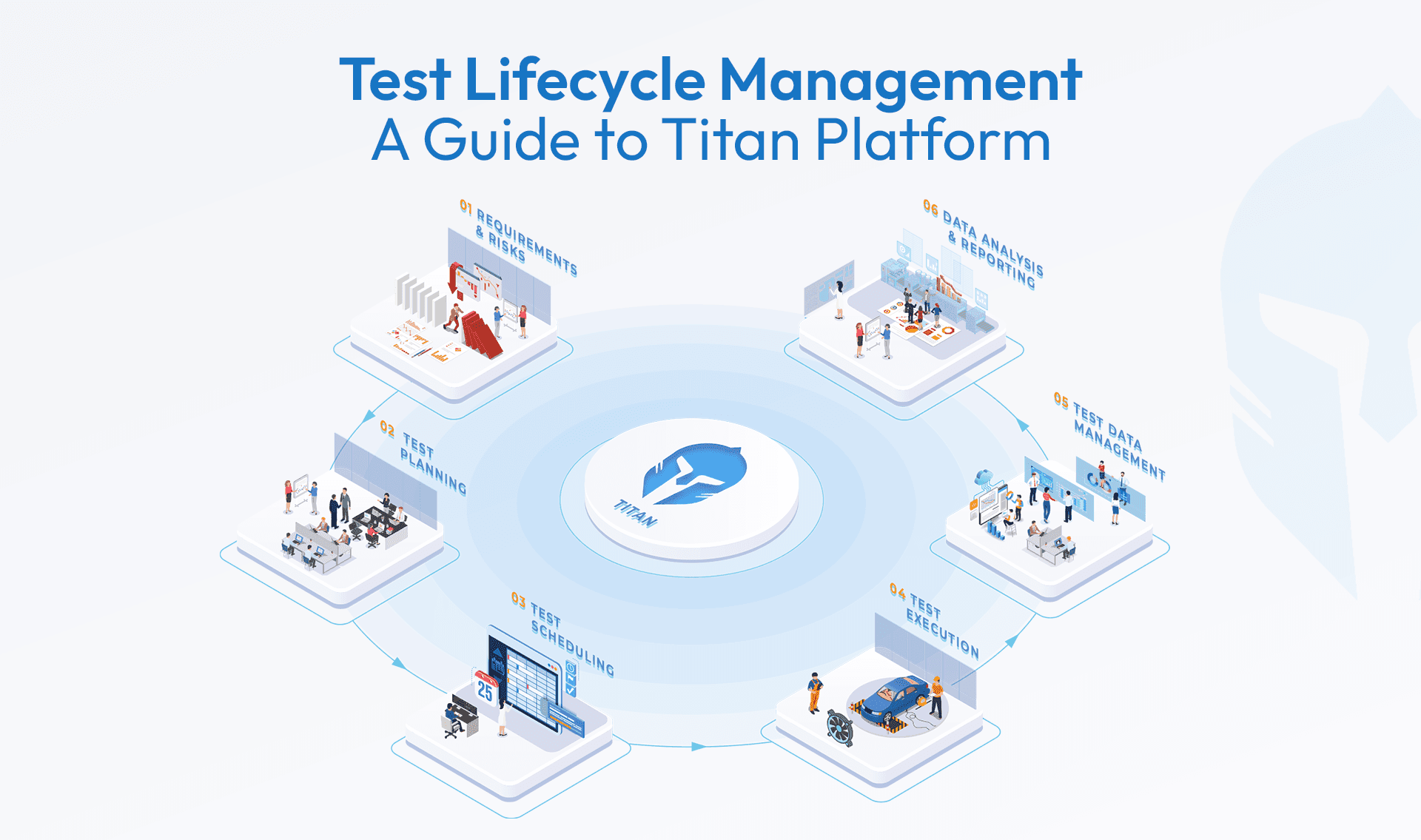

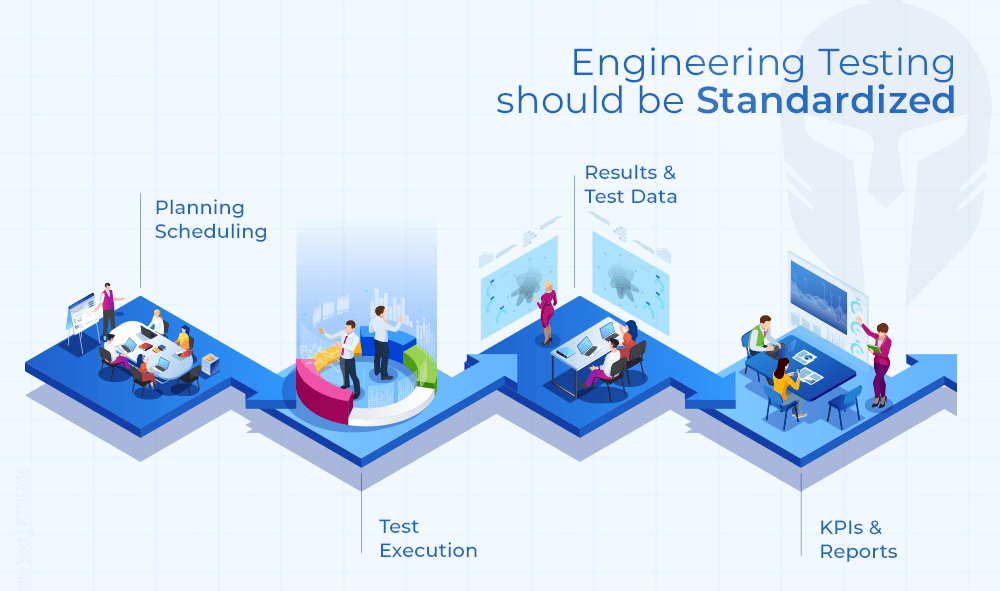

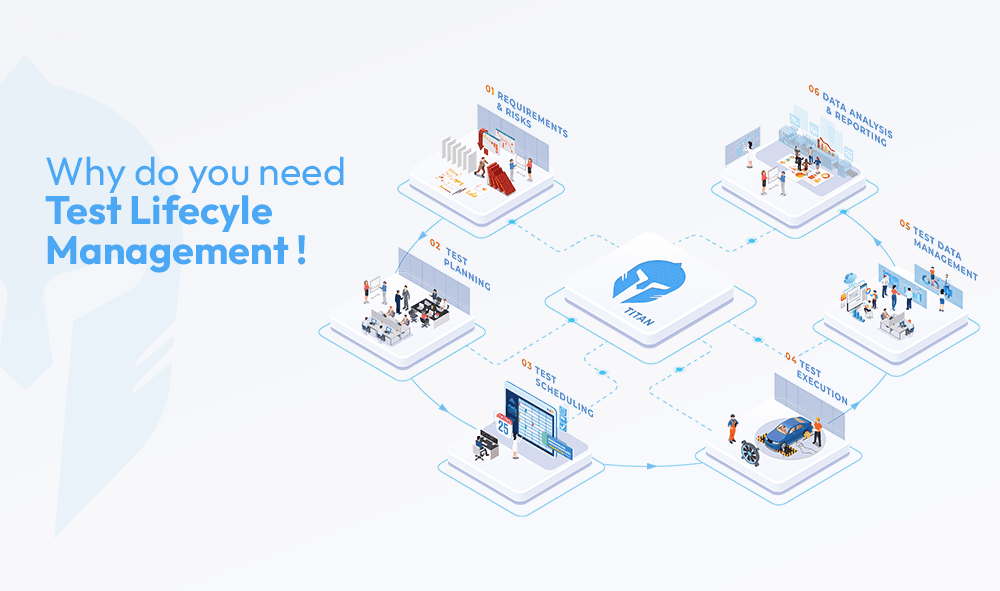

A properly managed DVP process has a clear lifecycle: initiation, approval, resource assignment, scheduling, execution, and closure. Each phase has dependencies on the one before it, and problems introduced early compound through every subsequent stage.
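One way to enforce that ordering is to treat the six phases as an ordered sequence and allow a test to advance only one step at a time. The sketch below uses hypothetical names (`Phase`, `advance`) and is not a description of any particular tool; it simply rejects attempts to skip a phase, so a test cannot reach execution without passing through approval and resource assignment.

```python
from enum import IntEnum


class Phase(IntEnum):
    # Lifecycle order as listed in the text
    INITIATION = 1
    APPROVAL = 2
    RESOURCE_ASSIGNMENT = 3
    SCHEDULING = 4
    EXECUTION = 5
    CLOSURE = 6


def advance(current: Phase, target: Phase) -> Phase:
    """Move a test to the next phase; skipping ahead is rejected."""
    if target != current + 1:
        raise ValueError(f"cannot move from {current.name} to {target.name}")
    return target
```

A real system would also need controlled backward moves, for example returning a slipped test to scheduling; this sketch deliberately leaves that out.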

During initiation, tests should be drawn from a curated catalogue rather than created ad hoc. This ensures that each test has a defined methodology, acceptance criteria, and standard before it ever gets added to a plan. Sample types and sizes should be specified at the point of planning, not figured out later when test articles are already being consumed.
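A minimal sketch of catalogue-driven initiation follows, assuming the catalogue is a dictionary keyed by a test code. `CatalogueTest`, `PlannedTest` and `add_to_plan` are hypothetical names. The rule they illustrate comes from the paragraph above: a test enters the plan only if it already has a catalogued methodology, acceptance criteria and standard, and only once its sample type and size are stated.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CatalogueTest:
    # A curated definition fixed before the test is ever planned
    code: str
    methodology: str
    acceptance_criteria: str
    standard: str


@dataclass(frozen=True)
class PlannedTest:
    definition: CatalogueTest
    sample_type: str
    sample_size: int


def add_to_plan(catalogue: dict[str, CatalogueTest], code: str,
                sample_type: str, sample_size: int) -> PlannedTest:
    """Create a plan entry only from a catalogue test with samples specified."""
    if code not in catalogue:
        raise KeyError(f"{code} is not in the test catalogue")
    if not sample_type or sample_size < 1:
        raise ValueError("sample type and size must be set at planning time")
    return PlannedTest(catalogue[code], sample_type, sample_size)
```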

Approval workflows need to support bulk actions. In a test program where dozens of tests need sign-off before execution can begin, single-test approval flows are a bottleneck waiting to happen. The ability to submit a full verification plan for review, route it to multiple approvers and receive consolidated sign-off saves significant time.
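Bulk approval can be sketched as one review object that covers every test in the plan and is signed off only when all assigned approvers have approved. `PlanReview` and its methods are hypothetical; the sketch assumes each approver gives a single yes/no decision that can be revised.

```python
from dataclasses import dataclass, field


@dataclass
class PlanReview:
    """One review request covering a whole verification plan (hypothetical model)."""
    test_ids: list[str]
    approvers: set[str]
    decisions: dict[str, bool] = field(default_factory=dict)

    def record(self, approver: str, approved: bool) -> None:
        if approver not in self.approvers:
            raise ValueError(f"{approver} is not on this review")
        self.decisions[approver] = approved

    def signed_off(self) -> bool:
        # Consolidated sign-off: every assigned approver has approved
        return all(self.decisions.get(a) is True for a in self.approvers)

    def approved_tests(self) -> list[str]:
        # A single sign-off releases every test in the plan at once
        return list(self.test_ids) if self.signed_off() else []
```

The design choice is that approvers act once per plan rather than once per test, which is where the time saving in the paragraph above comes from.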

Resource allocation should happen within the same system where the plan lives. Assigning a lab, piece of equipment, and test operator to a scheduled test, and then notifying those stakeholders automatically, eliminates the coordination overhead that normally lives in someone's inbox.
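Checking resources at the point of assignment comes down to an interval-overlap test per resource. The sketch below assumes each lab, piece of equipment or operator is booked separately as a `Booking` with an exclusive end time; `Booking`, `conflicts`, `assign` and the `notify` callback are hypothetical names, and `notify` stands in for whatever messaging a real system would use.

```python
from collections.abc import Callable
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class Booking:
    test_id: str
    resource: str      # a lab, a piece of equipment, or an operator
    start: datetime
    end: datetime      # treated as exclusive, so back-to-back bookings are allowed


def conflicts(new: Booking, calendar: list[Booking]) -> list[Booking]:
    """Return existing bookings on the same resource whose time windows overlap."""
    return [
        b for b in calendar
        if b.resource == new.resource and new.start < b.end and b.start < new.end
    ]


def assign(new: Booking, calendar: list[Booking],
           notify: Callable[[Booking], None]) -> bool:
    """Book the resource only if it is free, then notify the stakeholders."""
    if conflicts(new, calendar):
        return False
    calendar.append(new)
    notify(new)  # hypothetical hook: e-mail, chat or in-app message
    return True
```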

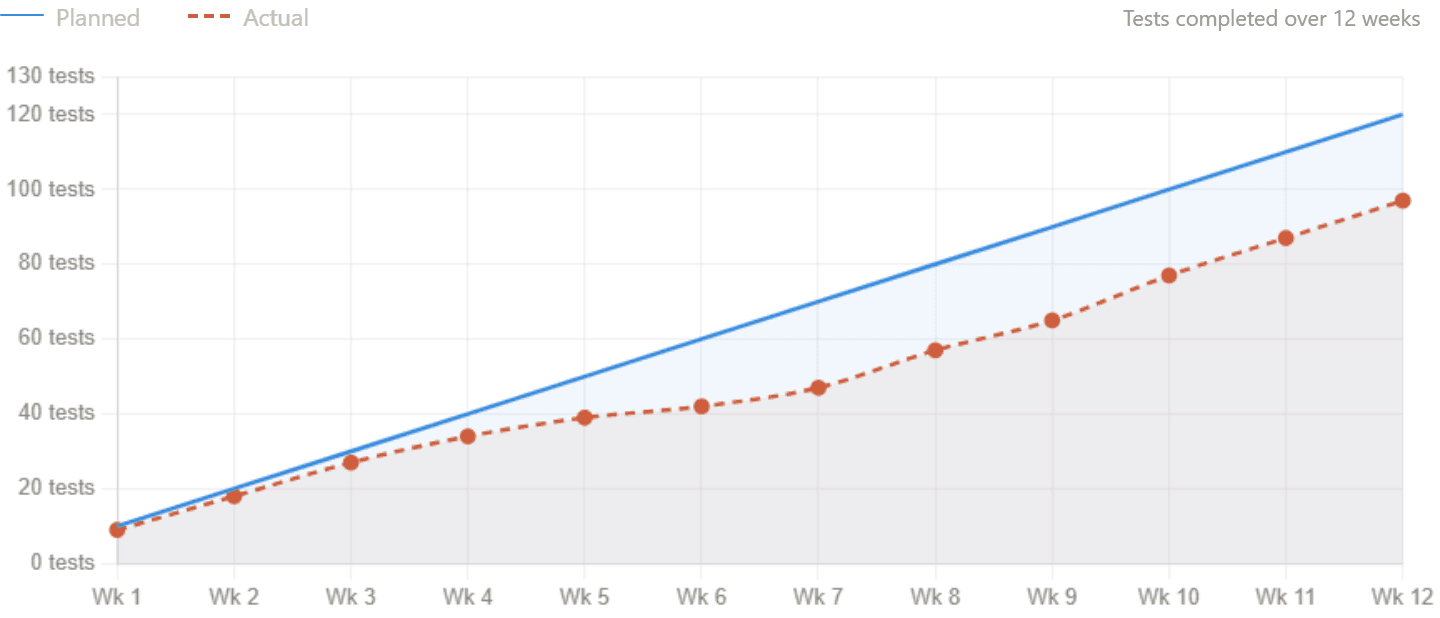

Once execution is underway, the plan needs to be a live document. Test status, emerging issues and uploaded reports should be visible at the test level and rolled up to the project level. The gap between planned progress and actual progress is one of the most useful signals in verification and validation, and it should be surfaced continuously rather than reconstructed at review meetings.
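A roll-up from test level to project level can be as simple as counting statuses and comparing completions with the plan. The status labels and the `roll_up` function below are hypothetical assumptions for illustration; the point is that the planned-versus-actual gap is computed from live test data rather than assembled by hand.

```python
from collections import Counter


def roll_up(statuses: dict[str, str], planned_done_by_today: int) -> dict[str, float]:
    """Summarise test-level status for the project (hypothetical status labels).

    statuses: test_id -> one of "not_started", "in_progress", "complete", "blocked"
    planned_done_by_today: how many tests the plan says should be complete now
    """
    counts = Counter(statuses.values())
    total = len(statuses)
    done = counts["complete"]
    return {
        "percent_complete": 100.0 * done / total if total else 0.0,
        # Positive gap means behind plan
        "gap_vs_plan": planned_done_by_today - done,
        "blocked": counts["blocked"],
    }
```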

Rescheduling is inevitable. The question is whether the system supports it without creating manual rework. Maintaining test sequencing through schedule changes, identifying resource conflicts before they become problems and propagating changes to affected stakeholders automatically are the difference between a manageable test program and a reactive one.
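Preserving sequencing through a slip can be sketched as forward propagation over a dependency graph: when a test moves, any successor that would now start before its predecessor finishes is pushed back, and so on down the chain. The sketch assumes durations in whole days, an acyclic `depends_on` map and hypothetical names throughout. It only pushes successors later; it does not pull work earlier or check predecessors of the moved test.

```python
from datetime import date, timedelta


def reschedule(starts: dict[str, date], durations: dict[str, int],
               depends_on: dict[str, list[str]], slipped: str,
               new_start: date) -> tuple[dict[str, date], set[str]]:
    """Move one test and push back successors that would now start too early.

    Returns the updated start dates and the IDs whose dates changed, so the
    affected stakeholders can be notified. The input dict is not modified.
    """
    starts = dict(starts)
    starts[slipped] = new_start
    changed = {slipped}
    queue = [slipped]
    while queue:
        current = queue.pop()
        finish = starts[current] + timedelta(days=durations[current])
        for test, preds in depends_on.items():
            # A successor may not start before this predecessor finishes
            if current in preds and starts[test] < finish:
                starts[test] = finish
                changed.add(test)
                queue.append(test)
    return starts, changed
```

Returning the set of changed tests is what makes automatic notification possible: only the owners of those tests need to hear about the slip.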

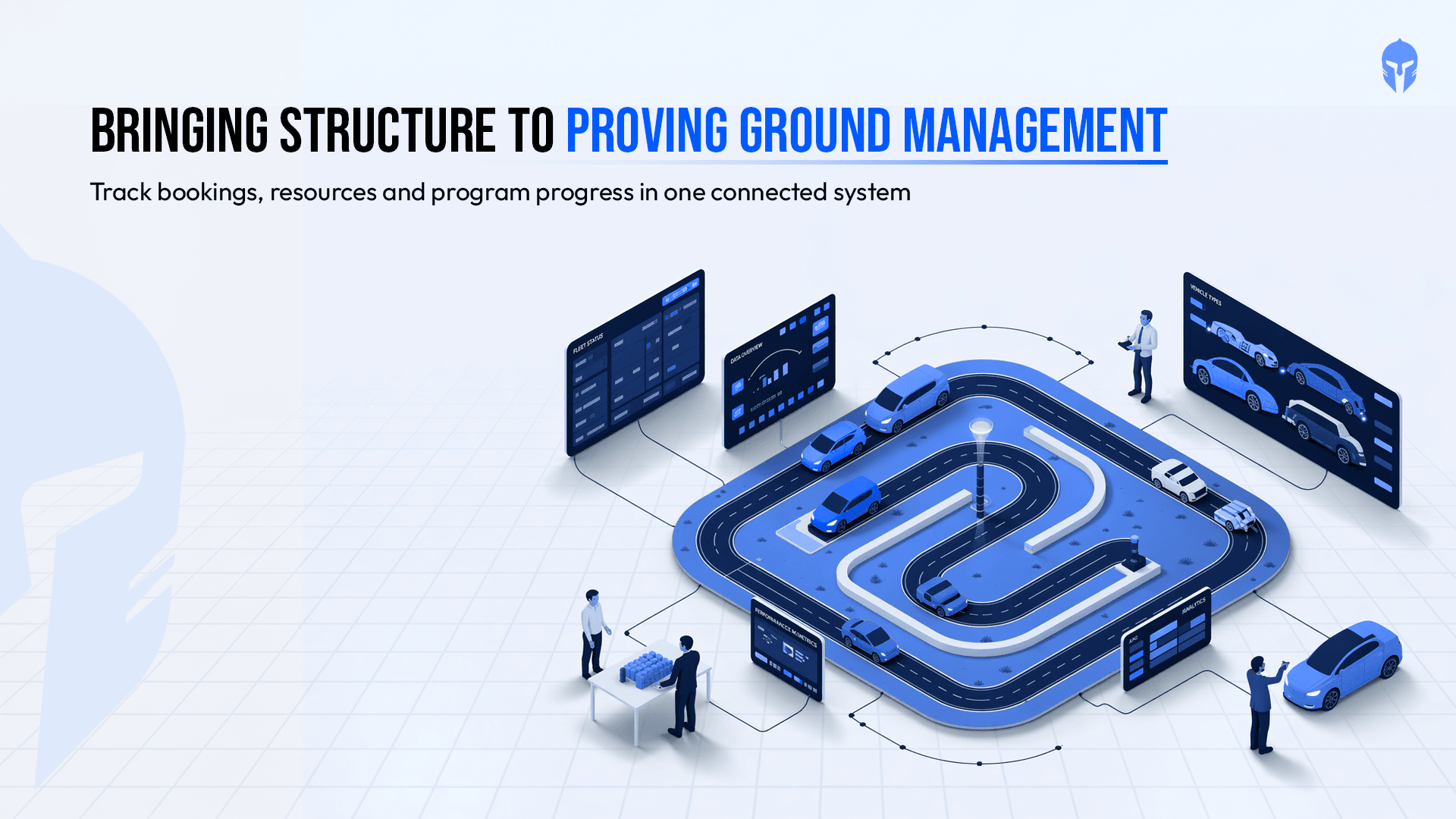

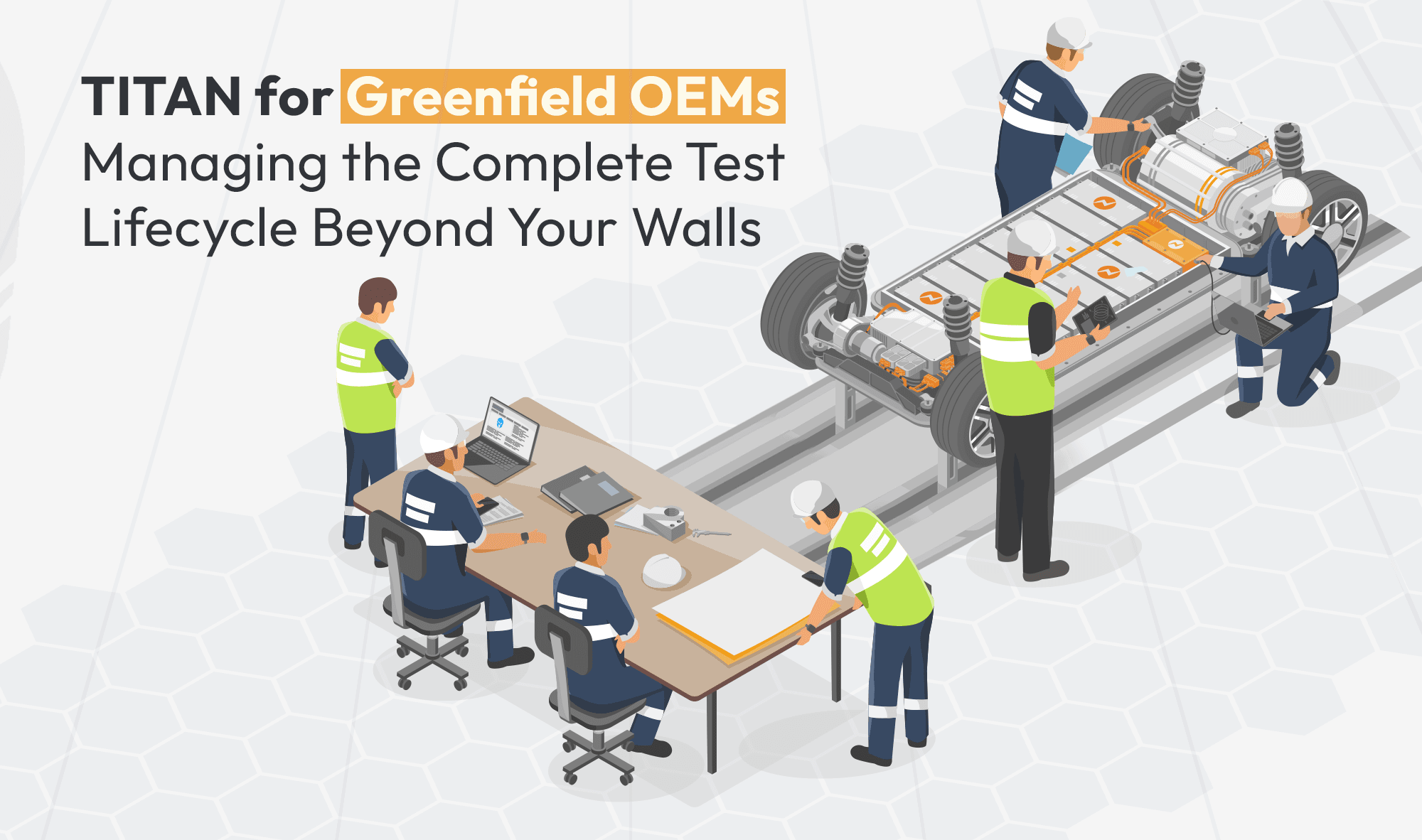

How Do Teams Manage DVP at Scale?

Individual test tracking solves a local problem. What most test programs are missing is the project-level perspective: how does the current state of all verification plans translate into project health? A burndown view that reflects actual versus planned test completion gives engineering leads and program managers a shared, accurate picture of where the program stands, without requiring a weekly reconciliation exercise.
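The data behind such a burndown view is a running count of remaining tests per reporting period, computed once from the plan and once from actual completions. This is a minimal sketch under hypothetical inputs (completions per period as two equal-length lists); `burndown` is an illustrative name, not a product API.

```python
def burndown(total_tests: int, planned_done: list[int],
             actual_done: list[int]) -> list[tuple[int, int, int]]:
    """Remaining-test counts per reporting period, planned vs actual.

    planned_done / actual_done: tests completed in each period so far.
    Returns (period, planned_remaining, actual_remaining) rows.
    """
    rows = []
    planned_left = actual_left = total_tests
    for period, (p, a) in enumerate(zip(planned_done, actual_done), start=1):
        planned_left -= p
        actual_left -= a
        rows.append((period, planned_left, actual_left))
    return rows
```

For a 100-test plan with planned completions of 20, 30 and 30 and actual completions of 15, 25 and 35, the rows show the program five and then ten tests behind before closing back to five behind in the third period.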

For example, in large vehicle or aerospace programs, verification plans can include 1,000+ requirements and several thousand individual test activities spread across multiple teams, and studies suggest engineers can spend 20–30% of their time reconciling test information across disconnected systems. A structured DVP system brings those elements together, ensuring requirements, tests and results remain aligned. That kind of visibility turns a verification plan from a static document into a living instrument for managing product quality through development.

Making the Shift from Static Document to Living System

A DVP that is connected to the lab calendar, test article records, approval workflows and project timeline becomes the system of record the entire program runs against rather than a document that trails behind it.

The practical effect is straightforward. Engineers spend less time chasing information across disconnected tools. Program managers work from live data instead of status updates that are already out of date. When a certifying authority asks for a complete picture of what was tested, in what configuration, and with what outcome, the answer is already there.

For teams under pressure from compressed timelines and increasing regulatory scrutiny, managing a DVP in spreadsheets carries real program risk. The infrastructure to do it properly now exists, and the teams using it are seeing the results in their audit outcomes and in how their programs run daily.

A DVP has always been one of the most consequential artefacts in product development. It deserves a system built to match that responsibility.

Want to see how TITAN simplifies DVP management?

Plan verification, schedule tests, track results, and manage DVP&R in one live system.