3 Questions Your Dashboard Should Answer Instantly

Author

Neerav Singh

Technical Product Specialist

Reading Time

3 min read

There's a framework in operations management that measures any operation across four dimensions: Volume, Variety, Variation and Visibility.

For most automotive test labs, three out of four are well covered.

Volume? Dozens of tests running in parallel across multiple programmes.

Variety? Powertrain, chassis, crash, battery, ADAS: the range is enormous.

Variation? Constant. Shifting timelines, changing requirements, evolving OEM expectations.

And then there's the fourth V. Visibility.

Ask yourself honestly: do you actually have it?

What Leadership Is Really Asking For

Every test manager has been in that meeting. The one where a senior leader asks a simple question, "What's the status of the programme?" and the honest answer is somewhere between "let me check" and "I'll get back to you."

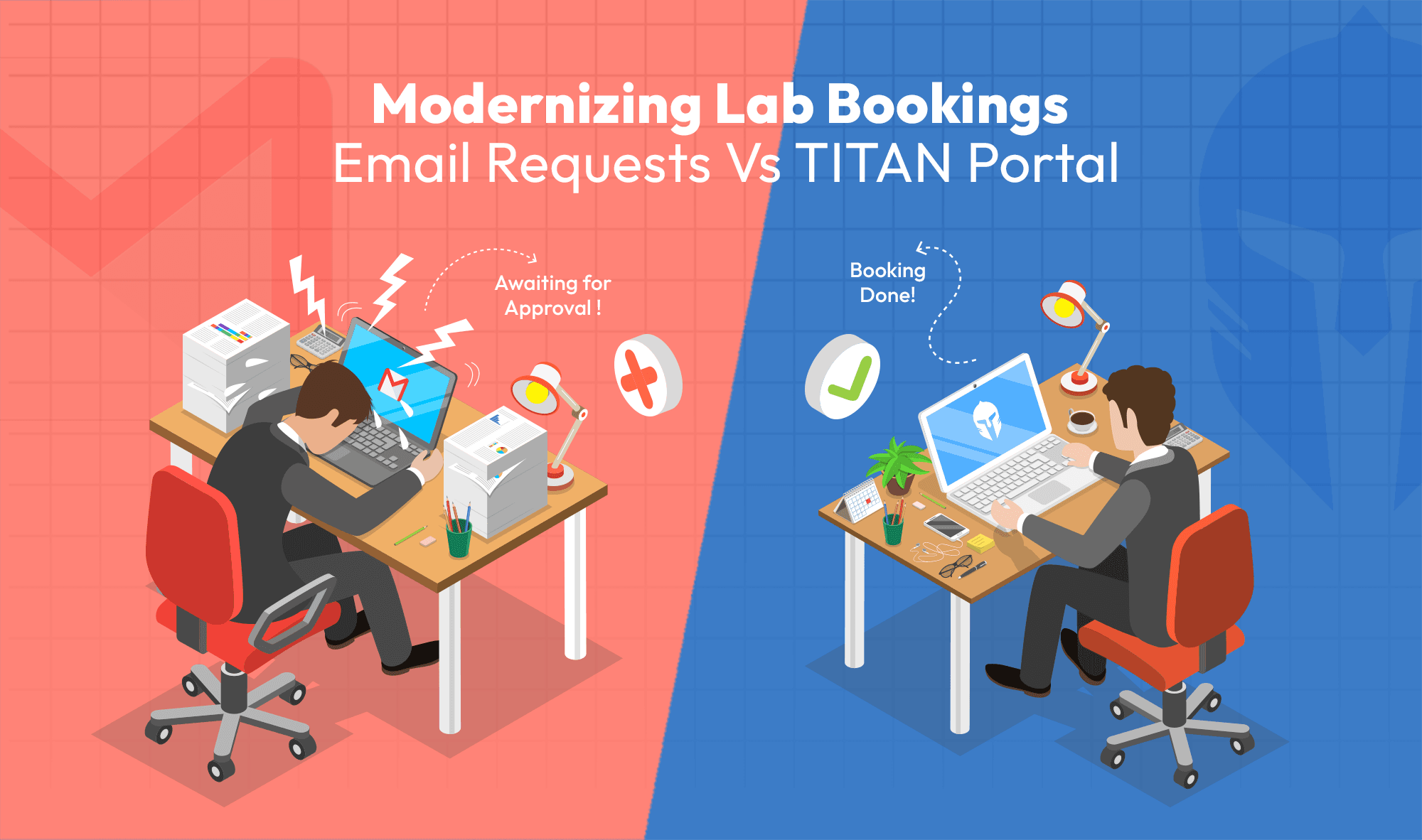

The team is working hard, but the answer lives across four different systems, two spreadsheets, endless email loops, a shared drive nobody fully maintains and the memory of one engineer who was on-site last Tuesday.

Leadership's role in any testing and validation programme is more than oversight; it is also about intervention. When something goes wrong, leaders need to identify it, understand the impact, and make a call.

Do we reschedule it? Do we pull resources from another programme? Do we escalate this?

They aren't asking for beautiful charts. They're asking three very specific questions:

What is happening? What is wrong? And what do I need to do about it?

Those questions only get answered on the spot when visibility is built into the system instead of being assembled after the fact.

The Story Every Test Manager Recognizes

Here's a scenario that plays out in automotive test labs more often than anyone likes to admit.

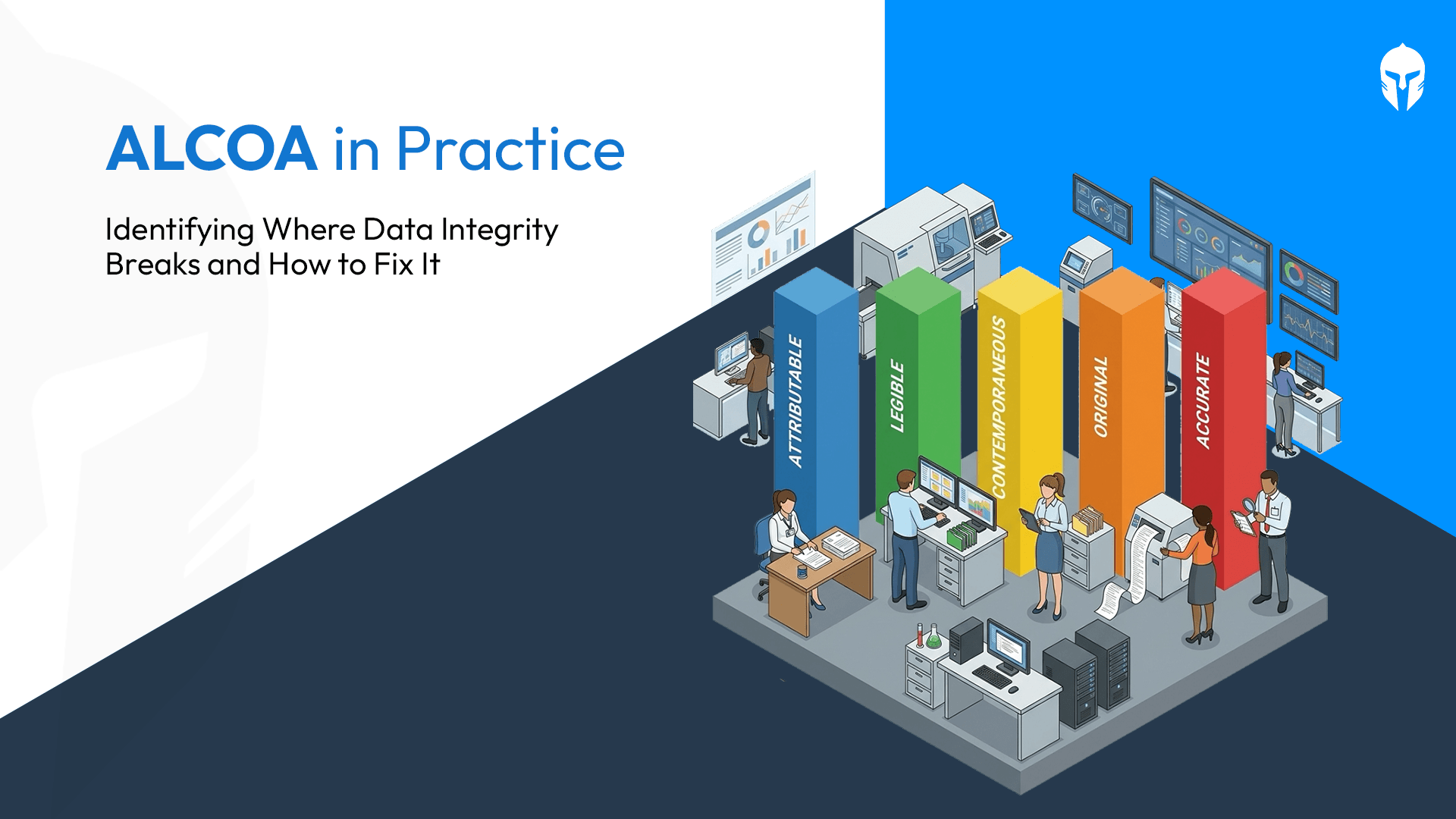

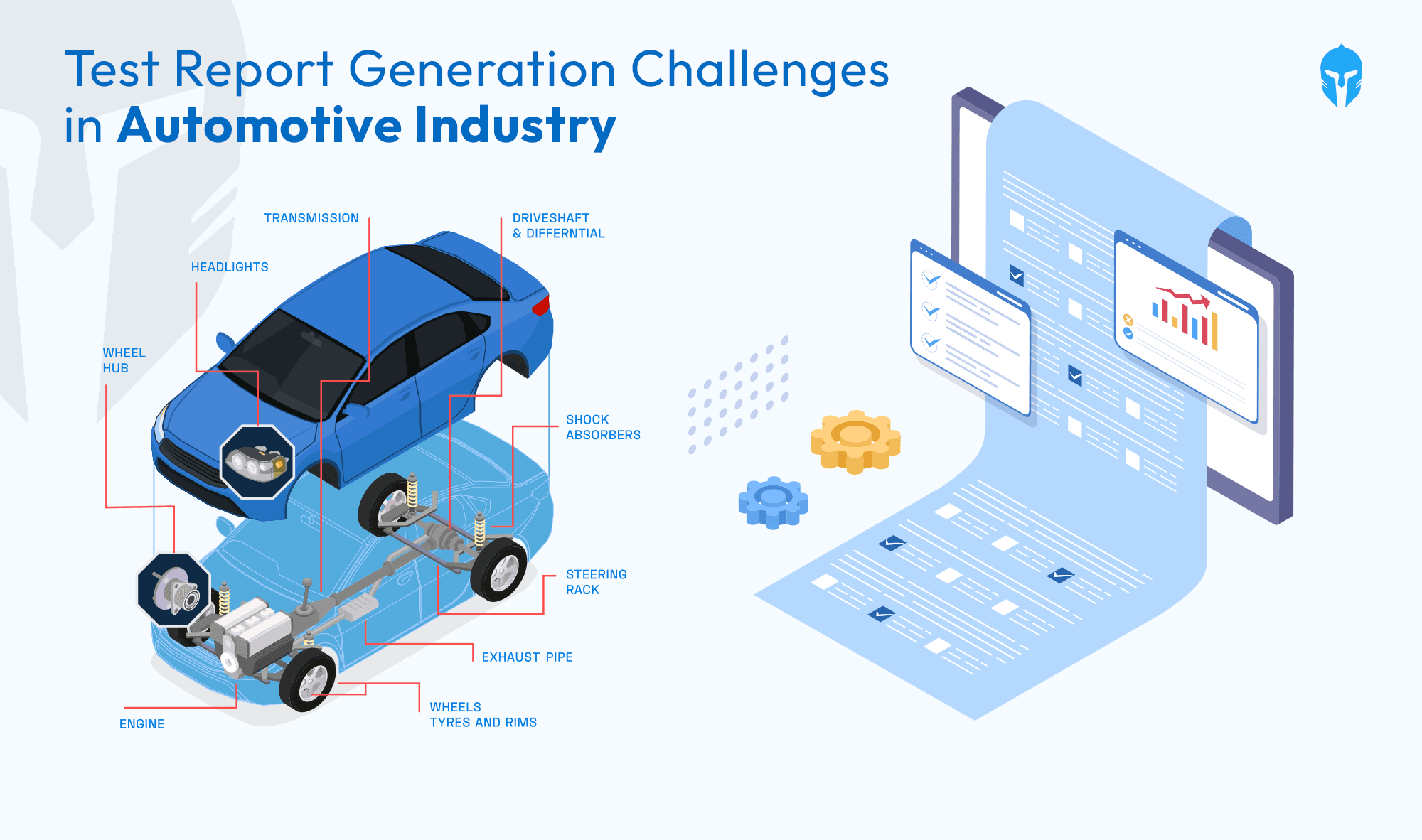

A test rig starts showing abnormal readings during a powertrain validation run. The technician notes it, but not in the central system. It goes into a local log, an email thread or a maintenance record that sits in a separate tool from the test scheduling platform.

Two weeks later, the same asset fails completely mid-test. The programme loses three weeks. The escalation call happens. And in hindsight, everyone can see the early warning was there all along; it just wasn't visible to the people who could have acted on it.

This is the real cost of disconnected systems in test lifecycle management. Not just inefficiency. Actual programme risk.

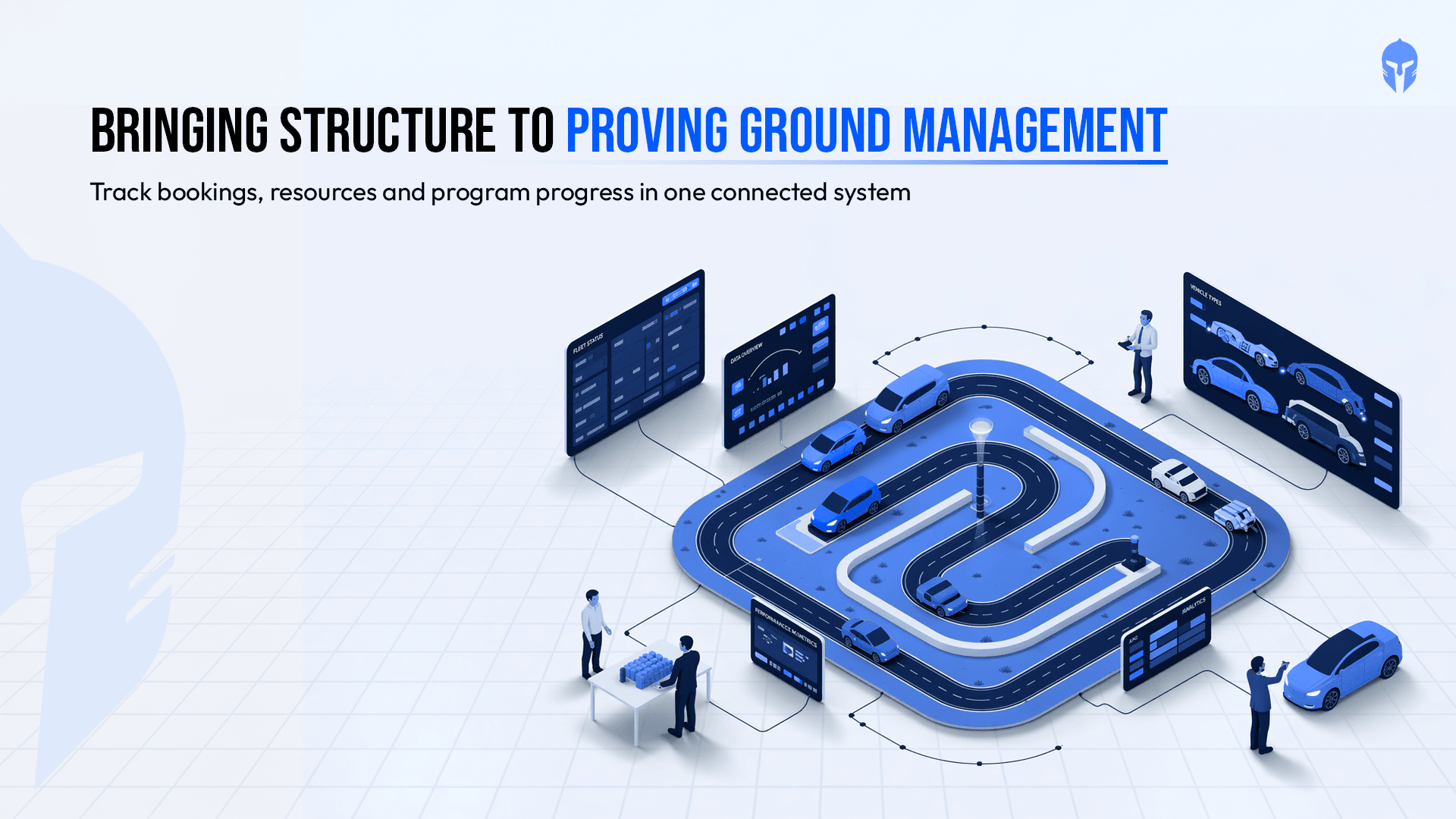

The question was never whether the equipment would eventually fail; it always will. The question is whether the right person knows about it early enough to do something. Which asset is causing trouble right now? Is it a recurring issue? When was it last calibrated? Is it booked for three programmes next week?

In a connected system, these answers are immediate. In a fragmented one, they require an investigation, and investigations take time that most programmes don't have.
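To make "immediate" concrete, here is a minimal sketch of how those four questions reduce to simple lookups once anomalies, calibration dates and bookings sit on one record. Everything in it is an assumption for illustration: the `Asset` fields, the 30-day window for "right now", the two-anomaly threshold for "recurring" and the 7-day definition of "next week" are hypothetical, not how any particular platform models its data.

```python
from dataclasses import dataclass, field
from datetime import date, timedelta


@dataclass
class Asset:
    # Hypothetical schema: a real platform would define its own fields.
    asset_id: str
    last_calibrated: date
    anomaly_dates: list[date] = field(default_factory=list)
    bookings: list[tuple[str, date]] = field(default_factory=list)  # (programme, booked day)


def asset_snapshot(asset: Asset, today: date) -> dict:
    """Answer the four asset questions from one connected record."""
    # Assumption: "right now" means an anomaly logged in the last 30 days.
    recent = [d for d in asset.anomaly_dates if today - d <= timedelta(days=30)]
    # Assumption: "next week" means the 7 days after today.
    upcoming = {p for p, d in asset.bookings if today < d <= today + timedelta(days=7)}
    return {
        "causing_trouble": bool(recent),
        "recurring": len(recent) >= 2,
        "days_since_calibration": (today - asset.last_calibrated).days,
        "programmes_next_week": sorted(upcoming),
    }


# Illustrative data only.
rig = Asset(
    asset_id="RIG-07",
    last_calibrated=date(2024, 1, 10),
    anomaly_dates=[date(2024, 3, 1), date(2024, 3, 12)],
    bookings=[("P1", date(2024, 3, 18)), ("P2", date(2024, 3, 19)), ("P3", date(2024, 3, 20))],
)
snapshot = asset_snapshot(rig, today=date(2024, 3, 15))
print(snapshot)
```

The point of the sketch is not the thresholds, which any lab would tune. It is that none of the answers needs an investigation when the three data sources share one record.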

Clarity Delayed Is Progress Denied

When high volume, high variety and constant variation are all present without centralized visibility, the cost compounds fast.

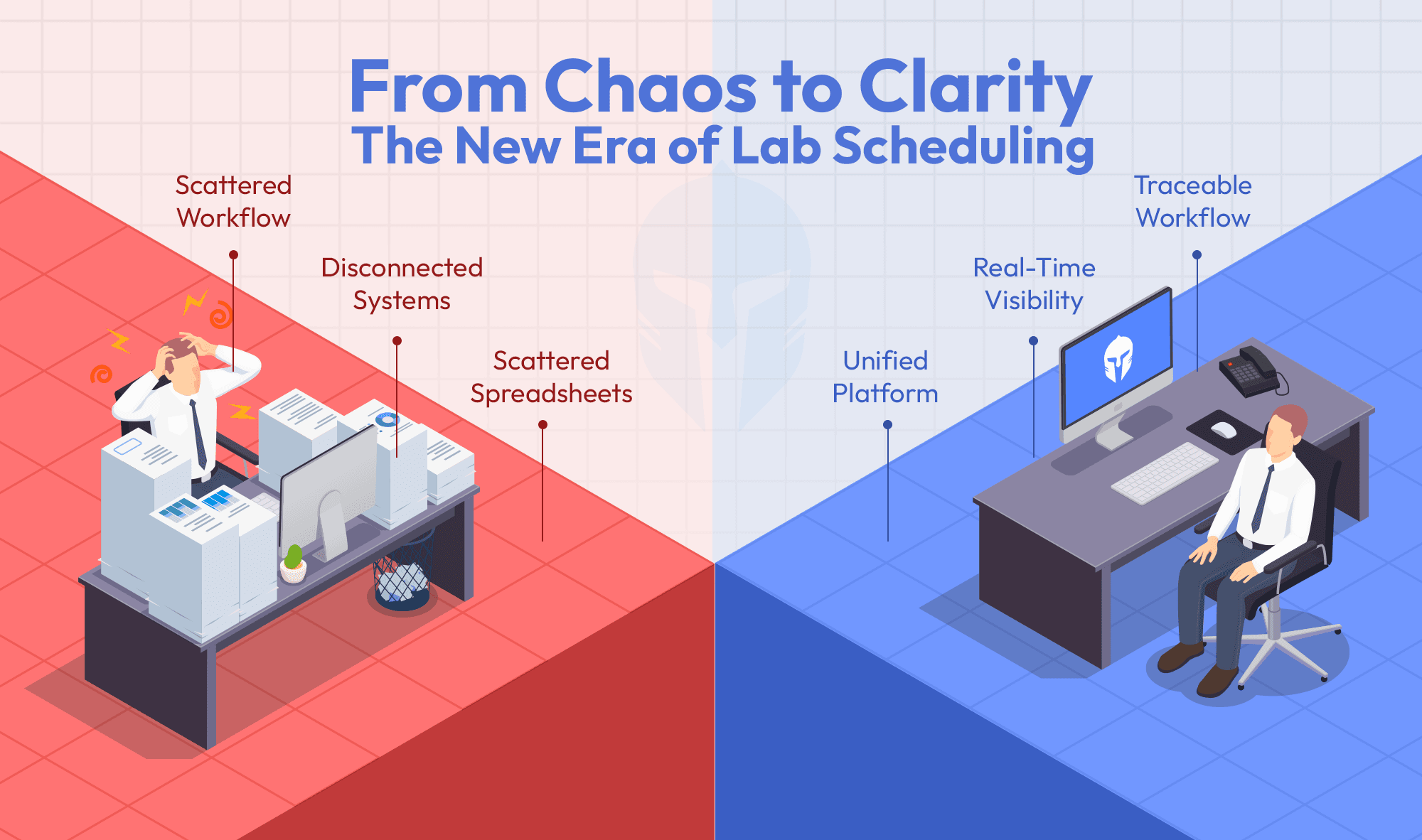

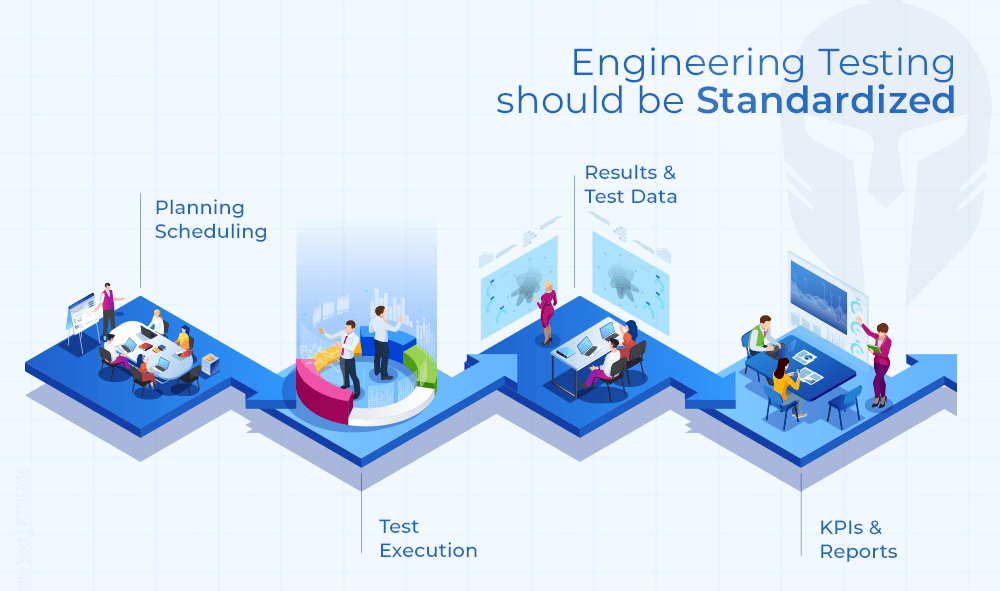

One team tracks DVP progress in a spreadsheet. Equipment status lives in a separate maintenance log. Test requests arrive by email. Issues get raised in a project management tool that has no connection to the test execution layer. Somewhere in the middle of all this, a programme is either on track or it isn't, and nobody can say with confidence which.

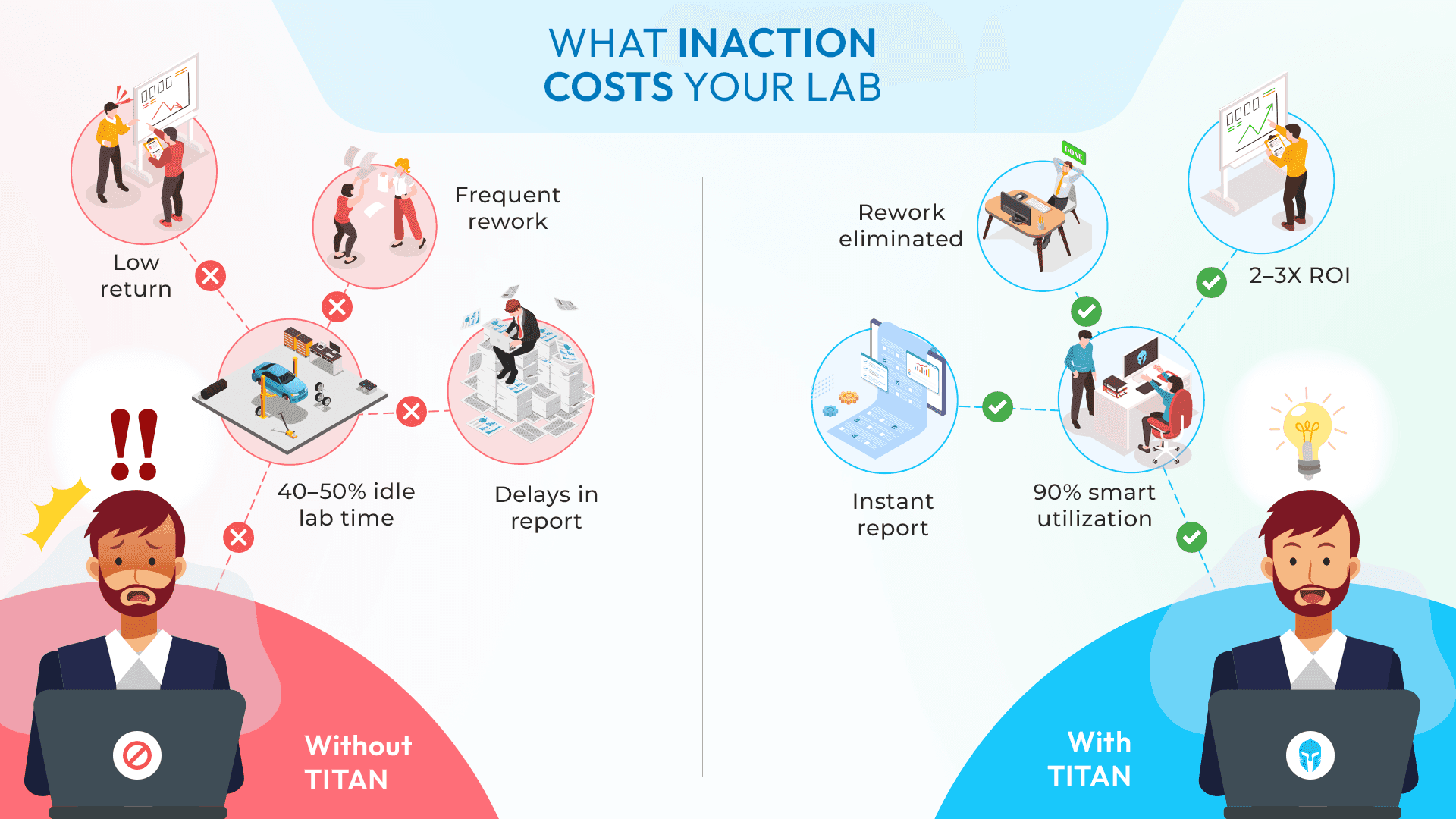

In fragmented environments, this kind of information gap drives scheduling delays up by 35-40%. The delay comes from reconciliation time, not from resource shortages alone.

Someone always has to pull status manually from five sources before leadership can get a straight answer, and by then the meeting is already running on assumptions.

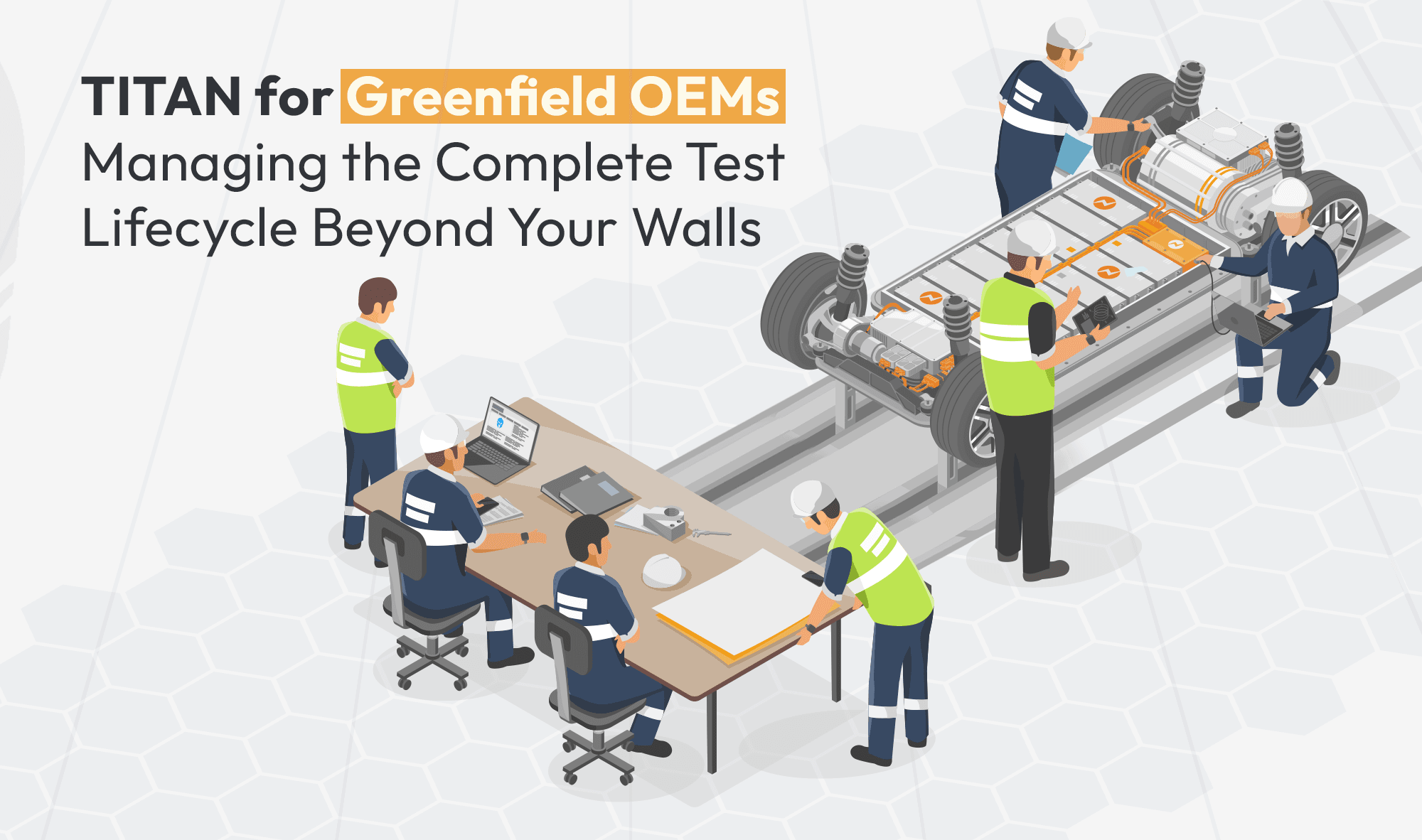

Automotive validation programmes involve test engineers, lab managers, programme managers, OEM stakeholders, Tier-1 suppliers and many others, each needing a different slice of the same information and all working from different tools.

The result is predictable: inconsistent updates, missed maintenance windows, double-booked equipment and decisions made without the full picture.
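Double-booked equipment is a good example of a problem that is trivial to catch once bookings live in one place. The sketch below is a minimal illustration, assuming a hypothetical `Booking` record with an asset, a programme and a start and end time; it flags any two bookings that claim the same asset over overlapping periods.

```python
from datetime import datetime
from itertools import combinations
from typing import NamedTuple


class Booking(NamedTuple):
    # Hypothetical record shape, for illustration only.
    asset_id: str
    programme: str
    start: datetime
    end: datetime


def find_double_bookings(bookings):
    """Return pairs of bookings that claim the same asset at overlapping times."""
    conflicts = []
    for a, b in combinations(bookings, 2):
        same_asset = a.asset_id == b.asset_id
        # Two half-open intervals [start, end) overlap when each starts before the other ends.
        overlaps = a.start < b.end and b.start < a.end
        if same_asset and overlaps:
            conflicts.append((a, b))
    return conflicts


# Illustrative data only.
bookings = [
    Booking("RIG-07", "P1", datetime(2024, 3, 18, 8), datetime(2024, 3, 18, 16)),
    Booking("RIG-07", "P2", datetime(2024, 3, 18, 14), datetime(2024, 3, 19, 10)),
    Booking("RIG-09", "P3", datetime(2024, 3, 18, 8), datetime(2024, 3, 18, 16)),
]
conflicts = find_double_bookings(bookings)
for a, b in conflicts:
    print(f"{a.asset_id}: {a.programme} overlaps {b.programme}")
```

Comparing every pair is fine for a lab-sized schedule. The check itself only works if every booking is visible to it, which is exactly what separate tools prevent.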

Visibility Is a Choice

Volume, variety and variation are fixed realities of test lab operations. You can't reduce them. You can't simplify your way out of programme complexity.

But visibility? That's not a given; it's a function of the systems you operate with.

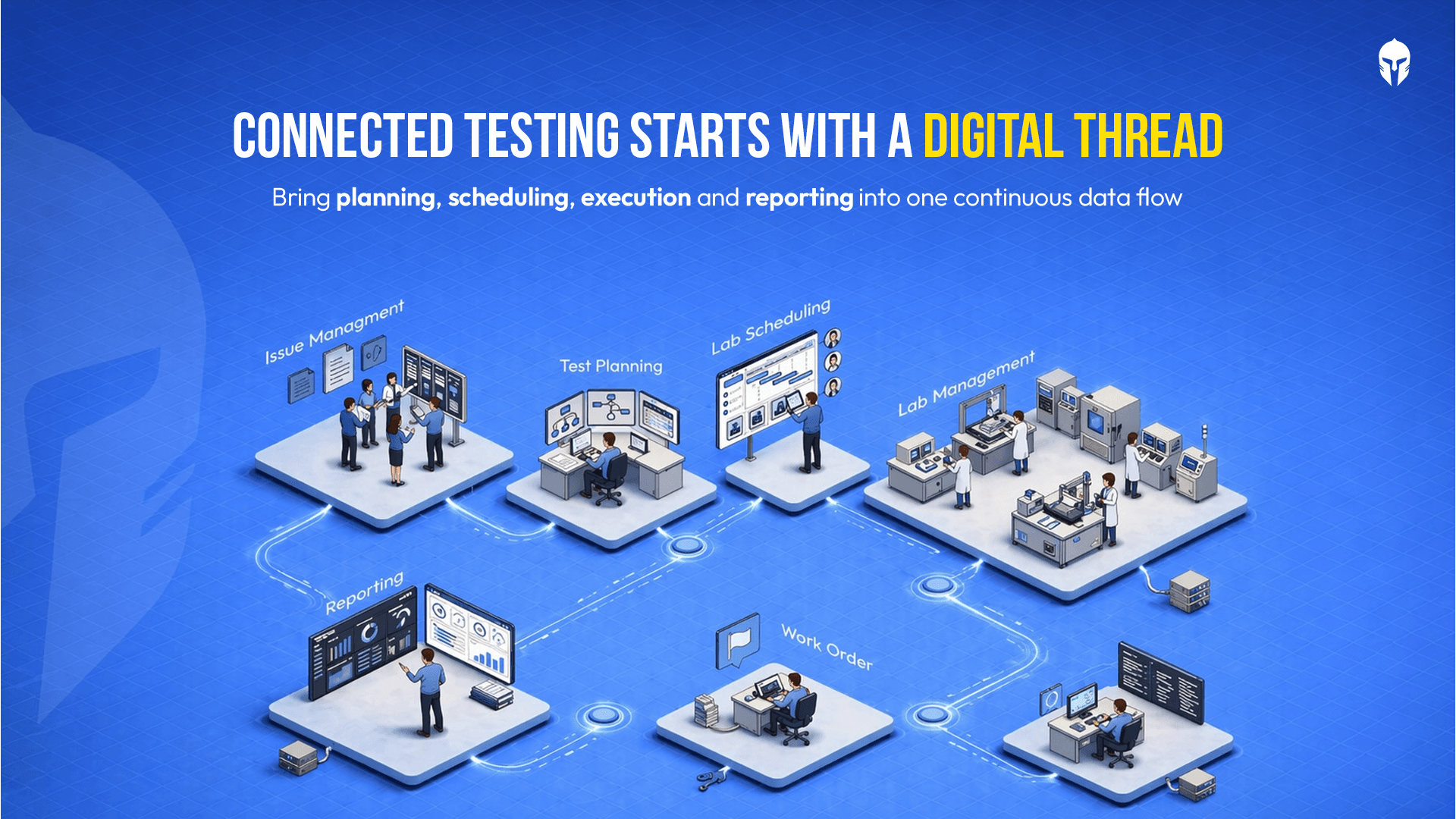

When asset status, maintenance schedules, equipment availability, DVP progress and issue tracking are connected in one place, problems surface before they become emergencies. Leaders stop chasing engineers for updates. Scheduling conflicts fall by up to 45%. Reporting turnaround drops by up to 50%. And when something goes wrong on the floor, the right person knows about it immediately, not three days later in a status meeting.

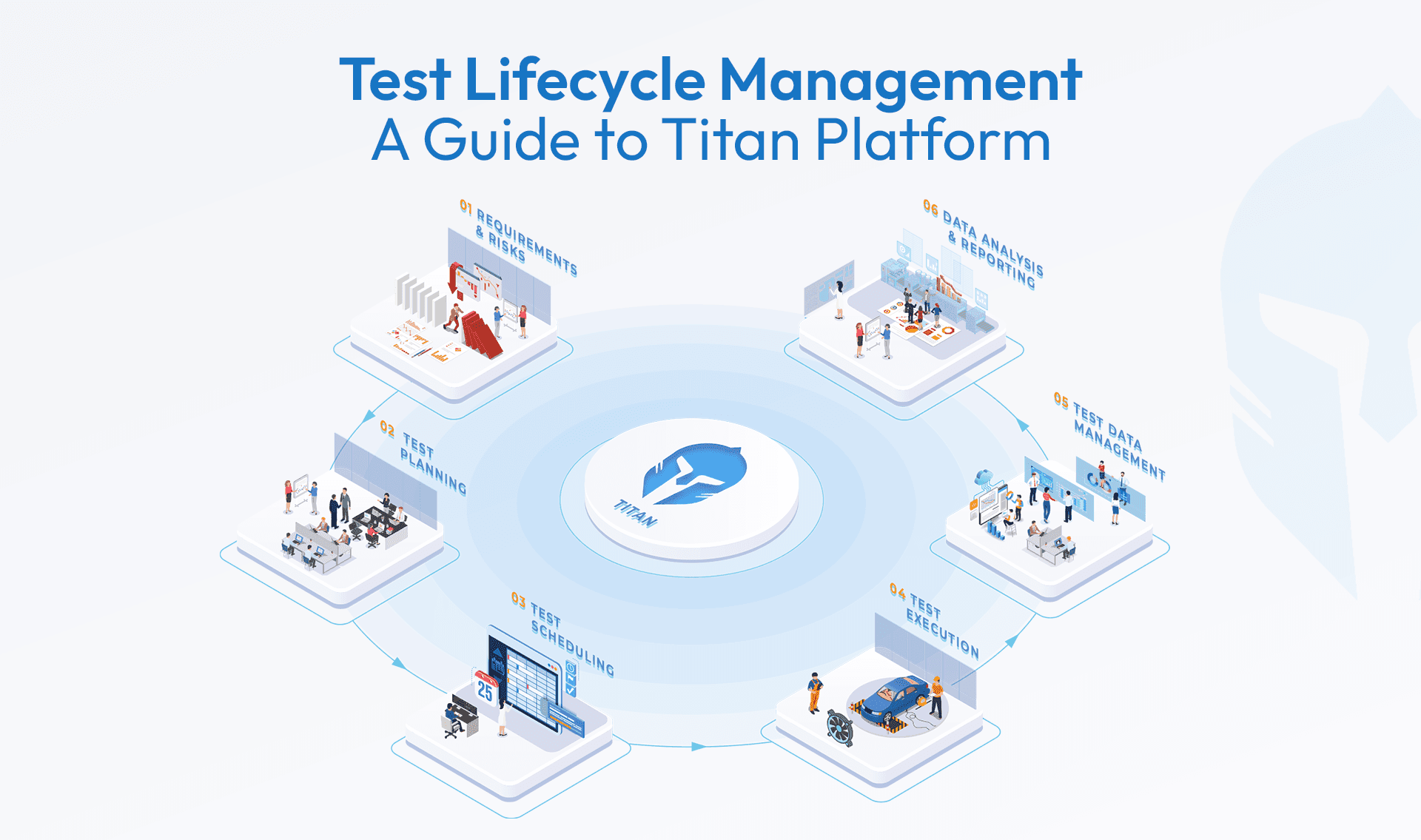

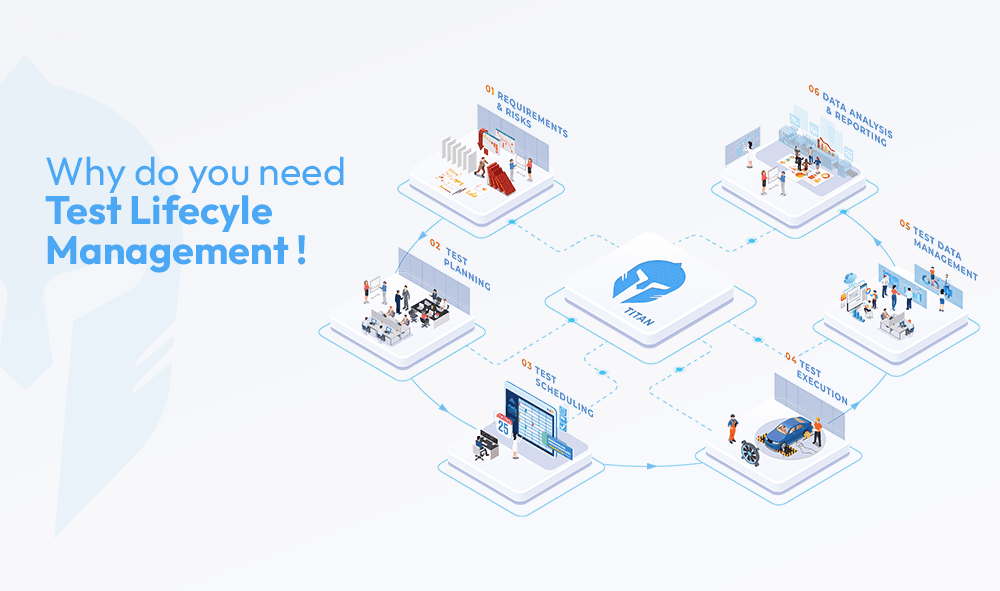

That's what TITAN is built to do: give you one dashboard where you can visualize everything you need in a single unified platform.

Surface the information that drives decisions, before those decisions become urgent.

The complexity isn't going away. The question is whether you're watching it or waiting to hear about it.

Your Dashboard Should Answer the Hard Questions Instantly

Know what’s happening, what’s at risk, and what to do, all in one place.