Test Data Management: Best Practices for High-Volume Labs

Author

Neerav Singh

Technical Product Specialist

Reading Time

4 min read

- Centralize Everything Into One Structured Repository

- Make Data Searchable and Instantly Accessible

- Don't Let File Size Become a Bottleneck

- Enforce Structure Through Hierarchy, Not Just Habit

- Build Compliance into the Data Layer

- Enable Collaboration Without Compromising Control

- Treat Data Management as Part of the Test Lifecycle, Not an Afterthought

- The Bottom Line

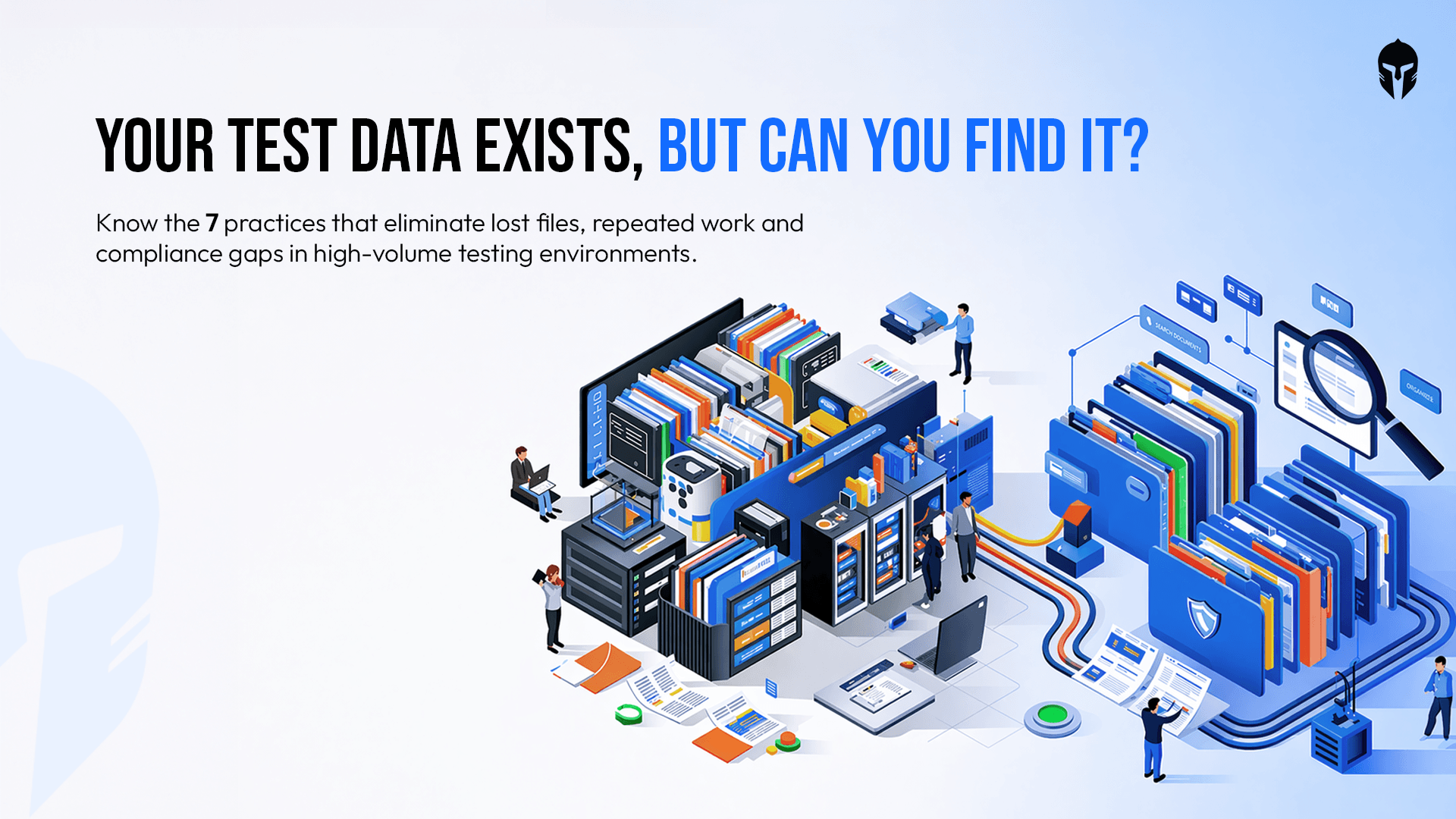

In a high-volume R&D lab, data is everywhere, and that is exactly the problem.

Test files, equipment logs, video recordings, structured forms, raw observations and images pile up across teams, projects and multiple tools. Without a deliberate approach to managing all of it, the result is predictable: lost data, repeated work, compliance gaps and engineering teams spending their time searching for information instead of acting upon it.

For labs trusted by the pioneers of the industry, managing test data is a competitive concern.

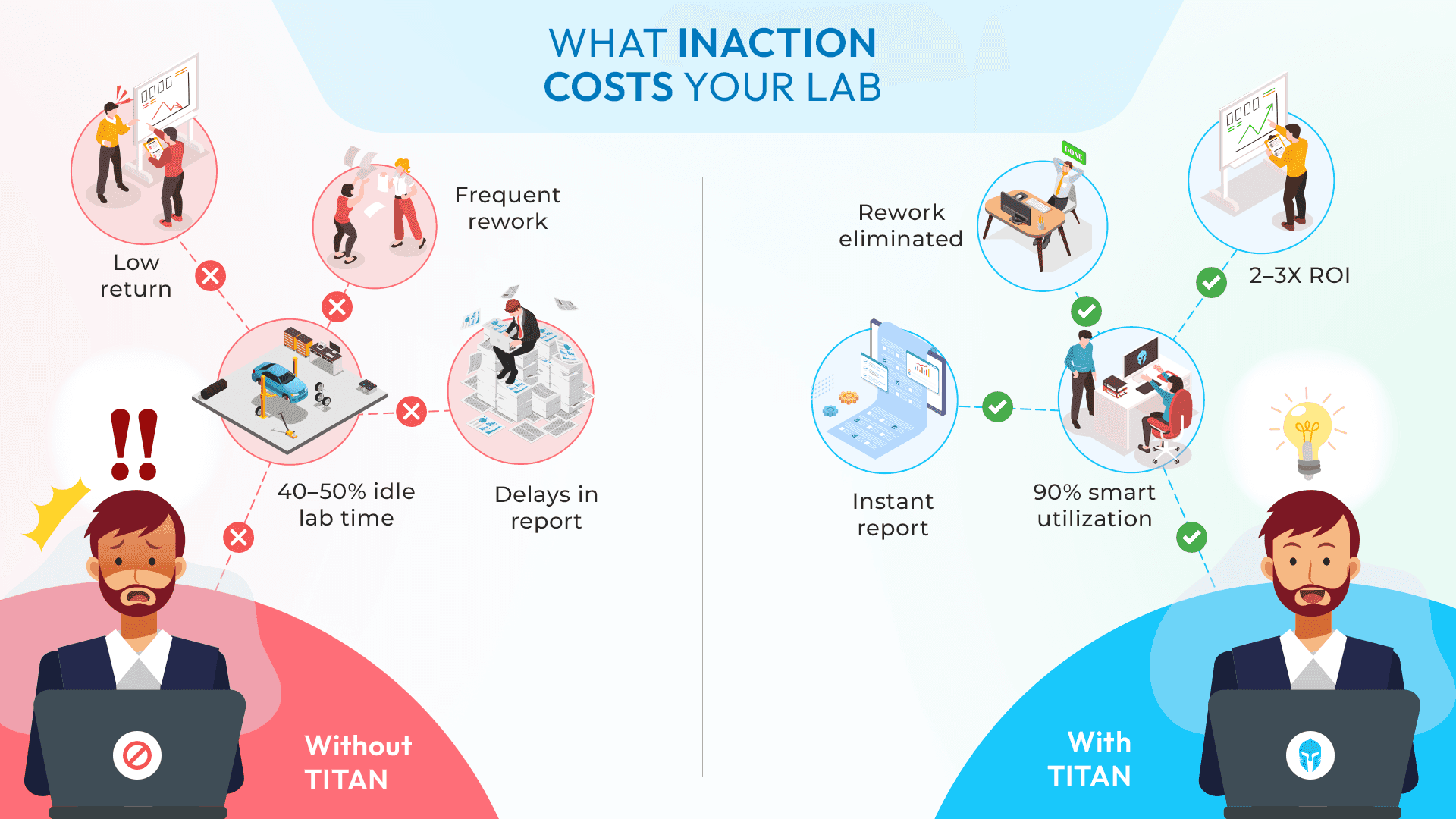

Organizations report that data issues cause 15% to 20% of test failures. Better test data management can recover much of this waste.

Conversely, well-managed test data enables comprehensive coverage, reliable execution and meaningful validation. Yet most organizations treat test data as an afterthought, creating it reactively and maintaining it haphazardly.

Here's what best-in-class test data management actually looks like.

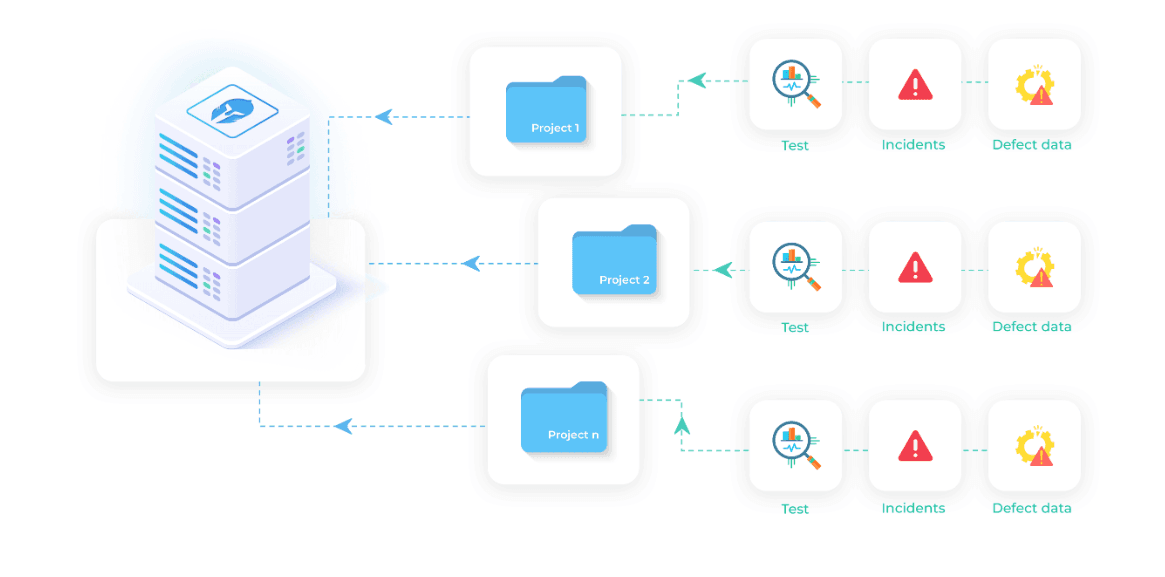

Centralize Everything Into One Structured Repository

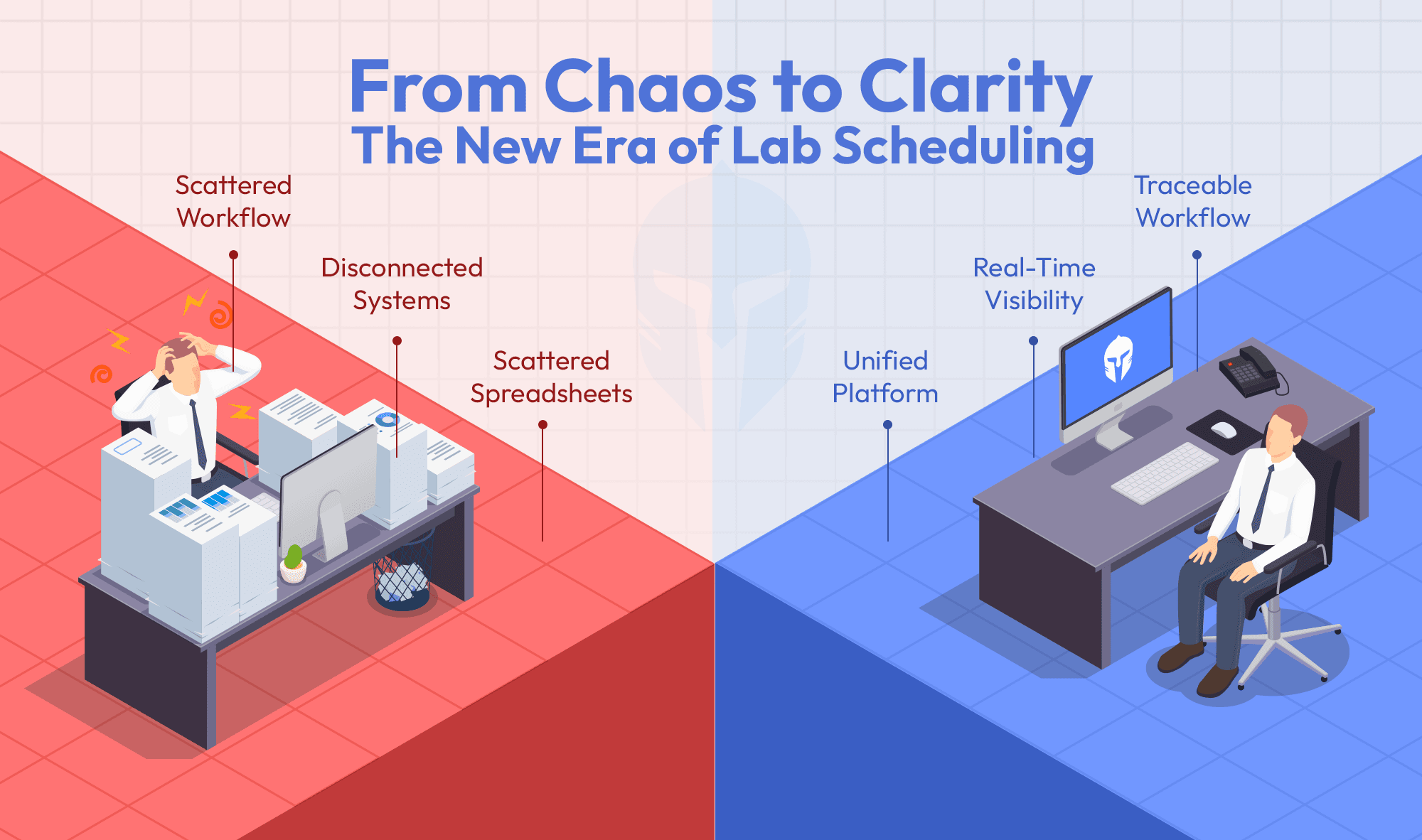

The most common failure in high-volume labs is a fragmented data landscape. Teams store files in different locations, use inconsistent naming conventions and operate disconnected from one another. The result is an information flow that nobody fully controls.

The foundation of good test data management is a centralized repository: a single, structured platform where all test-related information lives. This means test files, videos, external attachments, equipment data, observations and images are all captured and organized under a consistent project-level hierarchy.

When data is centralized, nothing gets lost or misplaced. Teams always know where to look, and more importantly, they can trust what they find.

TITAN, as a test lifecycle management platform, also supports integration with NAS (Network Attached Storage) and other existing storage systems, meaning labs don't have to replace their infrastructure, only connect it.

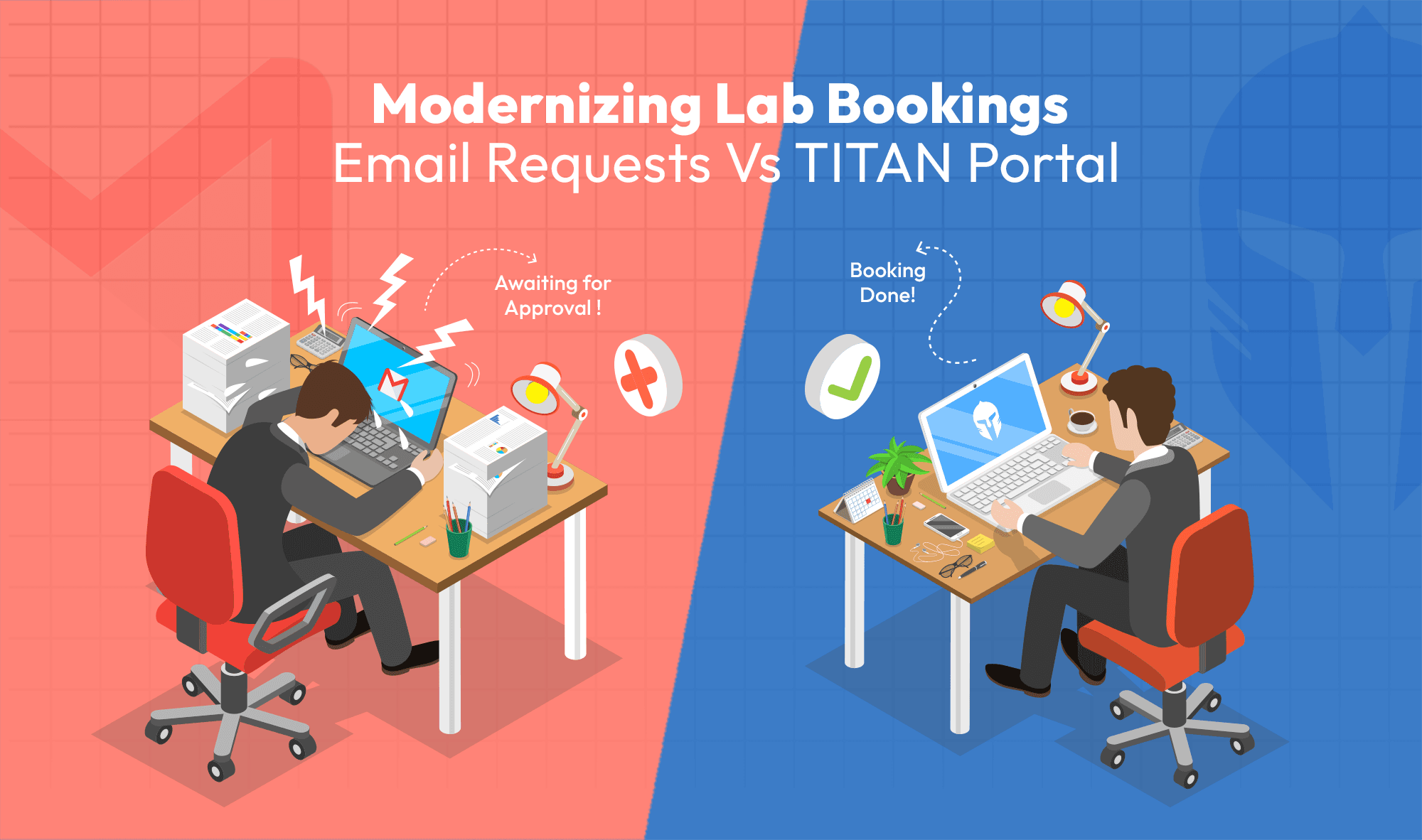

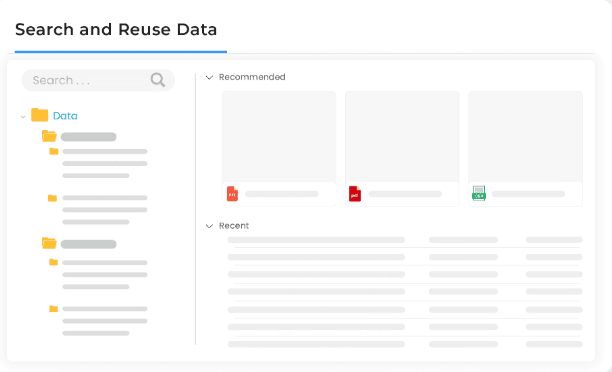

Make Data Searchable and Instantly Accessible

Centralization alone isn't enough. If your teams can't find what they need quickly, the repository becomes just another place data goes to sit unused.

High-volume labs need the ability to search, filter and preview files instantly, not just within a single project, but across projects and test requests. Cross-project search dramatically reduces the time engineers spend hunting for historical test data, prior results or reference files.

In-app file preview adds another layer of efficiency. Being able to check a file before downloading it sounds like a small detail, but in a lab running dozens of concurrent tests, it saves meaningful time every day. According to TITAN's estimates, structured, searchable data management saves teams up to 50% of the time typically spent searching for and reusing information.
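To make the idea concrete, here is a minimal sketch of cross-project search over a shared metadata index. It is an illustration only, not TITAN's API: the `FileEntry` fields, the `search` function and the sample data are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FileEntry:
    """One indexed file; all field names here are hypothetical."""
    project: str
    test_request: str
    name: str
    tags: frozenset


def search(index, text="", project=None, tag=None):
    """Return entries whose name contains `text` (case-insensitive),
    optionally narrowed to one project and/or one tag."""
    needle = text.lower()
    return [
        entry for entry in index
        if needle in entry.name.lower()
        and (project is None or entry.project == project)
        and (tag is None or tag in entry.tags)
    ]


# Sample index spanning two projects (made-up data).
index = [
    FileEntry("P1", "TR-1", "brake_run.csv", frozenset({"brake"})),
    FileEntry("P2", "TR-7", "Brake_video.mp4", frozenset({"brake", "video"})),
    FileEntry("P2", "TR-8", "engine.log", frozenset({"powertrain"})),
]

# One query reaches files in every project; filters narrow it down.
all_brake_files = search(index, "brake")
brake_files_in_p2 = search(index, "brake", project="P2")
```

Because every project writes to the same index, a single query covers all of them, and the optional filters narrow the result without a second lookup.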

Don't Let File Size Become a Bottleneck

Large video files and raw data outputs are a reality in modern testing environments, particularly in automotive, crash and powertrain testing. A system that can't handle them pushes teams toward workarounds such as local drives, personal storage folders and email attachments, all of which break the centralized model.

The right approach is to support linking to large files stored externally, so they remain manageable and accessible without hitting storage limits. This keeps the data environment clean while ensuring that even the heaviest test outputs are tied back to the right test request, asset and record.
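As a rough sketch of what linking can look like, the repository stores a small record pointing at the file rather than the file itself. Everything below is an assumption made for illustration (the `ExternalFileLink` fields, the function names and the example path) and does not describe any particular product.

```python
import hashlib
from dataclasses import dataclass


@dataclass(frozen=True)
class ExternalFileLink:
    """Metadata kept in the repository in place of the large file."""
    test_request_id: str  # hypothetical ID of the owning test request
    location: str         # where the file actually lives, e.g. a NAS path
    size_bytes: int
    sha256: str           # lets a reader verify the external copy later


def link_external_file(test_request_id, location, content):
    """Build a link record from the file's bytes without storing them.
    A real system would stream the file instead of holding it in memory."""
    return ExternalFileLink(
        test_request_id=test_request_id,
        location=location,
        size_bytes=len(content),
        sha256=hashlib.sha256(content).hexdigest(),
    )


def is_unchanged(link, content):
    """Check that the external file still matches the recorded checksum."""
    return hashlib.sha256(content).hexdigest() == link.sha256


# Example with stand-in bytes and a made-up storage path.
video = b"stand-in for a large crash-test video"
link = link_external_file("TR-001", "/mnt/nas/crash/run42.mp4", video)
```

The record stays a few hundred bytes no matter how large the file is, and the checksum gives a way to confirm that the externally stored copy is still the one that was linked.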

TITAN, as a test lifecycle management platform, also supports integration with existing storage systems, meaning labs don't have to replace their infrastructure, only connect it.

Enforce Structure Through Hierarchy, Not Just Habit

In a high-volume environment, you cannot rely on individual engineers to consistently organize their own data. Structure needs to be built into the system itself.

A project-level data hierarchy ensures that every file, every attachment and every log entry is captured in the right context, linked to the correct test or work order. This structure is what makes data reusable rather than merely stored. It transforms test history from an archive into a working asset.
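One way to picture structure enforced by the system rather than by habit is a store that refuses any file without a valid context. The sketch below assumes a simple project, test request and work order hierarchy; the class and method names are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class WorkOrder:
    work_order_id: str
    files: list = field(default_factory=list)


@dataclass
class RequestNode:
    """A test request holding its work orders (hypothetical name)."""
    request_id: str
    work_orders: dict = field(default_factory=dict)


@dataclass
class Project:
    project_id: str
    test_requests: dict = field(default_factory=dict)

    def attach_file(self, request_id, work_order_id, filename):
        """Store a file only under an existing test request and work order."""
        try:
            work_order = self.test_requests[request_id].work_orders[work_order_id]
        except KeyError as missing:
            raise ValueError(f"unknown context: {missing}") from None
        work_order.files.append(filename)
        # The returned path encodes the full context of the file.
        return f"{self.project_id}/{request_id}/{work_order_id}/{filename}"


project = Project("P1", {"TR-1": RequestNode("TR-1", {"WO-1": WorkOrder("WO-1")})})
stored_path = project.attach_file("TR-1", "WO-1", "log.csv")
```

A file attached to a test request or work order that does not exist raises `ValueError` instead of landing in an unstructured folder, so the hierarchy holds even when individual habits vary.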

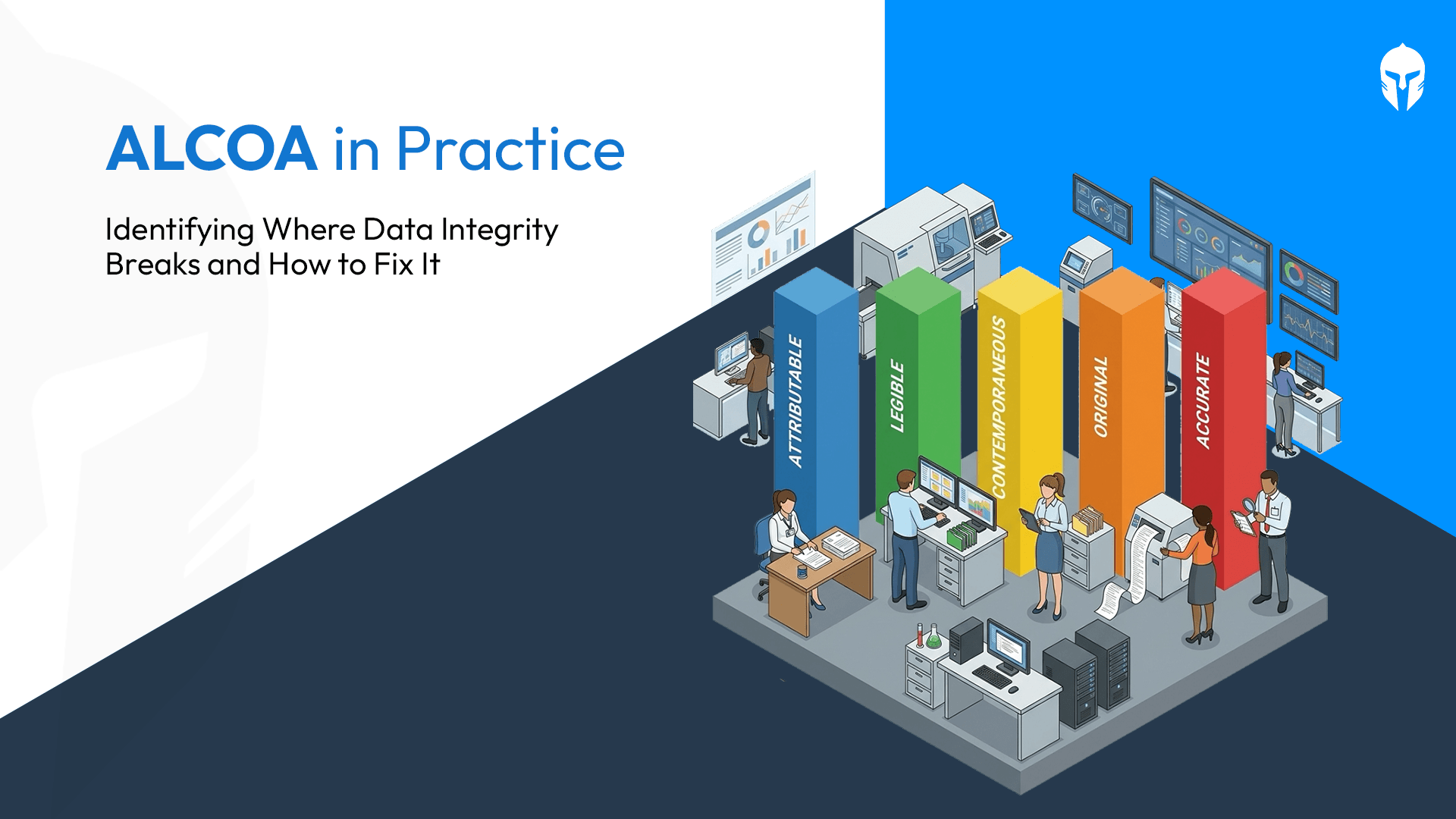

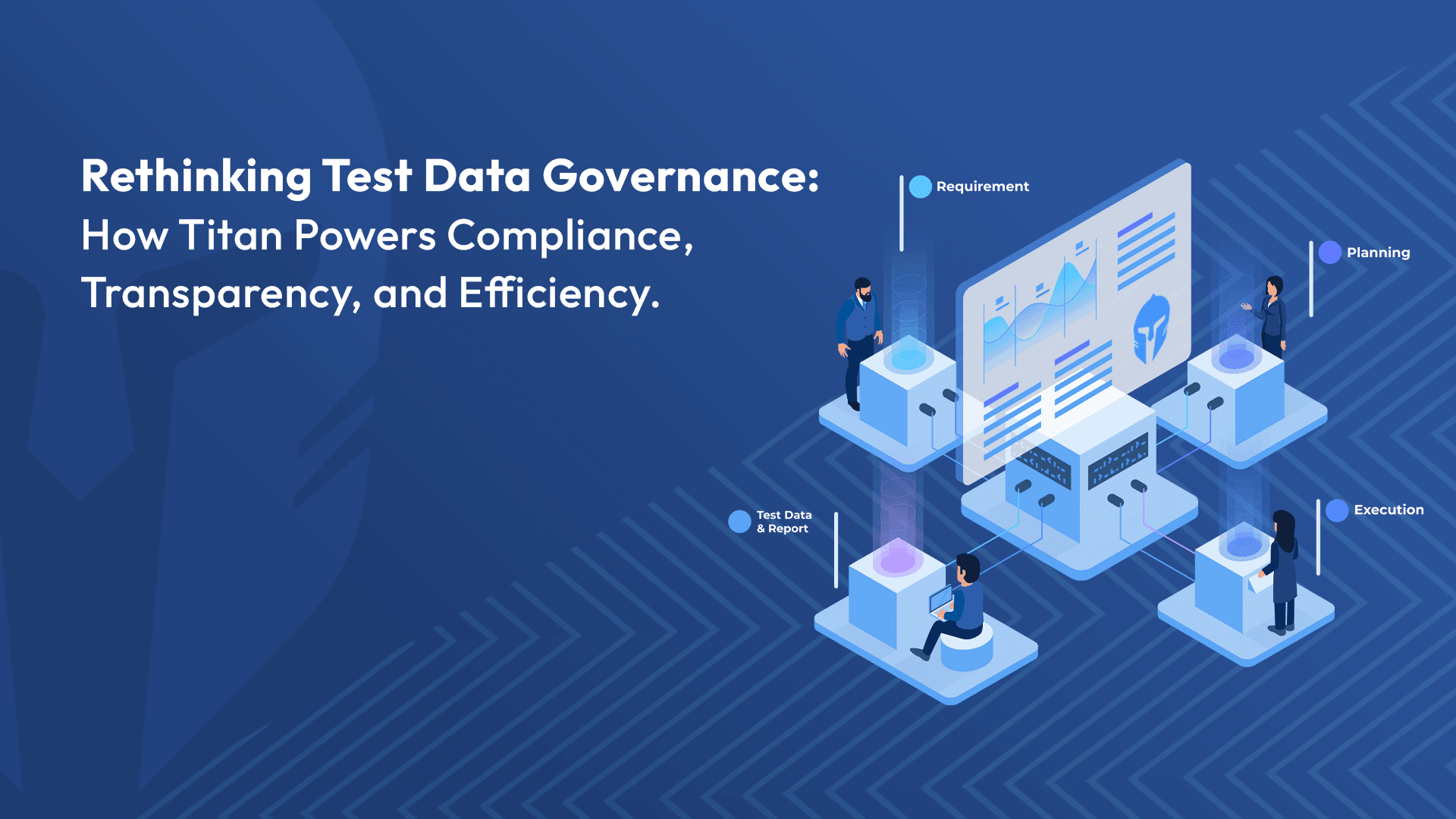

Build Compliance into the Data Layer

Audit readiness is not something labs can retroactively bolt on. Every access event and every modification to test data needs to be logged automatically, creating a trail that is available on demand and not assembled under pressure before a review.

Permission-based access controls ensure that the right people can see and edit the right data, while everyone else is appropriately restricted. Combined with full audit trails, this approach protects data integrity and maintains compliance without creating extra administrative burden for engineering teams.
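The combination of access control and automatic logging can be sketched in a few lines. This is an illustrative model only: the role names, the `ROLE_PERMISSIONS` mapping and the `AuditedStore` class are assumptions, not a description of how any specific platform implements it.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical role-to-action mapping; a real lab would define its own roles.
ROLE_PERMISSIONS = {
    "engineer": {"read", "edit"},
    "reviewer": {"read"},
}


@dataclass
class AuditedStore:
    records: dict = field(default_factory=dict)
    audit_log: list = field(default_factory=list)

    def access(self, user, role, action, record_id, new_value=None):
        """Check permission, log the attempt, then perform the action."""
        allowed = action in ROLE_PERMISSIONS.get(role, set())
        # Every attempt is logged, including the ones that are denied.
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "user": user,
            "action": action,
            "record_id": record_id,
            "allowed": allowed,
        })
        if not allowed:
            raise PermissionError(f"{role} may not {action} {record_id}")
        if action == "edit":
            self.records[record_id] = new_value
        return self.records.get(record_id)


store = AuditedStore()
store.access("asha", "engineer", "edit", "R1", "pass")
value_seen_by_reviewer = store.access("ben", "reviewer", "read", "R1")
```

Because the log entry is written inside the same call that checks the permission, the trail builds up as a side effect of normal work rather than as a separate task before a review.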

This is particularly critical for labs operating across automotive, aviation, railway and consumer electronics industries, where regulatory scrutiny is high and documentation standards are non-negotiable.

Enable Collaboration Without Compromising Control

One of the most persistent tensions in high-volume labs is between accessibility and control. Teams need to collaborate, often across locations, disciplines and devices, but open access creates risk.

The answer is centralized, secure data that all teams work from simultaneously. When everyone operates from the same source of truth, you eliminate the version confusion, the "which file is latest" conversations and the immense rework caused by teams acting on outdated information.

Reports from a structured test lifecycle management platform suggest that this kind of collaborative data structure drives a 70% increase in team productivity and a 40% reduction in human error, both of which trace directly back to teams working from consistent, reliable data.

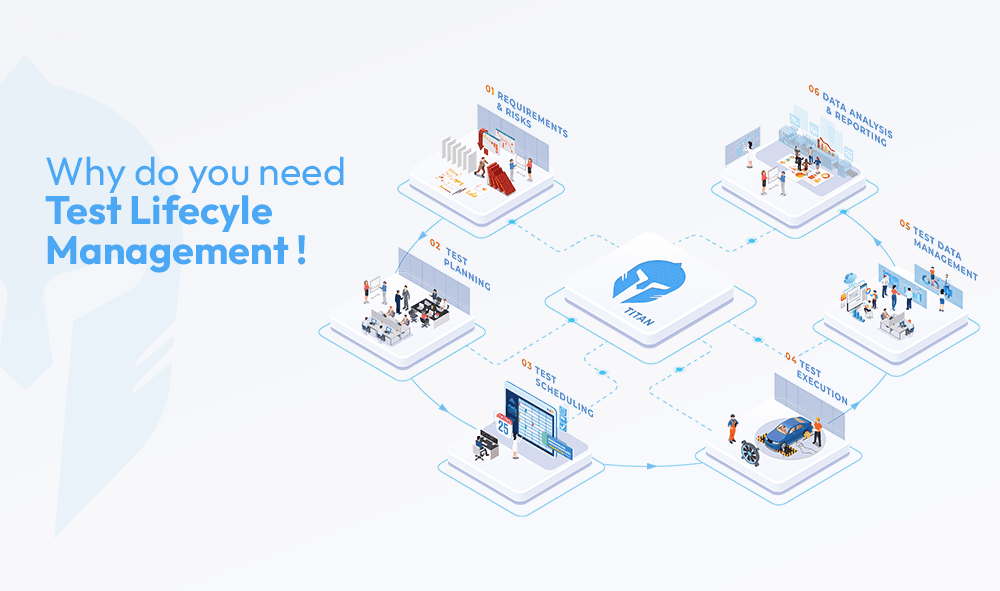

Treat Data Management as Part of the Test Lifecycle, Not an Afterthought

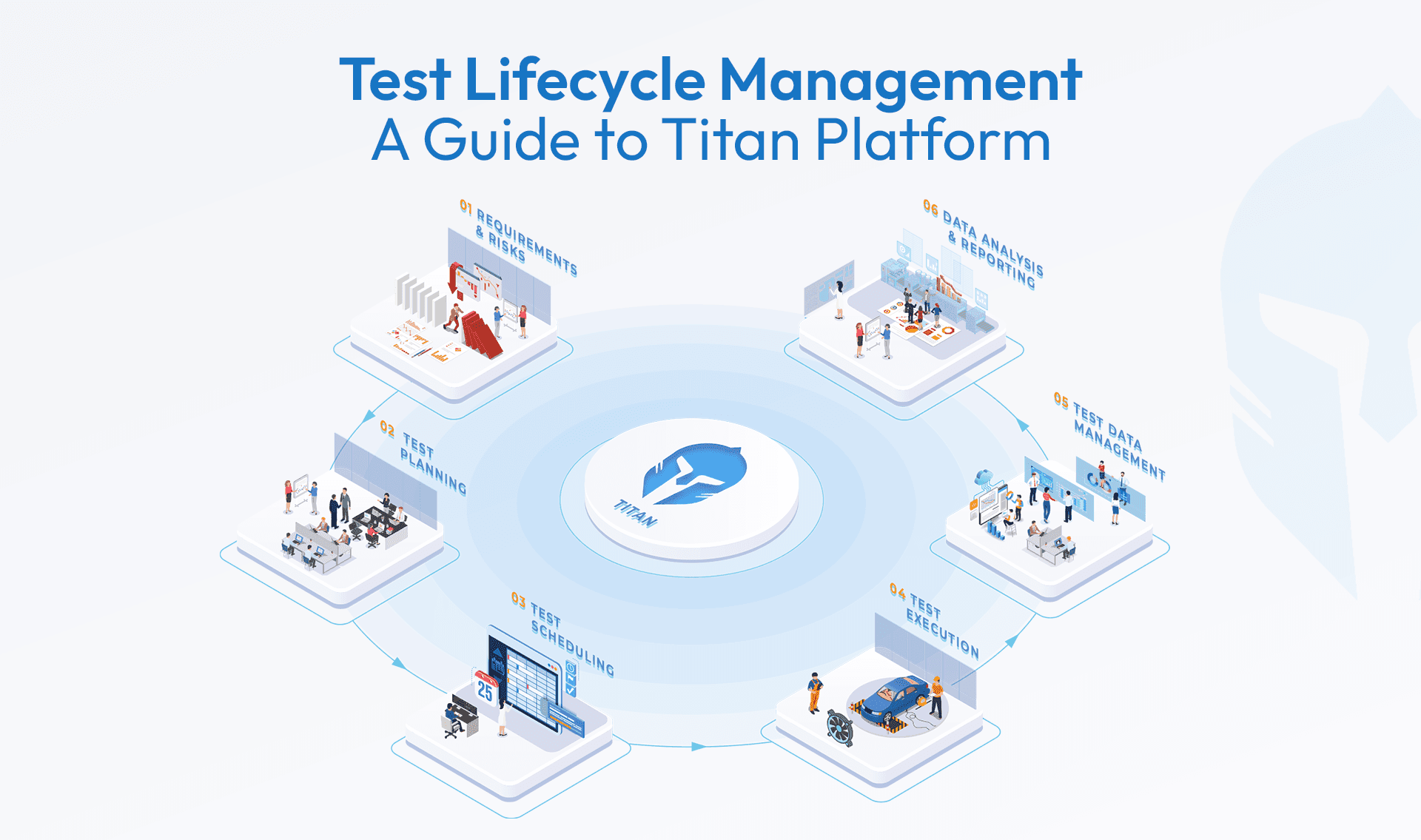

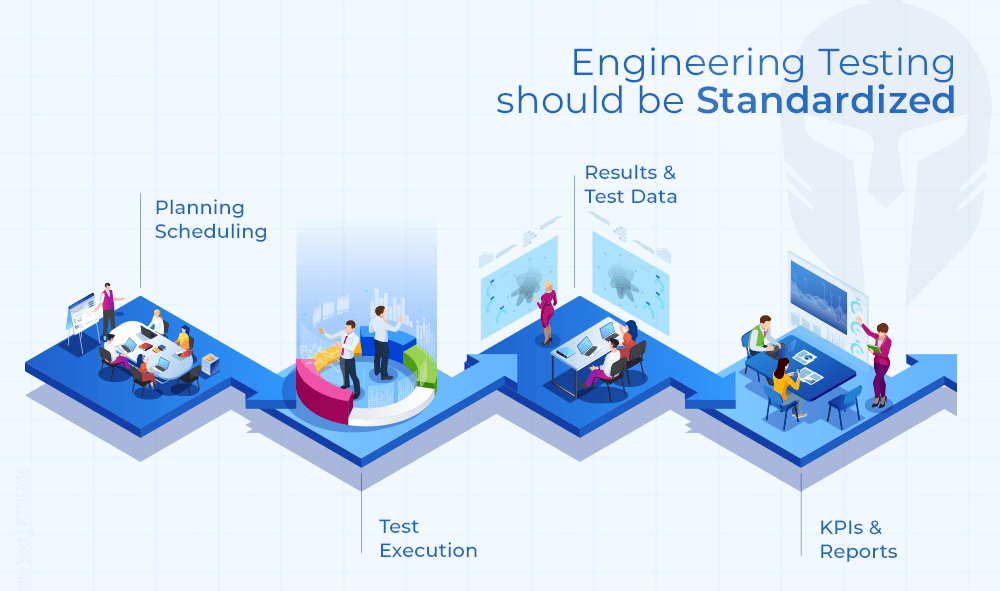

Perhaps the most important shift high-volume labs need to make is a cultural one. Test data management is not an administrative function that happens after testing is done. It is an integral part of the test lifecycle itself, from test requests and verification planning through execution, results and reporting.

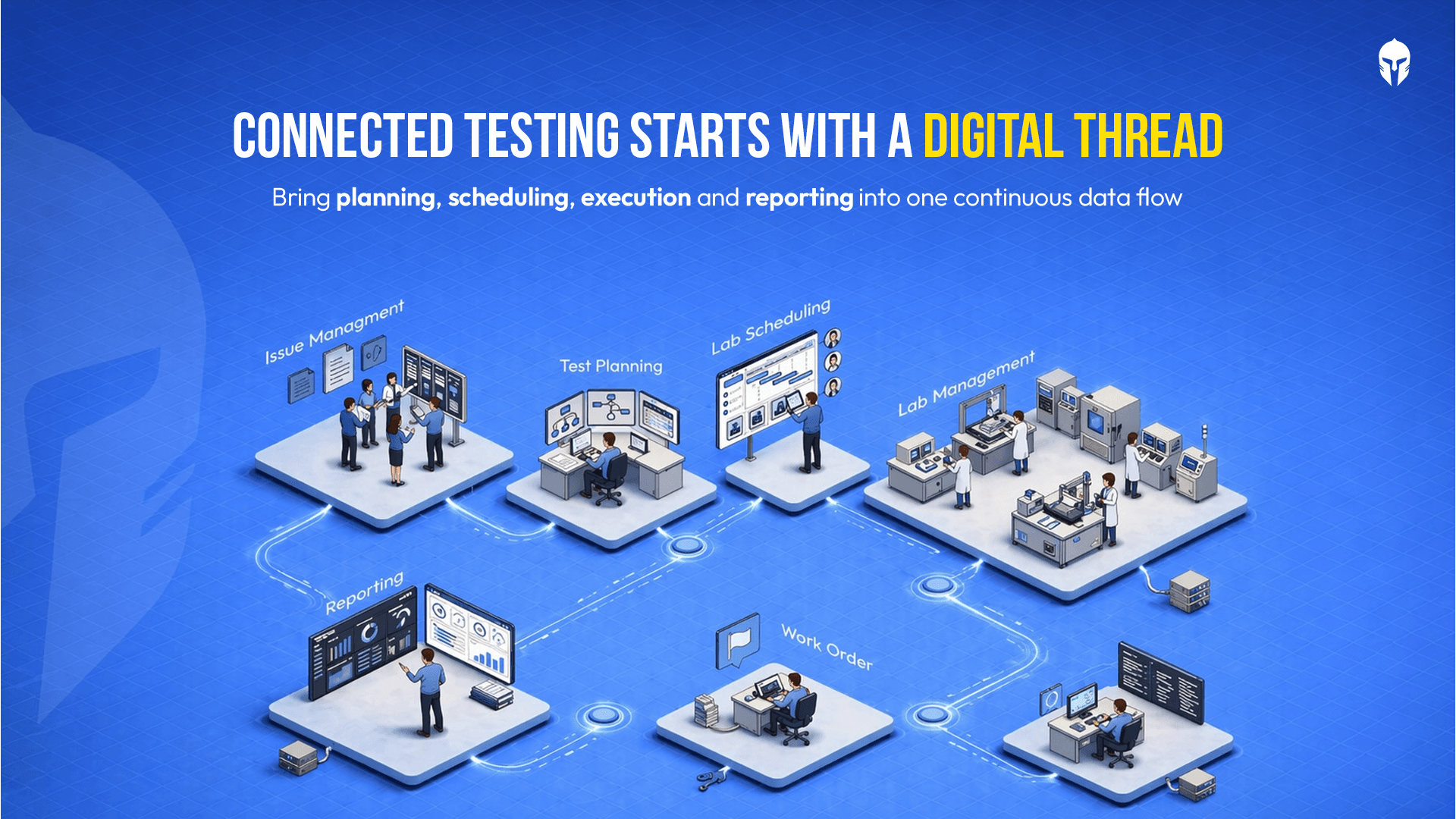

When data management is embedded in the same platform that handles traceability, test scheduling, lab management, work orders and issue tracking, the result is a connected, frustration-free workflow. Data doesn't fall through the cracks because there are no cracks to fall through.

TITAN's unified approach, bringing together Knowledge, Test, Lab, Prototype, Maintenance, Data and Work management in one place, exists precisely to solve this. Fragmented tools create fragmented data. A unified platform creates a digital thread that runs through every stage of the testing process.

The Bottom Line

High-volume labs suffer from a data management problem. Files exist, results exist and history exists, but without structure, search, control and connectivity, none of it delivers the value it should.

The labs that get this right, the ones that centralize and secure their test data and make it effortlessly accessible, are the ones that move faster, make fewer errors and stay audit-ready without the last-minute scramble.

That's what modern test data management makes possible.
